كيفية استخدام الإضافة

يوفر مُدمج الدردشة بالذكاء الاصطناعي في وقت التشغيل وظيفتين رئيسيتين: الدردشة من نص إلى نص وتحويل النص إلى كلام (TTS). تتبع كلتا الميزتين سير عمل مشابه:

- تسجيل رمز موفر واجهة برمجة التطبيقات (API) الخاص بك

- تكوين إعدادات خاصة بالميزة

- إرسال الطلبات ومعالجة الردود

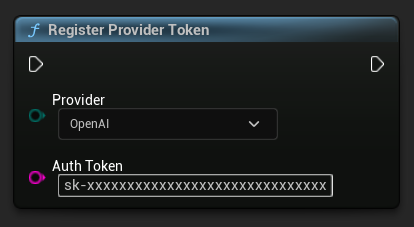

تسجيل رمز المزود

قبل إرسال أي طلبات، قم بتسجيل رمز موفر واجهة برمجة التطبيقات (API) الخاص بك باستخدام الدالة RegisterProviderToken.

يعمل Ollama محليًا ولا يتطلب رمز واجهة برمجة تطبيقات (API). يمكنك تخطي هذه الخطوة لـ Ollama.

- Blueprint

- C++

// Register an OpenAI provider token, as an example

UAIChatbotCredentialsManager::RegisterProviderToken(

EAIChatbotIntegratorOrgs::OpenAI,

TEXT("sk-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx")

);

// Register other providers as needed

UAIChatbotCredentialsManager::RegisterProviderToken(

EAIChatbotIntegratorOrgs::Anthropic,

TEXT("sk-ant-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx")

);

UAIChatbotCredentialsManager::RegisterProviderToken(

EAIChatbotIntegratorOrgs::DeepSeek,

TEXT("sk-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx")

);

etc

وظيفة الدردشة من نص إلى نص

يدعم البرنامج المساعد وضعين لطلب الدردشة لكل مزود:

طلبات الدردشة غير المتدفقة

استرجاع الرد الكامل في مكالمة واحدة.

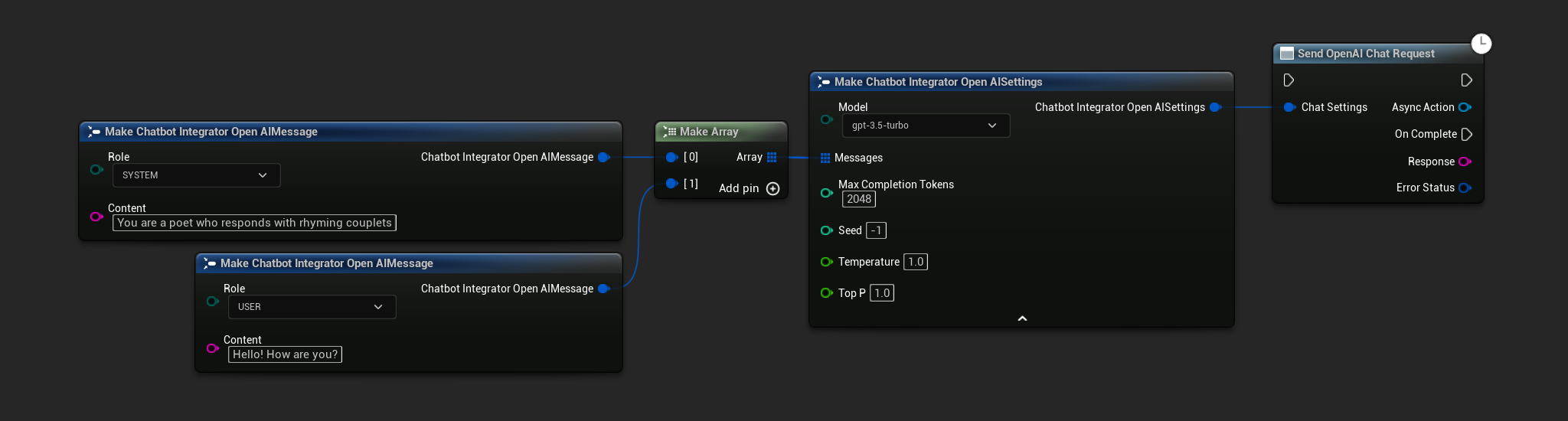

- OpenAI

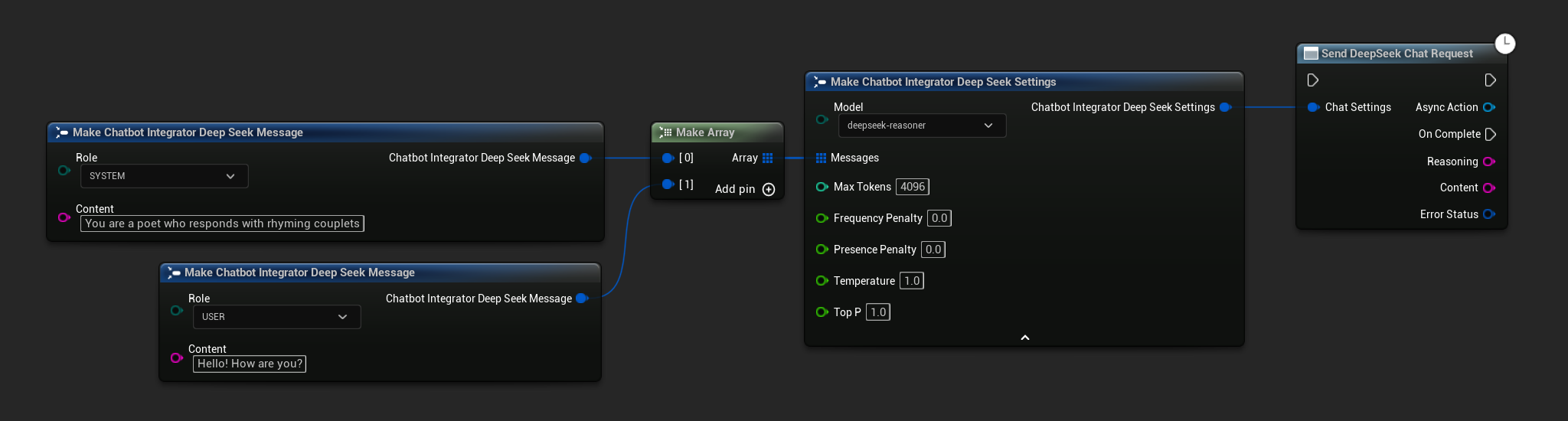

- DeepSeek

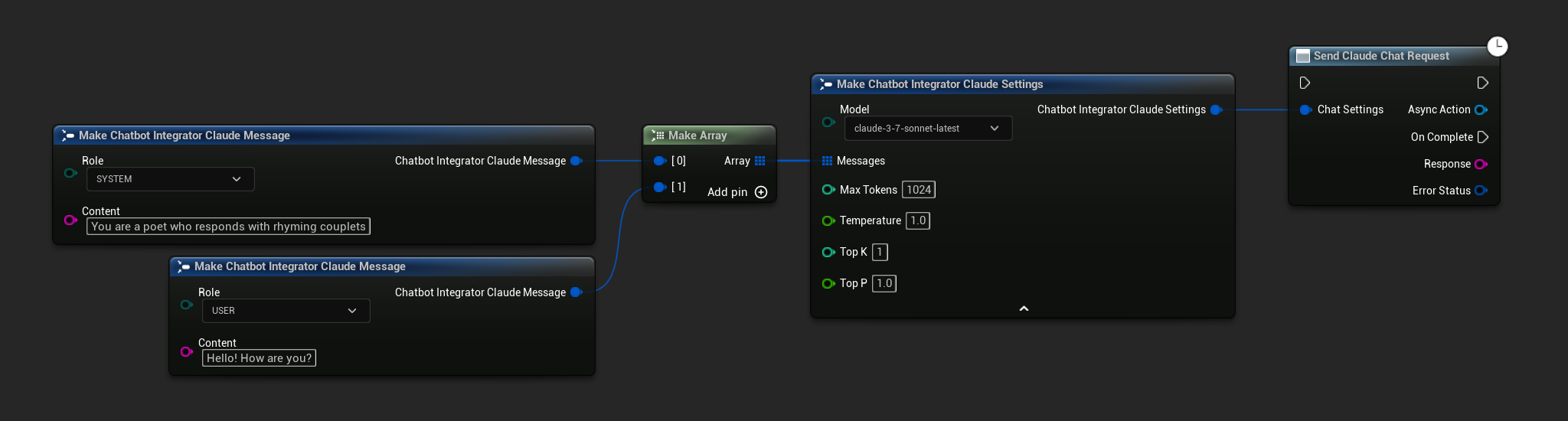

- Claude

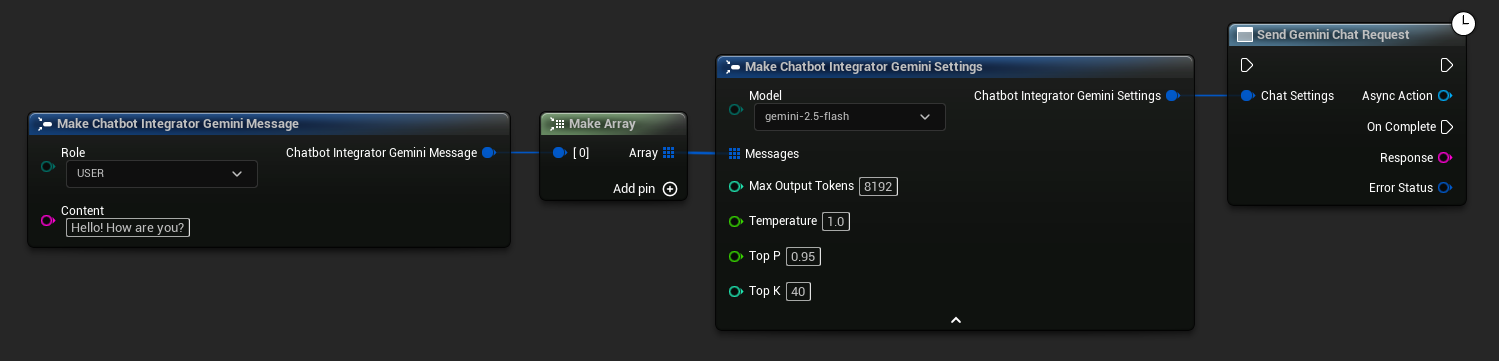

- Gemini

- Grok

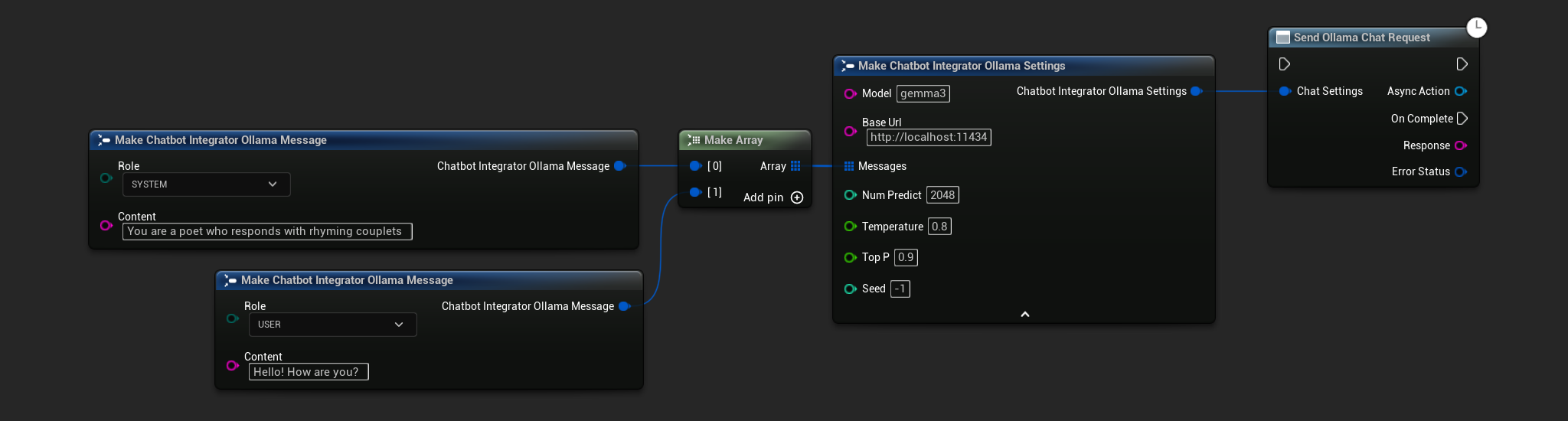

- Ollama

- Blueprint

- C++

// Example of sending a non-streaming chat request to OpenAI

FChatbotIntegrator_OpenAISettings Settings;

Settings.Messages.Add(FChatbotIntegrator_OpenAIMessage{

EChatbotIntegrator_OpenAIRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_OpenAIMessage{

EChatbotIntegrator_OpenAIRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorOpenAI::SendChatRequestNative(

Settings,

FOnOpenAIChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion response: %s, Error: %d: %s"),

*Response, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a non-streaming chat request to DeepSeek

FChatbotIntegrator_DeepSeekSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_DeepSeekMessage{

EChatbotIntegrator_DeepSeekRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_DeepSeekMessage{

EChatbotIntegrator_DeepSeekRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorDeepSeek::SendChatRequestNative(

Settings,

FOnDeepSeekChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Reasoning, const FString& Content, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion reasoning: %s, Content: %s, Error: %d: %s"),

*Reasoning, *Content, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a non-streaming chat request to Claude

FChatbotIntegrator_ClaudeSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_ClaudeMessage{

EChatbotIntegrator_ClaudeRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_ClaudeMessage{

EChatbotIntegrator_ClaudeRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorClaude::SendChatRequestNative(

Settings,

FOnClaudeChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion response: %s, Error: %d: %s"),

*Response, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a non-streaming chat request to Gemini

FChatbotIntegrator_GeminiSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_GeminiMessage{

EChatbotIntegrator_GeminiRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorGemini::SendChatRequestNative(

Settings,

FOnGeminiChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion response: %s, Error: %d: %s"),

*Response, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a non-streaming chat request to Grok

FChatbotIntegrator_GrokSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_GrokMessage{

EChatbotIntegrator_GrokRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_GrokMessage{

EChatbotIntegrator_GrokRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorGrok::SendChatRequestNative(

Settings,

FOnGrokChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Reasoning, const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion reasoning: %s, Response: %s, Error: %d: %s"),

*Reasoning, *Response, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a non-streaming chat request to Ollama

FChatbotIntegrator_OllamaSettings Settings;

Settings.Model = TEXT("gemma3");

Settings.BaseUrl = TEXT("http://localhost:11434");

Settings.Messages.Add(FChatbotIntegrator_OllamaMessage{

EChatbotIntegrator_OllamaRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_OllamaMessage{

EChatbotIntegrator_OllamaRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorOllama::SendChatRequestNative(

Settings,

FOnOllamaChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Chat completion response: %s, Error: %d: %s"),

*Response, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

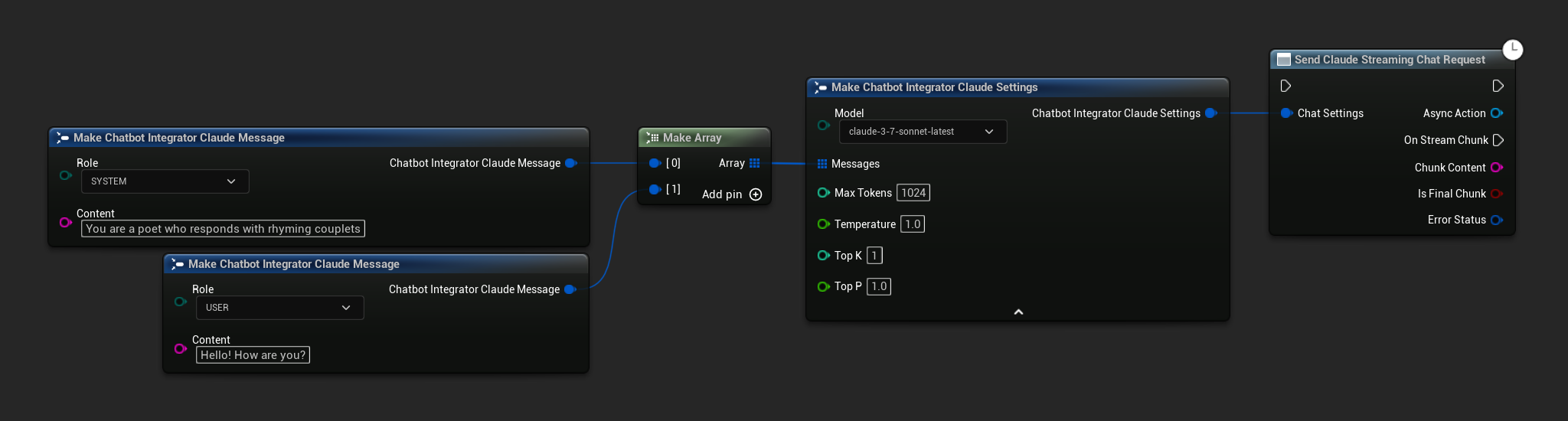

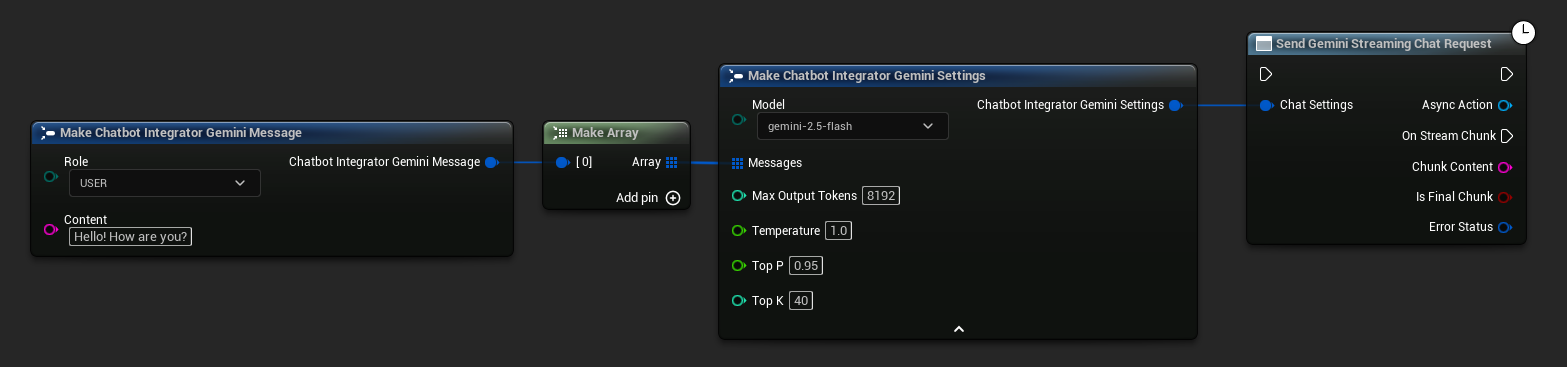

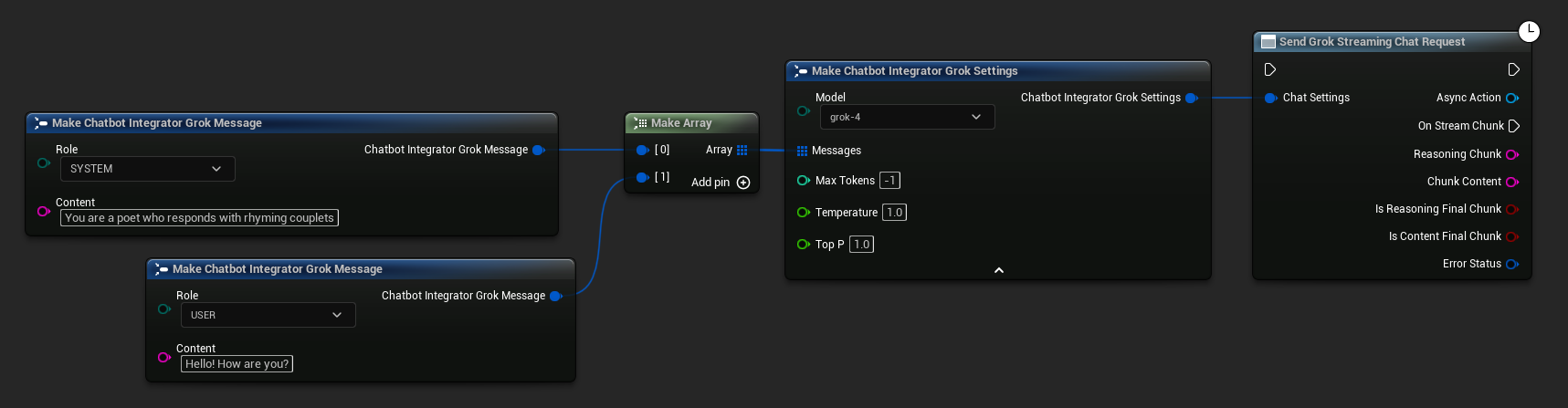

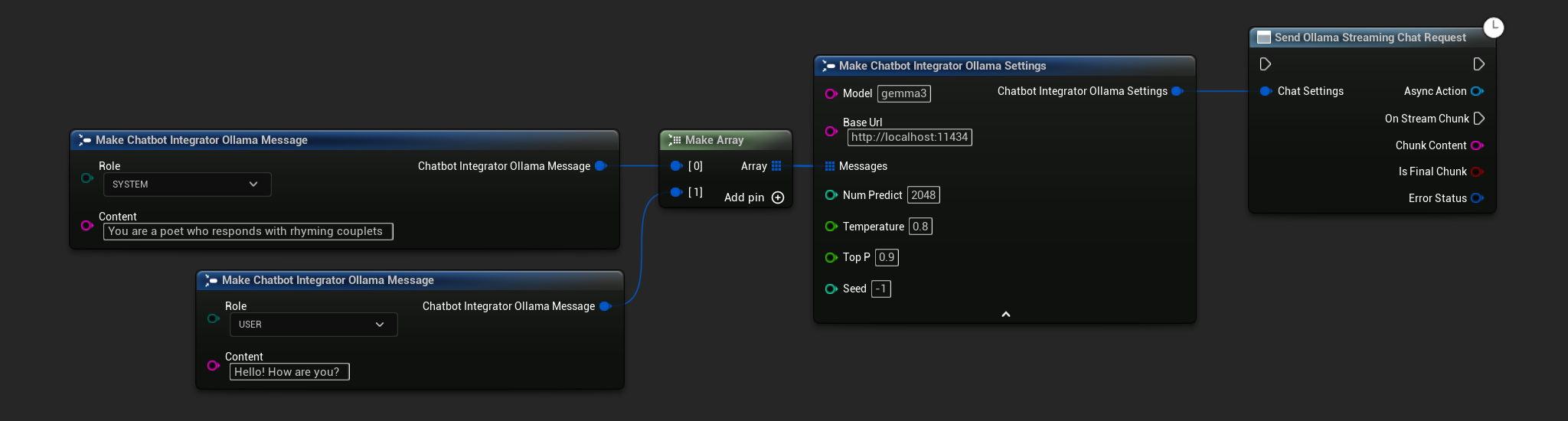

طلبات الدردشة المتدفقة

تلقي أجزاء الرد في الوقت الفعلي لتفاعل أكثر ديناميكية.

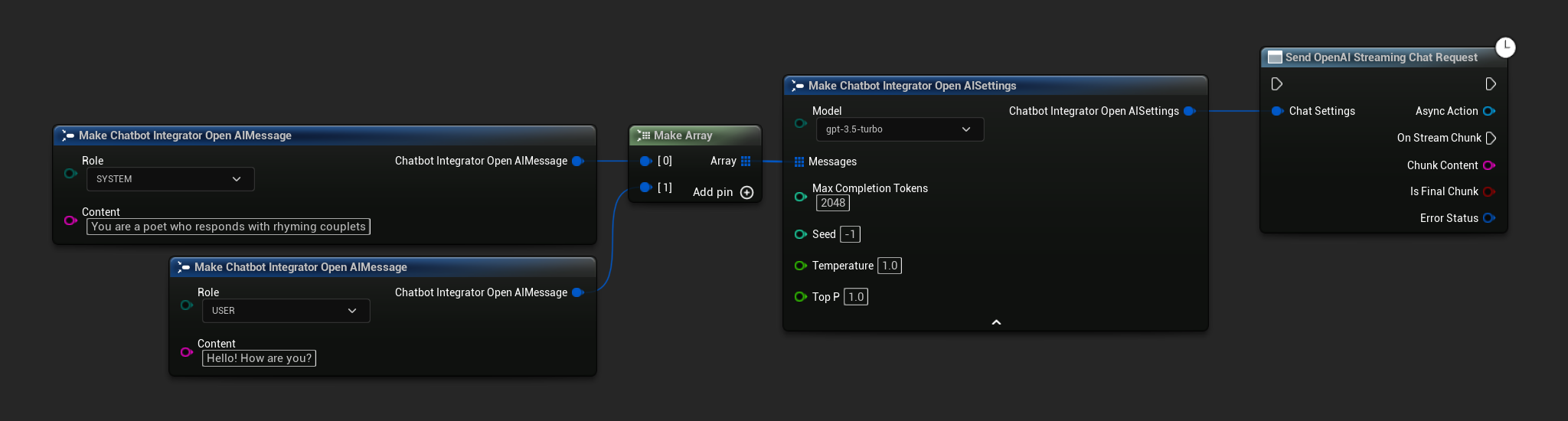

- OpenAI

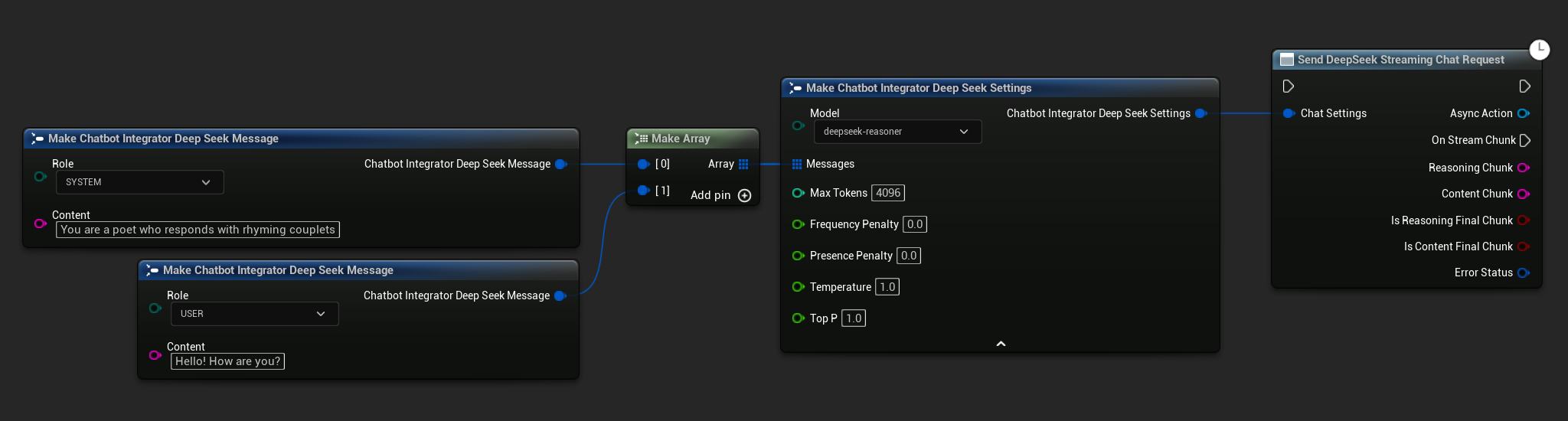

- DeepSeek

- Claude

- Gemini

- Grok

- Ollama

- Blueprint

- C++

// Example of sending a streaming chat request to OpenAI

FChatbotIntegrator_OpenAIStreamingSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_OpenAIMessage{

EChatbotIntegrator_OpenAIRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_OpenAIMessage{

EChatbotIntegrator_OpenAIRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorOpenAIStream::SendStreamingChatRequestNative(

Settings,

FOnOpenAIChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ChunkContent, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming chat chunk: %s, IsFinalChunk: %d, Error: %d: %s"),

*ChunkContent, IsFinalChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a streaming chat request to DeepSeek

FChatbotIntegrator_DeepSeekSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_DeepSeekMessage{

EChatbotIntegrator_DeepSeekRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_DeepSeekMessage{

EChatbotIntegrator_DeepSeekRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorDeepSeekStream::SendStreamingChatRequestNative(

Settings,

FOnDeepSeekChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ReasoningChunk, const FString& ContentChunk,

bool IsReasoningFinalChunk, bool IsContentFinalChunk,

const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming reasoning: %s, content: %s, Error: %d: %s"),

*ReasoningChunk, *ContentChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a streaming chat request to Claude

FChatbotIntegrator_ClaudeSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_ClaudeMessage{

EChatbotIntegrator_ClaudeRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_ClaudeMessage{

EChatbotIntegrator_ClaudeRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorClaudeStream::SendStreamingChatRequestNative(

Settings,

FOnClaudeChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ChunkContent, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming chat chunk: %s, IsFinalChunk: %d, Error: %d: %s"),

*ChunkContent, IsFinalChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a streaming chat request to Gemini

FChatbotIntegrator_GeminiSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_GeminiMessage{

EChatbotIntegrator_GeminiRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorGeminiStream::SendStreamingChatRequestNative(

Settings,

FOnGeminiChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ChunkContent, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming chat chunk: %s, IsFinalChunk: %d, Error: %d: %s"),

*ChunkContent, IsFinalChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a streaming chat request to Grok

FChatbotIntegrator_GrokSettings Settings;

Settings.Messages.Add(FChatbotIntegrator_GrokMessage{

EChatbotIntegrator_GrokRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_GrokMessage{

EChatbotIntegrator_GrokRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorGrokStream::SendStreamingChatRequestNative(

Settings,

FOnGrokChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ReasoningChunk, const FString& ContentChunk,

bool IsReasoningFinalChunk, bool IsContentFinalChunk,

const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming reasoning: %s, content: %s, Error: %d: %s"),

*ReasoningChunk, *ContentChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

- Blueprint

- C++

// Example of sending a streaming chat request to Ollama

FChatbotIntegrator_OllamaSettings Settings;

Settings.Model = TEXT("gemma3");

Settings.BaseUrl = TEXT("http://localhost:11434");

Settings.Messages.Add(FChatbotIntegrator_OllamaMessage{

EChatbotIntegrator_OllamaRole::SYSTEM,

TEXT("You are a helpful assistant.")

});

Settings.Messages.Add(FChatbotIntegrator_OllamaMessage{

EChatbotIntegrator_OllamaRole::USER,

TEXT("What is the capital of France?")

});

UAIChatbotIntegratorOllamaStream::SendStreamingChatRequestNative(

Settings,

FOnOllamaChatCompletionStreamNative::CreateWeakLambda(

this,

[this](const FString& ChunkContent, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

UE_LOG(LogTemp, Log, TEXT("Streaming chat chunk: %s, IsFinalChunk: %d, Error: %d: %s"),

*ChunkContent, IsFinalChunk, ErrorStatus.bIsError, *ErrorStatus.ErrorMessage);

}

)

);

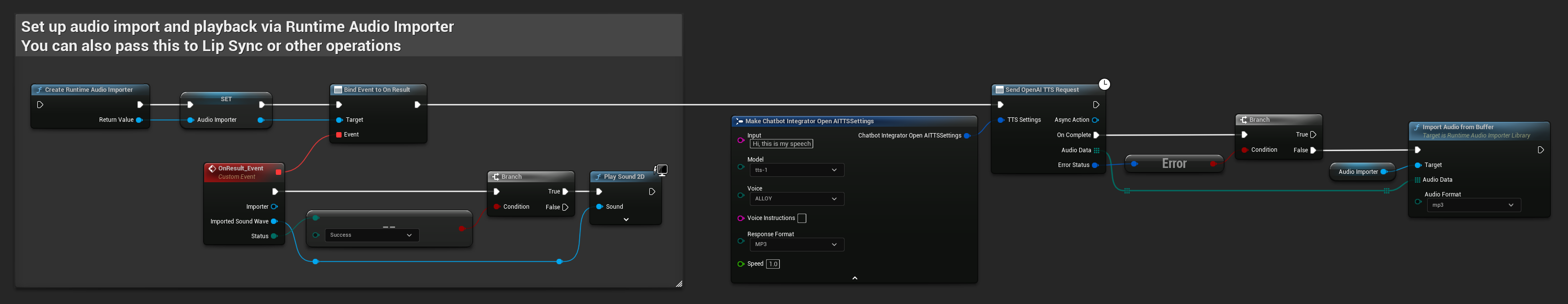

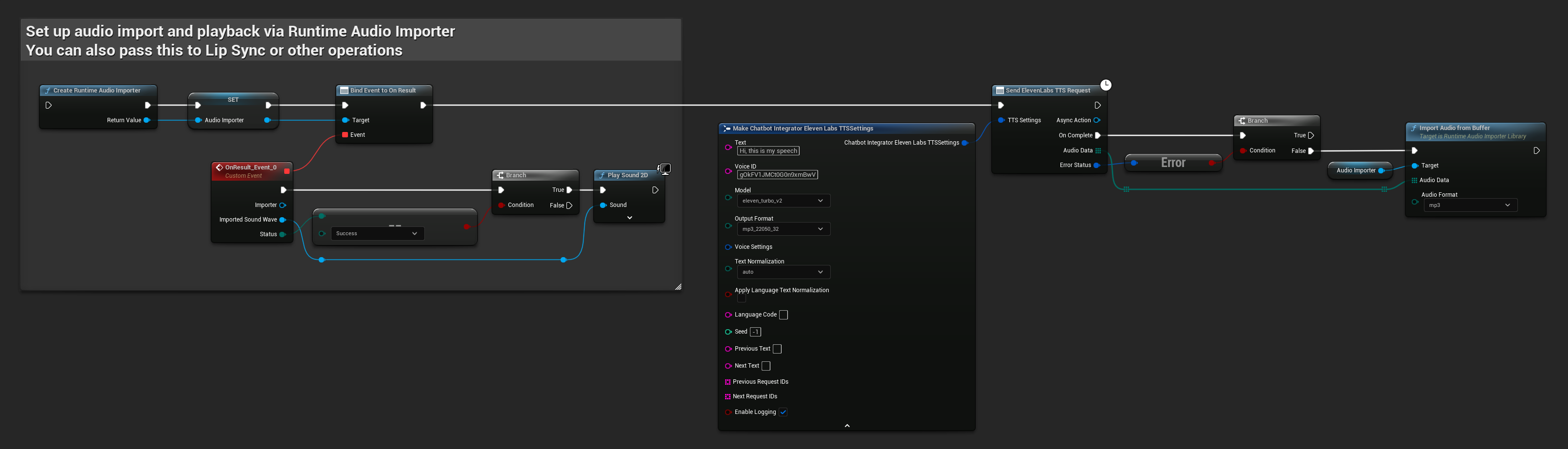

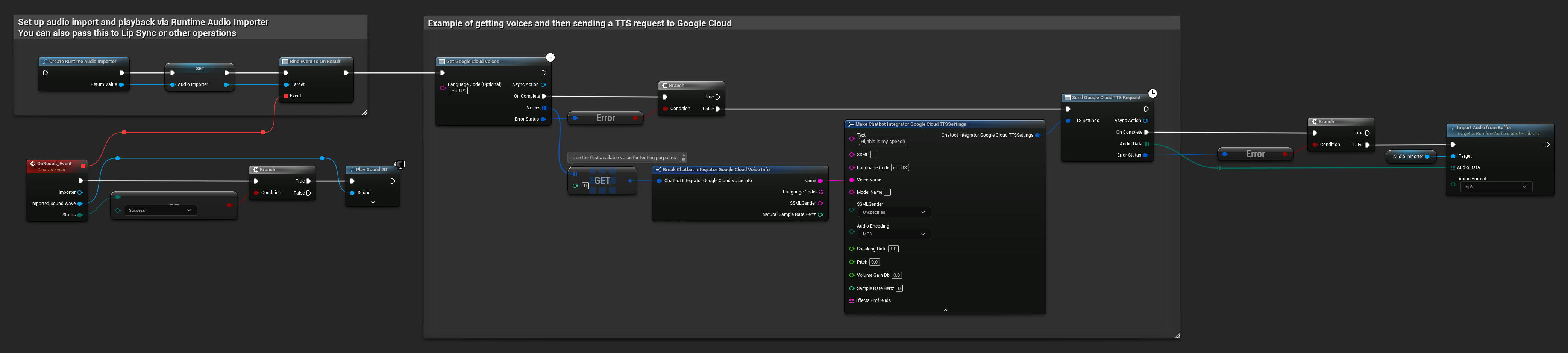

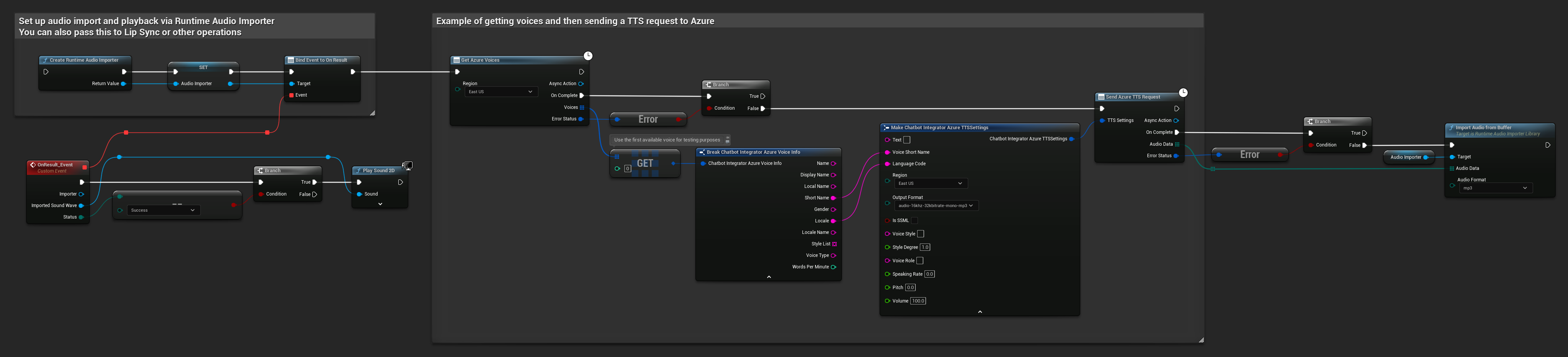

وظيفة تحويل النص إلى كلام (TTS)

تحويل النص إلى صوت كلام عالي الجودة باستخدام مزودي خدمة تحويل النص إلى كلام الرائدين. تُرجع الإضافة بيانات الصوت الخام (TArray<uint8>) والتي يمكنك معالجتها وفقًا لاحتياجات مشروعك.

بينما تُظهر الأمثلة أدناه معالجة الصوت للتشغيل باستخدام إضافة Runtime Audio Importer (انظر توثيق استيراد الصوت)، فإن Runtime AI Chatbot Integrator مصمم ليكون مرنًا. تُرجع الإضافة ببساطة بيانات الصوت الخام، مما يمنحك الحرية الكاملة في كيفية معالجتها لحالة استخدامك المحددة، والتي قد تشمل تشغيل الصوت، الحفظ في ملف، معالجة صوتية إضافية، إرسال إلى أنظمة أخرى، تصورات مخصصة، وأكثر من ذلك.

طلبات تحويل النص إلى كلام غير المتدفقة (Non-Streaming)

تُرجع طلبات تحويل النص إلى كلام غير المتدفقة بيانات الصوت الكاملة في استجابة واحدة بعد معالجة النص بالكامل. هذا النهج مناسب للنصوص الأقصر حيث لا يمثل انتظار الصوت الكامل مشكلة.

- OpenAI TTS

- ElevenLabs TTS

- Google Cloud TTS

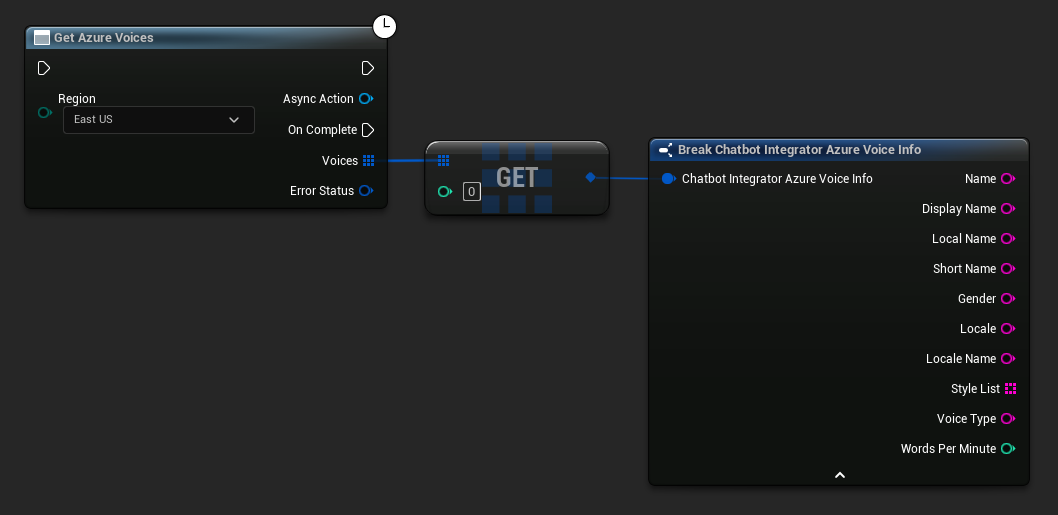

- Azure TTS

- Blueprint

- C++

// Example of sending a TTS request to OpenAI

FChatbotIntegrator_OpenAITTSSettings TTSSettings;

TTSSettings.Input = TEXT("Hello, this is a test of text-to-speech functionality.");

TTSSettings.Voice = EChatbotIntegrator_OpenAITTSVoice::NOVA;

TTSSettings.Speed = 1.0f;

TTSSettings.ResponseFormat = EChatbotIntegrator_OpenAITTSFormat::MP3;

UAIChatbotIntegratorOpenAITTS::SendTTSRequestNative(

TTSSettings,

FOnOpenAITTSResponseNative::CreateWeakLambda(

this,

[this](const TArray<uint8>& AudioData, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

// Process the audio data using Runtime Audio Importer plugin

UE_LOG(LogTemp, Log, TEXT("Received TTS audio data: %d bytes"), AudioData.Num());

URuntimeAudioImporterLibrary* RuntimeAudioImporter = URuntimeAudioImporterLibrary::CreateRuntimeAudioImporter();

RuntimeAudioImporter->AddToRoot();

RuntimeAudioImporter->OnResultNative.AddWeakLambda(this, [this](URuntimeAudioImporterLibrary* Importer, UImportedSoundWave* ImportedSoundWave, ERuntimeImportStatus Status)

{

if (Status == ERuntimeImportStatus::SuccessfulImport)

{

UE_LOG(LogTemp, Warning, TEXT("Successfully imported audio"));

// Handle ImportedSoundWave playback

}

Importer->RemoveFromRoot();

});

RuntimeAudioImporter->ImportAudioFromBuffer(AudioData, ERuntimeAudioFormat::Mp3);

}

}

)

);

- Blueprint

- C++

// Example of sending a TTS request to ElevenLabs

FChatbotIntegrator_ElevenLabsTTSSettings TTSSettings;

TTSSettings.Text = TEXT("Hello, this is a test of text-to-speech functionality.");

TTSSettings.VoiceID = TEXT("your-voice-id");

TTSSettings.Model = EChatbotIntegrator_ElevenLabsTTSModel::ELEVEN_TURBO_V2;

TTSSettings.OutputFormat = EChatbotIntegrator_ElevenLabsTTSFormat::MP3_44100_128;

UAIChatbotIntegratorElevenLabsTTS::SendTTSRequestNative(

TTSSettings,

FOnElevenLabsTTSResponseNative::CreateWeakLambda(

this,

[this](const TArray<uint8>& AudioData, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio data: %d bytes"), AudioData.Num());

// Process audio data as needed

}

}

)

);

- Blueprint

- C++

// Example of getting voices and then sending a TTS request to Google Cloud

// First, get available voices

UAIChatbotIntegratorGoogleCloudVoices::GetVoicesNative(

TEXT("en-US"), // Optional language filter

FOnGoogleCloudVoicesResponseNative::CreateWeakLambda(

this,

[this](const TArray<FChatbotIntegrator_GoogleCloudVoiceInfo>& Voices, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError && Voices.Num() > 0)

{

// Use the first available voice

const FChatbotIntegrator_GoogleCloudVoiceInfo& FirstVoice = Voices[0];

UE_LOG(LogTemp, Log, TEXT("Using voice: %s"), *FirstVoice.Name);

// Now send TTS request with the selected voice

FChatbotIntegrator_GoogleCloudTTSSettings TTSSettings;

TTSSettings.Text = TEXT("Hello, this is a test of text-to-speech functionality.");

TTSSettings.LanguageCode = FirstVoice.LanguageCodes.Num() > 0 ? FirstVoice.LanguageCodes[0] : TEXT("en-US");

TTSSettings.VoiceName = FirstVoice.Name;

TTSSettings.AudioEncoding = EChatbotIntegrator_GoogleCloudAudioEncoding::MP3;

UAIChatbotIntegratorGoogleCloudTTS::SendTTSRequestNative(

TTSSettings,

FOnGoogleCloudTTSResponseNative::CreateWeakLambda(

this,

[this](const TArray<uint8>& AudioData, const FChatbotIntegratorErrorStatus& TTSErrorStatus)

{

if (!TTSErrorStatus.bIsError)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio data: %d bytes"), AudioData.Num());

// Process the audio data using Runtime Audio Importer plugin

URuntimeAudioImporterLibrary* RuntimeAudioImporter = URuntimeAudioImporterLibrary::CreateRuntimeAudioImporter();

RuntimeAudioImporter->AddToRoot();

RuntimeAudioImporter->OnResultNative.AddWeakLambda(this, [this](URuntimeAudioImporterLibrary* Importer, UImportedSoundWave* ImportedSoundWave, ERuntimeImportStatus Status)

{

if (Status == ERuntimeImportStatus::SuccessfulImport)

{

UE_LOG(LogTemp, Warning, TEXT("Successfully imported audio"));

// Handle ImportedSoundWave playback

}

Importer->RemoveFromRoot();

});

RuntimeAudioImporter->ImportAudioFromBuffer(AudioData, ERuntimeAudioFormat::Mp3);

}

else

{

UE_LOG(LogTemp, Error, TEXT("TTS request failed: %s"), *TTSErrorStatus.ErrorMessage);

}

}

)

);

}

else

{

UE_LOG(LogTemp, Error, TEXT("Failed to get voices: %s"), *ErrorStatus.ErrorMessage);

}

}

)

);

- Blueprint

- C++

// Example of getting voices and then sending a TTS request to Azure

// First, get available voices

UAIChatbotIntegratorAzureGetVoices::GetVoicesNative(

EChatbotIntegrator_AzureRegion::EAST_US,

FOnAzureVoiceListResponseNative::CreateWeakLambda(

this,

[this](const TArray<FChatbotIntegrator_AzureVoiceInfo>& Voices, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError && Voices.Num() > 0)

{

// Use the first available voice

const FChatbotIntegrator_AzureVoiceInfo& FirstVoice = Voices[0];

UE_LOG(LogTemp, Log, TEXT("Using voice: %s (%s)"), *FirstVoice.DisplayName, *FirstVoice.ShortName);

// Now send TTS request with the selected voice

FChatbotIntegrator_AzureTTSSettings TTSSettings;

TTSSettings.Text = TEXT("Hello, this is a test of text-to-speech functionality.");

TTSSettings.VoiceShortName = FirstVoice.ShortName;

TTSSettings.LanguageCode = FirstVoice.Locale;

TTSSettings.Region = EChatbotIntegrator_AzureRegion::EAST_US;

TTSSettings.OutputFormat = EChatbotIntegrator_AzureTTSFormat::AUDIO_16KHZ_32KBITRATE_MONO_MP3;

UAIChatbotIntegratorAzureTTS::SendTTSRequestNative(

TTSSettings,

FOnAzureTTSResponseNative::CreateWeakLambda(

this,

[this](const TArray<uint8>& AudioData, const FChatbotIntegratorErrorStatus& TTSErrorStatus)

{

if (!TTSErrorStatus.bIsError)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio data: %d bytes"), AudioData.Num());

// Process the audio data using Runtime Audio Importer plugin

URuntimeAudioImporterLibrary* RuntimeAudioImporter = URuntimeAudioImporterLibrary::CreateRuntimeAudioImporter();

RuntimeAudioImporter->AddToRoot();

RuntimeAudioImporter->OnResultNative.AddWeakLambda(this, [this](URuntimeAudioImporterLibrary* Importer, UImportedSoundWave* ImportedSoundWave, ERuntimeImportStatus Status)

{

if (Status == ERuntimeImportStatus::SuccessfulImport)

{

UE_LOG(LogTemp, Warning, TEXT("Successfully imported audio"));

// Handle ImportedSoundWave playback

}

Importer->RemoveFromRoot();

});

RuntimeAudioImporter->ImportAudioFromBuffer(AudioData, ERuntimeAudioFormat::Mp3);

}

else

{

UE_LOG(LogTemp, Error, TEXT("TTS request failed: %s"), *TTSErrorStatus.ErrorMessage);

}

}

)

);

}

else

{

UE_LOG(LogTemp, Error, TEXT("Failed to get voices: %s"), *ErrorStatus.ErrorMessage);

}

}

)

);

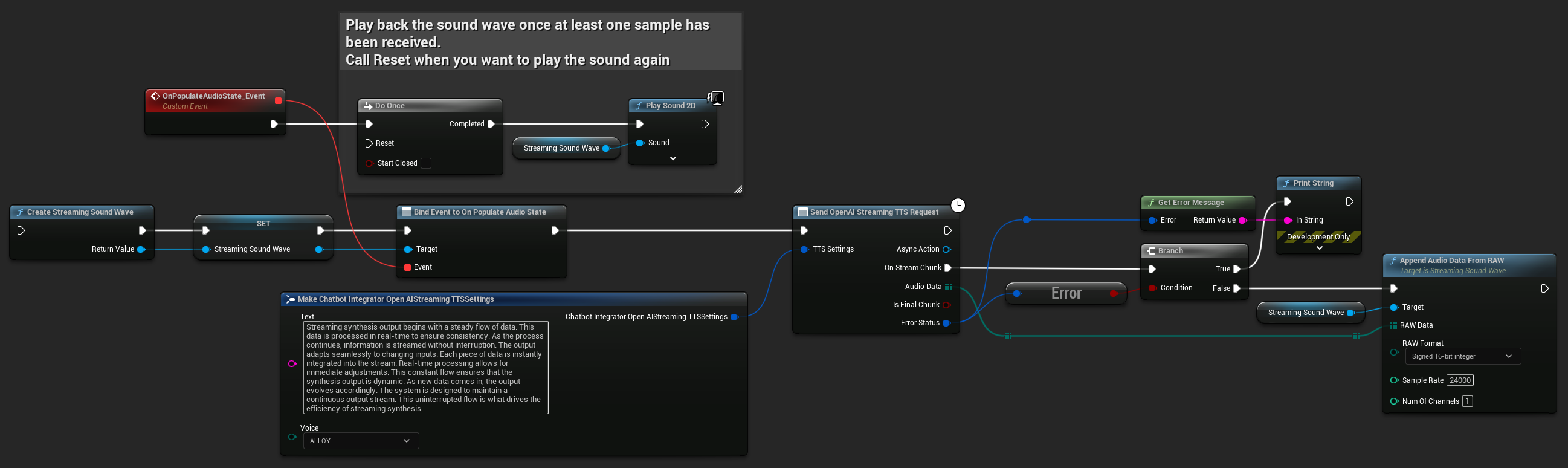

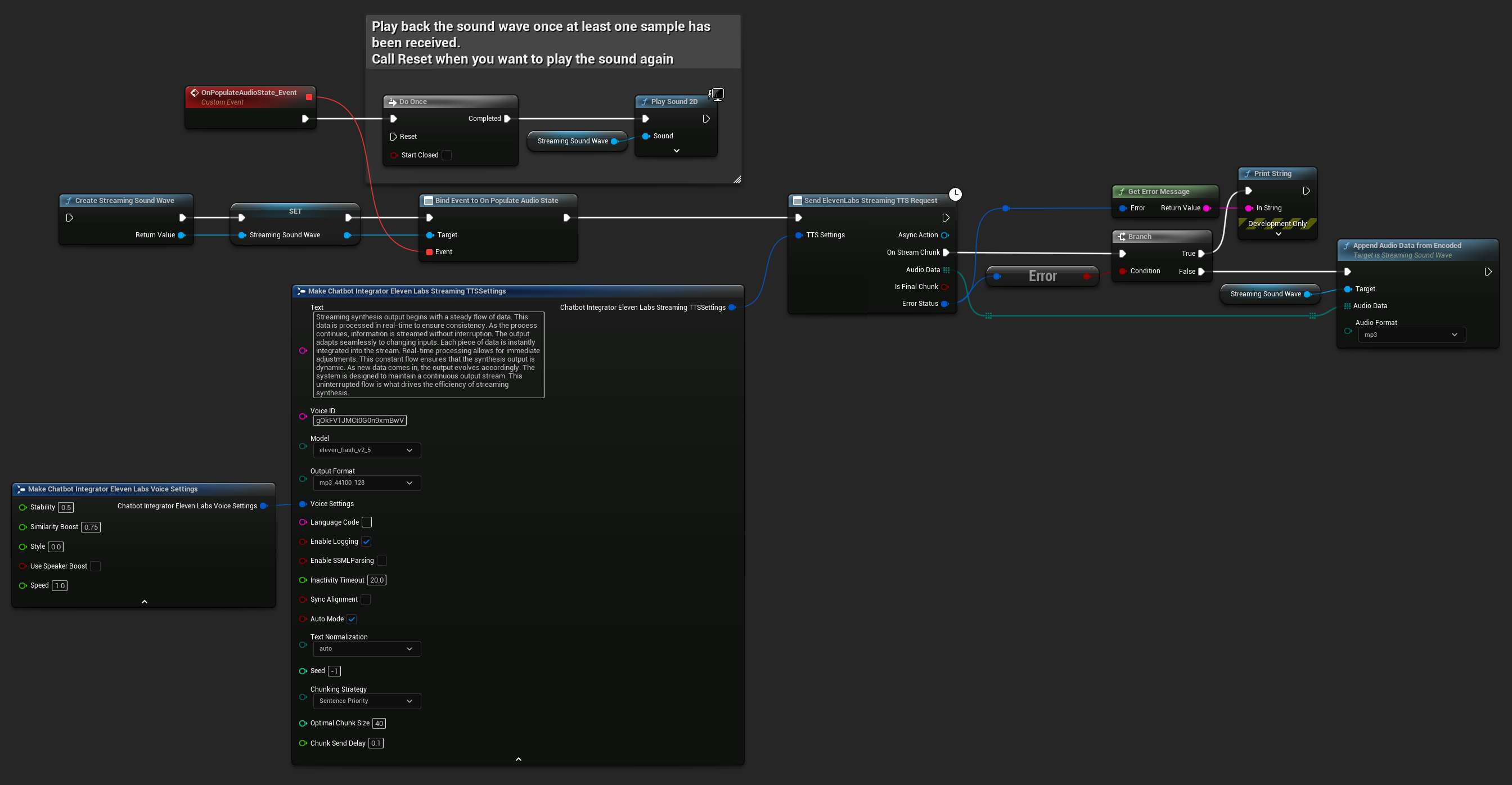

طلبات توليف الكلام النصي بالبث المباشر (Streaming TTS)

يقوم توليف الكلام النصي بالبث المباشر (Streaming TTS) بتسليم مقاطع الصوت فور توليدها، مما يسمح لك بمعالجة البيانات بشكل تدريجي بدلاً من الانتظار حتى يتم توليف الصوت بالكامل. هذا يقلل بشكل كبير من زمن الانتظار الملحوظ للنصوص الطويلة ويمكن التطبيقات التي تعمل في الوقت الفعلي. كما يدعم توليف الكلام النصي بالبث المباشر (Streaming TTS) من ElevenLabs وظائف البث المجزء المتقدمة لسيناريوهات توليد النصوص الديناميكية.

- OpenAI Streaming TTS

- ElevenLabs Streaming TTS

- Blueprint

- C++

UPROPERTY()

UStreamingSoundWave* StreamingSoundWave;

UPROPERTY()

bool bIsPlaying = false;

UFUNCTION(BlueprintCallable)

void StartStreamingTTS()

{

// Create a sound wave for streaming if not already created

if (!StreamingSoundWave)

{

StreamingSoundWave = UStreamingSoundWave::CreateStreamingSoundWave();

StreamingSoundWave->OnPopulateAudioStateNative.AddWeakLambda(this, [this]()

{

if (!bIsPlaying)

{

bIsPlaying = true;

UGameplayStatics::PlaySound2D(GetWorld(), StreamingSoundWave);

}

});

}

FChatbotIntegrator_OpenAIStreamingTTSSettings TTSSettings;

TTSSettings.Text = TEXT("Streaming synthesis output begins with a steady flow of data. This data is processed in real-time to ensure consistency.");

TTSSettings.Voice = EChatbotIntegrator_OpenAIStreamingTTSVoice::ALLOY;

UAIChatbotIntegratorOpenAIStreamTTS::SendStreamingTTSRequestNative(TTSSettings, FOnOpenAIStreamingTTSNative::CreateWeakLambda(this, [this](const TArray<uint8>& AudioData, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio chunk: %d bytes"), AudioData.Num());

StreamingSoundWave->AppendAudioDataFromRAW(AudioData, ERuntimeRAWAudioFormat::Int16, 24000, 1);

}

}));

}

يدعم ElevenLabs Streaming TTS كلاً من وضع البث القياسي ووضع البث المجزأ المتقدم، مما يوفر مرونة لاستخدامات مختلفة.

وضع البث القياسي

يعالج وضع البث القياسي النص المحدد مسبقاً ويوفر مقاطع الصوت أثناء توليدها.

- Blueprint

- C++

UPROPERTY()

UStreamingSoundWave* StreamingSoundWave;

UPROPERTY()

bool bIsPlaying = false;

UFUNCTION(BlueprintCallable)

void StartStreamingTTS()

{

// Create a sound wave for streaming if not already created

if (!StreamingSoundWave)

{

StreamingSoundWave = UStreamingSoundWave::CreateStreamingSoundWave();

StreamingSoundWave->OnPopulateAudioStateNative.AddWeakLambda(this, [this]()

{

if (!bIsPlaying)

{

bIsPlaying = true;

UGameplayStatics::PlaySound2D(GetWorld(), StreamingSoundWave);

}

});

}

FChatbotIntegrator_ElevenLabsStreamingTTSSettings TTSSettings;

TTSSettings.Text = TEXT("Streaming synthesis output begins with a steady flow of data. This data is processed in real-time to ensure consistency.");

TTSSettings.Model = EChatbotIntegrator_ElevenLabsTTSModel::ELEVEN_TURBO_V2_5;

TTSSettings.OutputFormat = EChatbotIntegrator_ElevenLabsTTSFormat::MP3_22050_32;

TTSSettings.VoiceID = TEXT("YOUR_VOICE_ID");

TTSSettings.bEnableChunkedStreaming = false; // Standard streaming mode

UAIChatbotIntegratorElevenLabsStreamTTS::SendStreamingTTSRequestNative(GetWorld(), TTSSettings, FOnElevenLabsStreamingTTSNative::CreateWeakLambda(this, [this](const TArray<uint8>& AudioData, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio chunk: %d bytes"), AudioData.Num());

StreamingSoundWave->AppendAudioDataFromEncoded(AudioData, ERuntimeAudioFormat::Mp3);

}

}));

}

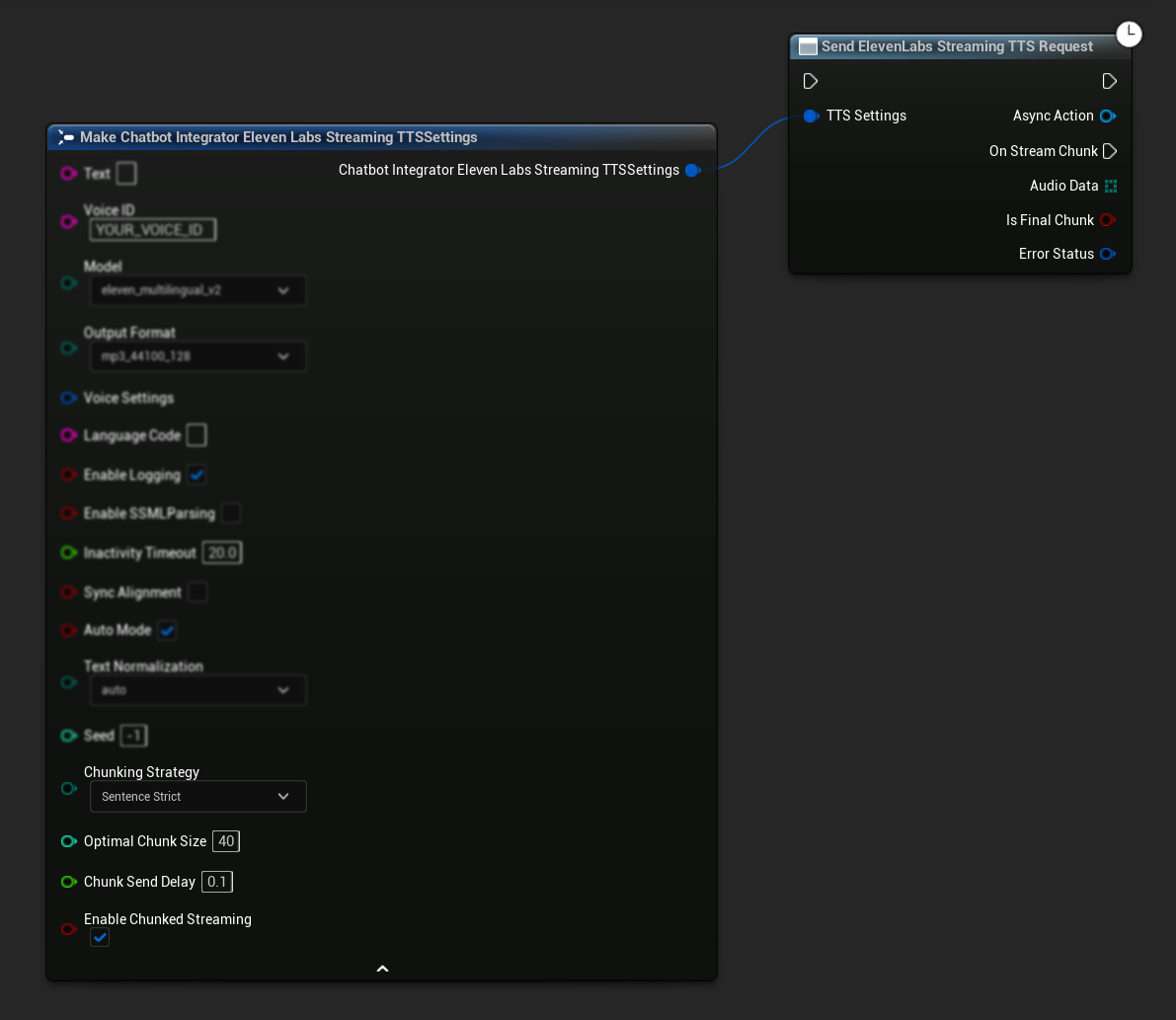

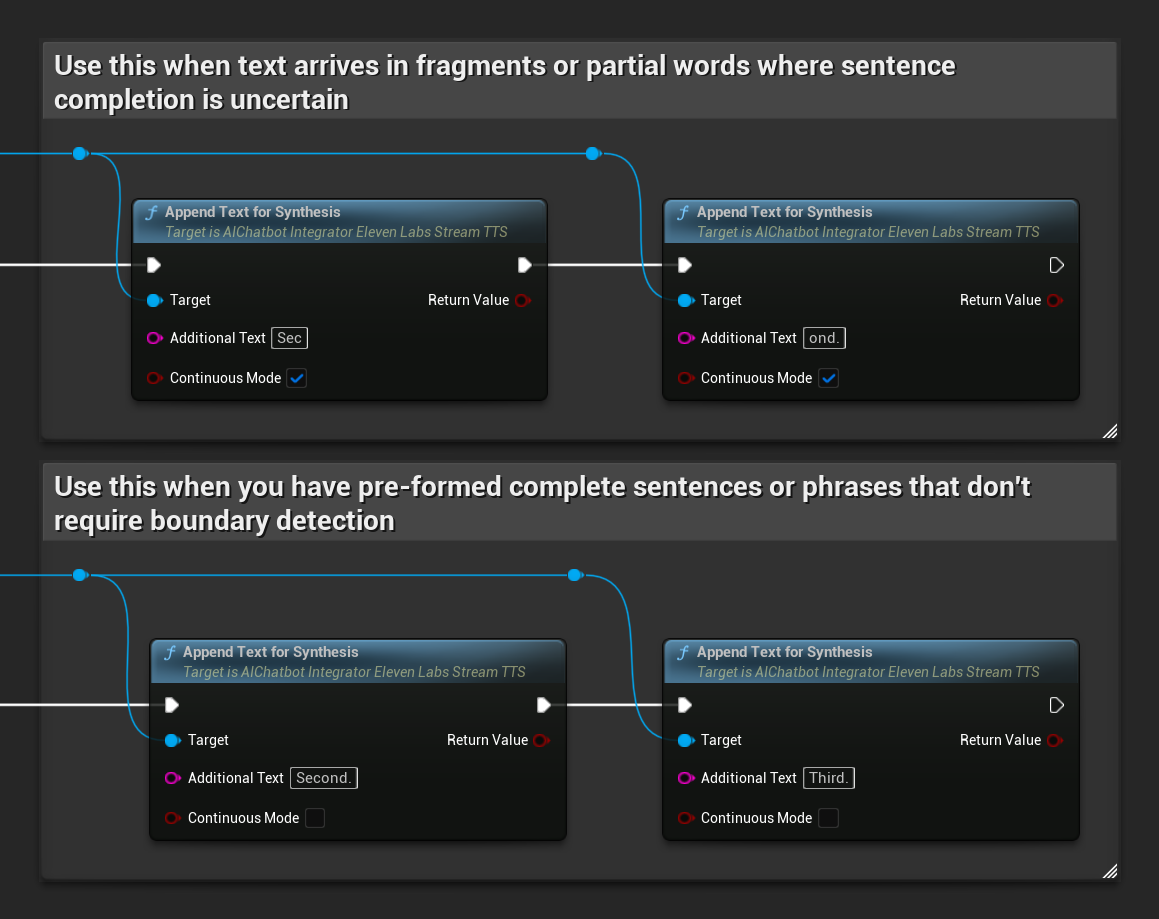

وضع البث المجزأ

يسمح لك وضع البث المجزأ بإلحاق النص ديناميكيًا أثناء التوليف، وهو مثالي للتطبيقات في الوقت الفعلي حيث يتم إنشاء النص تدريجيًا (مثل استجابات الدردشة بالذكاء الاصطناعي التي يتم توليفها أثناء إنشائها). لتمكين هذا الوضع، عيّن bEnableChunkedStreaming إلى true في إعدادات TTS الخاصة بك.

- Blueprint

- C++

الإعداد الأولي: قم بإعداد البث المجزأ عن طريق تمكين وضع البث المجزأ في إعدادات TTS الخاصة بك وإنشاء الطلب الأولي. تُرجع دالة الطلب كائن إجراء غير متزامن يوفر طرقًا لإدارة جلسة البث المجزأ:

إلحاق نص للتوليف:

استخدم هذه الدالة على كائن الإجراء غير المتزامن المُعاد لإضافة نص ديناميكيًا أثناء جلسة بث مجزأة نشطة. يتحكم المعامل bContinuousMode في كيفية معالجة النص:

- عندما يكون

bContinuousModeهوtrue: يتم تخزين النص مؤقتًا داخليًا حتى يتم اكتشاف حدود الجمل الكاملة (النقاط، علامات التعجب، علامات الاستفهام). يستخرج النظام تلقائيًا الجمل الكاملة للتوليف مع الاحتفاظ بالنص غير المكتمل في المخزن المؤقت. استخدم هذا عندما يصل النص في أجزاء أو كلمات جزئية حيث يكون اكتمال الجمل غير مؤكد. - عندما يكون

bContinuousModeهوfalse: تتم معالجة النص على الفور دون تخزين مؤقت أو تحليل لحدود الجمل. كل استدعاء يؤدي إلى معالجة فورية للجزء والتوليف. استخدم هذا عندما يكون لديك جمل أو عبارات مكتملة مسبقًا لا تتطلب اكتشاف الحدود.

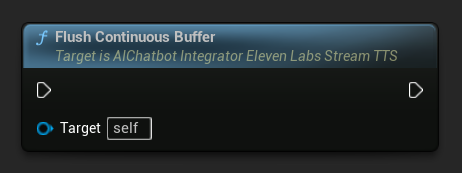

تفريغ المخزن المؤقت المستمر: يجبر معالجة أي نص مستمر مخزن مؤقتًا على كائن الإجراء غير المتزامن، حتى لو لم يتم اكتشاف حد للجملة. مفيد عندما تعلم أنه لن يأتي المزيد من النص لبعض الوقت:

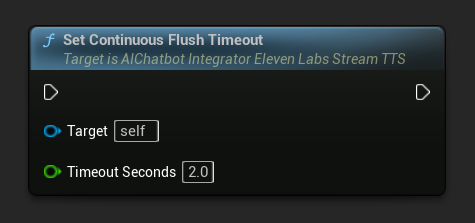

تعيين مهلة تفريغ المستمر: يضبط التفريغ التلقائي للمخزن المؤقت المستمر على كائن الإجراء غير المتزامن عندما لا يصل نص جديد خلال المهلة المحددة:

عيّن على 0 لتعطيل التفريغ التلقائي. القيم الموصى بها هي 1-3 ثوانٍ للتطبيقات في الوقت الفعلي.

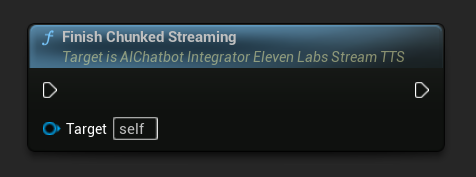

إنهاء البث المجزأ: يغلق جلسة البث المجزأ على كائن الإجراء غير المتزامن ويحدد التوليف الحالي على أنه نهائي. استدعِ هذا دائمًا عندما تنتهي من إضافة النص:

UPROPERTY()

UAIChatbotIntegratorElevenLabsStreamTTS* ChunkedTTSRequest;

UPROPERTY()

UStreamingSoundWave* StreamingSoundWave;

UPROPERTY()

bool bIsPlaying = false;

UFUNCTION(BlueprintCallable)

void StartChunkedStreamingTTS()

{

// Create a sound wave for streaming if not already created

if (!StreamingSoundWave)

{

StreamingSoundWave = UStreamingSoundWave::CreateStreamingSoundWave();

StreamingSoundWave->OnPopulateAudioStateNative.AddWeakLambda(this, [this]()

{

if (!bIsPlaying)

{

bIsPlaying = true;

UGameplayStatics::PlaySound2D(GetWorld(), StreamingSoundWave);

}

});

}

FChatbotIntegrator_ElevenLabsStreamingTTSSettings TTSSettings;

TTSSettings.Text = TEXT(""); // Start with empty text in chunked mode

TTSSettings.Model = EChatbotIntegrator_ElevenLabsTTSModel::ELEVEN_TURBO_V2_5;

TTSSettings.OutputFormat = EChatbotIntegrator_ElevenLabsTTSFormat::MP3_22050_32;

TTSSettings.VoiceID = TEXT("YOUR_VOICE_ID");

TTSSettings.bEnableChunkedStreaming = true; // Enable chunked streaming mode

// Store the returned async action object to call chunked streaming functions on it

ChunkedTTSRequest = UAIChatbotIntegratorElevenLabsStreamTTS::SendStreamingTTSRequestNative(

GetWorld(),

TTSSettings,

FOnElevenLabsStreamingTTSNative::CreateWeakLambda(this, [this](const TArray<uint8>& AudioData, bool IsFinalChunk, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError && AudioData.Num() > 0)

{

UE_LOG(LogTemp, Log, TEXT("Received TTS audio chunk: %d bytes"), AudioData.Num());

StreamingSoundWave->AppendAudioDataFromEncoded(AudioData, ERuntimeAudioFormat::Mp3);

}

if (IsFinalChunk)

{

UE_LOG(LogTemp, Log, TEXT("Chunked streaming session completed"));

ChunkedTTSRequest = nullptr;

}

})

);

// Now you can append text dynamically as it becomes available

// For example, from an AI chat response stream:

AppendTextToTTS(TEXT("Hello, this is the first part of the message. "));

}

UFUNCTION(BlueprintCallable)

void AppendTextToTTS(const FString& AdditionalText)

{

// Call AppendTextForSynthesis on the returned async action object

if (ChunkedTTSRequest)

{

// Use continuous mode (true) when text is being generated word-by-word

// and you want to wait for complete sentences before processing

bool bContinuousMode = true;

bool bSuccess = ChunkedTTSRequest->AppendTextForSynthesis(AdditionalText, bContinuousMode);

if (bSuccess)

{

UE_LOG(LogTemp, Log, TEXT("Successfully appended text: %s"), *AdditionalText);

}

}

}

// Configure continuous text buffering with custom timeout

UFUNCTION(BlueprintCallable)

void SetupAdvancedChunkedStreaming()

{

// Call SetContinuousFlushTimeout on the async action object

if (ChunkedTTSRequest)

{

// Set automatic flush timeout to 1.5 seconds

// Text will be automatically processed if no new text arrives within this timeframe

ChunkedTTSRequest->SetContinuousFlushTimeout(1.5f);

}

}

// Example of handling real-time AI chat response synthesis

UFUNCTION(BlueprintCallable)

void HandleAIChatResponseForTTS(const FString& ChatChunk, bool IsStreamFinalChunk)

{

if (ChunkedTTSRequest)

{

if (!IsStreamFinalChunk)

{

// Append each chat chunk in continuous mode

// The system will automatically extract complete sentences for synthesis

ChunkedTTSRequest->AppendTextForSynthesis(ChatChunk, true);

}

else

{

// Add the final chunk

ChunkedTTSRequest->AppendTextForSynthesis(ChatChunk, true);

// Flush any remaining buffered text and finish the session

ChunkedTTSRequest->FlushContinuousBuffer();

ChunkedTTSRequest->FinishChunkedStreaming();

}

}

}

// Example of immediate chunk processing (bypassing sentence boundary detection)

UFUNCTION(BlueprintCallable)

void AppendImmediateText(const FString& Text)

{

// Call AppendTextForSynthesis with continuous mode = false on the async action object

if (ChunkedTTSRequest)

{

// Use continuous mode = false for immediate processing

// Useful when you have complete sentences or phrases ready

ChunkedTTSRequest->AppendTextForSynthesis(Text, false);

}

}

UFUNCTION(BlueprintCallable)

void FinishChunkedTTS()

{

// Call FlushContinuousBuffer and FinishChunkedStreaming on the async action object

if (ChunkedTTSRequest)

{

// Flush any remaining buffered text

ChunkedTTSRequest->FlushContinuousBuffer();

// Mark the session as finished

ChunkedTTSRequest->FinishChunkedStreaming();

}

}

الميزات الرئيسية للبث المجزأ من ElevenLabs:

- الوضع المستمر: عندما يكون

bContinuousModeعلىtrue، يتم تخزين النص مؤقتًا حتى يتم اكتشاف حدود الجمل الكاملة، ثم تتم معالجته للتركيب - الوضع الفوري: عندما يكون

bContinuousModeعلىfalse، تتم معالجة النص فورًا كأجزاء منفصلة دون تخزين مؤقت - التفريغ التلقائي: مهلة قابلة للتكوين تعالج النص المخزن مؤقتًا عندما لا يصل مدخل جديد ضمن الإطار الزمني المحدد

- كشف حدود الجمل: يكتشف نهايات الجمل (., !, ?) ويستخرج الجمل الكاملة من النص المخزن مؤقتًا

- التكامل في الوقت الحقيقي: يدعم إدخال النص التدريجي حيث يصل المحتوى على شكل أجزاء مع مرور الوقت

- تجزئة النص المرنة: تتوفر استراتيجيات متعددة (الأولوية للجمل، الجمل الصارمة، القائم على الحجم) لتحسين معالجة التركيب

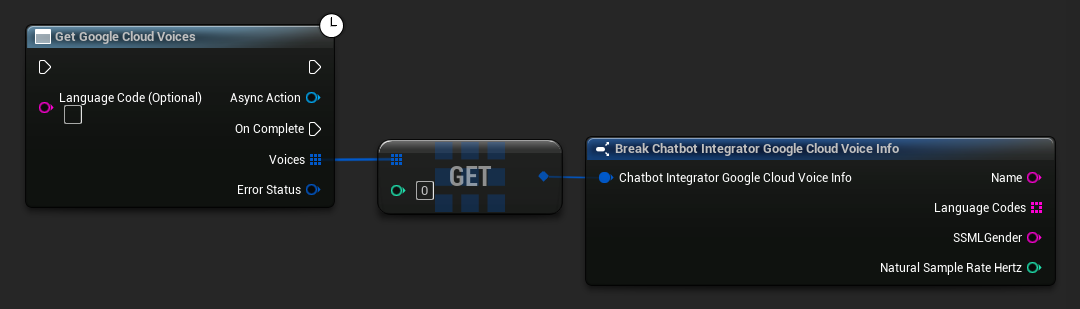

الحصول على الأصوات المتاحة

تقدم بعض مزودي خدمة تحويل النص إلى كلام واجهات برمجة تطبيقات لعرض الأصوات لاكتشاف الأصوات المتاحة برمجيًا.

- Google Cloud Voices

- أصوات Azure

- Blueprint

- C++

// Example of getting available voices from Google Cloud

UAIChatbotIntegratorGoogleCloudVoices::GetVoicesNative(

TEXT("en-US"), // Optional language filter

FOnGoogleCloudVoicesResponseNative::CreateWeakLambda(

this,

[this](const TArray<FChatbotIntegrator_GoogleCloudVoiceInfo>& Voices, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

for (const auto& Voice : Voices)

{

UE_LOG(LogTemp, Log, TEXT("Voice: %s (%s)"), *Voice.Name, *Voice.SSMLGender);

}

}

}

)

);

- Blueprint

- C++

// Example of getting available voices from Azure

UAIChatbotIntegratorAzureGetVoices::GetVoicesNative(

EChatbotIntegrator_AzureRegion::EAST_US,

FOnAzureVoiceListResponseNative::CreateWeakLambda(

this,

[this](const TArray<FChatbotIntegrator_AzureVoiceInfo>& Voices, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

for (const auto& Voice : Voices)

{

UE_LOG(LogTemp, Log, TEXT("Voice: %s (%s)"), *Voice.DisplayName, *Voice.Gender);

}

}

}

)

);

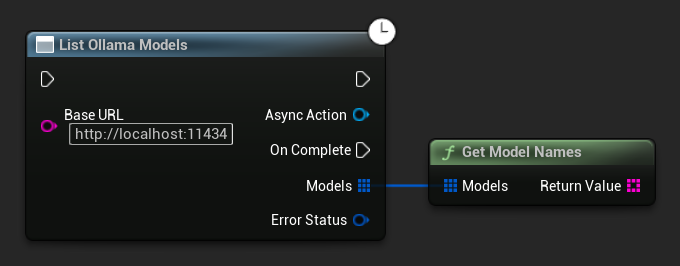

سرد نماذج أولاما

يمكنك الاستعلام عن مثيل أولاما المحلي الخاص بك لجميع النماذج المتاحة باستخدام دالة ListOllamaModels. يمكن أن يكون هذا مفيدًا، على سبيل المثال، لملء منتقي نموذج ديناميكيًا في واجهة المستخدم الخاصة بك. يقوم المساعد GetModelNames باستخراج أسماء السلاسل فقط من النتيجة للراحة.

- Blueprint

- C++

// Example of listing locally available Ollama models

UAIChatbotIntegratorOllamaModelList::ListModelsNative(

TEXT("http://localhost:11434"),

FOnOllamaListModelsResponseNative::CreateWeakLambda(

this,

[this](const TArray<FOllamaModelInfo>& Models, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (!ErrorStatus.bIsError)

{

for (const FOllamaModelInfo& Model : Models)

{

UE_LOG(LogTemp, Log, TEXT("Model: %s | Family: %s | Parameters: %s | Quantization: %s | Size: %lld bytes"),

*Model.Name, *Model.Family, *Model.ParameterSize, *Model.QuantizationLevel, Model.Size);

}

// Convenience helper to get just the name strings, e.g. for a UI dropdown

TArray<FString> ModelNames = UAIChatbotIntegratorOllamaModelList::GetModelNames(Models);

}

else

{

UE_LOG(LogTemp, Error, TEXT("Failed to list Ollama models: %s"), *ErrorStatus.ErrorMessage);

}

}

)

);

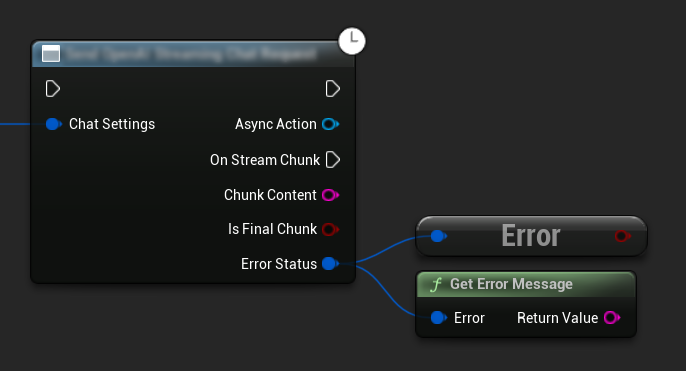

معالجة الأخطاء

عند إرسال أي طلبات، من الضروري معالجة الأخطاء المحتملة عن طريق التحقق من ErrorStatus في رد الاتصال الخاص بك. يوفر ErrorStatus معلومات حول أي مشكلات قد تحدث أثناء الطلب.

- Blueprint

- C++

// Example of error handling in a request

UAIChatbotIntegratorOpenAI::SendChatRequestNative(

Settings,

FOnOpenAIChatCompletionResponseNative::CreateWeakLambda(

this,

[this](const FString& Response, const FChatbotIntegratorErrorStatus& ErrorStatus)

{

if (ErrorStatus.bIsError)

{

// Handle the error

UE_LOG(LogTemp, Error, TEXT("Chat request failed: %s"), *ErrorStatus.ErrorMessage);

}

else

{

// Process the successful response

UE_LOG(LogTemp, Log, TEXT("Received response: %s"), *Response);

}

}

)

);

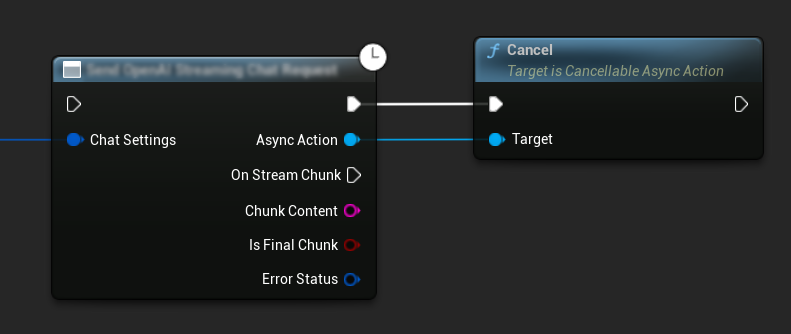

إلغاء الطلبات

يسمح لك البرنامج المساعد بإلغاء كل من طلبات النص إلى النص وطلبات تحويل النص إلى كلام أثناء تنفيذها. يمكن أن يكون هذا مفيدًا عندما تريد مقاطعة طلب طويل الأمد أو تغيير تدفق المحادثة ديناميكيًا.

- Blueprint

- C++

// Example of cancelling requests

UAIChatbotIntegratorOpenAI* ChatRequest = UAIChatbotIntegratorOpenAI::SendChatRequestNative(

ChatSettings,

ChatResponseCallback

);

// Cancel the chat request at any time

ChatRequest->Cancel();

// TTS requests can be cancelled similarly

UAIChatbotIntegratorOpenAITTS* TTSRequest = UAIChatbotIntegratorOpenAITTS::SendTTSRequestNative(

TTSSettings,

TTSResponseCallback

);

// Cancel the TTS request

TTSRequest->Cancel();

أفضل الممارسات

- تعامل دائمًا مع الأخطاء المحتملة عن طريق التحقق من

ErrorStatusفي رد الاتصال الخاص بك - كن حذرًا بشأن حدود معدل الطلبات والتكاليف لكل مزود خدمة

- استخدم وضع التدفق للمحادثات الطويلة أو التفاعلية

- فكر في إلغاء الطلبات التي لم تعد هناك حاجة إليها لإدارة الموارد بكفاءة

- استخدم تحويل النص إلى كلام بالتدفق للنصوص الطويلة لتقليل زمن التأخير الملحوظ

- لمعالجة الصوت، يقدم مكون Runtime Audio Importer الإضافي حلاً مناسبًا، ولكن يمكنك تنفيذ معالجة مخصصة بناءً على احتياجات مشروعك

- عند استخدام نماذج التفكير (DeepSeek Reasoner, Grok)، تعامل مع مخرجات التفكير والمحتوى بشكل مناسب

- اكتشف الأصوات المتاحة باستخدام واجهات برمجة التطبيقات الخاصة بسرد الأصوات قبل تنفيذ ميزات تحويل النص إلى كلام

- للتدفق المجزأ لـ ElevenLabs: استخدم الوضع المستمر عندما يتم إنشاء النص تدريجيًا (مثل ردود الذكاء الاصطناعي) والوضع الفوري لقطع النص المشكلة مسبقًا

- قم بتكوين مهلات إفراغ مناسبة للوضع المستمر لتحقيق التوازن بين سرعة الاستجابة وتدفق الكلام الطبيعي

- اختر أحجام القطع المثلى وفترات التأخير في الإرسال بناءً على متطلبات التطبيق في الوقت الفعلي

- لـ Ollama: استخدم

ListOllamaModelsلاكتشاف النماذج المتاحة ديناميكيًا بدلاً من ترميز أسماء النماذج بشكل ثابت

استكشاف الأخطاء وإصلاحها

- تحقق من صحة بيانات اعتماد واجهة برمجة التطبيقات الخاصة بك لكل مزود خدمة

- تحقق من اتصالك بالإنترنت

- تأكد من تثبيت أي مكتبات لمعالجة الصوت تستخدمها (مثل Runtime Audio Importer) بشكل صحيح عند العمل مع ميزات تحويل النص إلى كلام

- تحقق من أنك تستخدم تنسيق الصوت الصحيح عند معالجة بيانات استجابة تحويل النص إلى كلام

- لتحويل النص إلى كلام بالتدفق، تأكد من أنك تتعامل مع قطع الصوت بشكل صحيح

- لنماذج التفكير، تأكد من أنك تعالج مخرجات التفكير والمحتوى معًا

- راجع الوثائق الخاصة بالمزود لتوافر النماذج وإمكانياتها

- للتدفق المجزأ لـ ElevenLabs: تأكد من استدعاء

FinishChunkedStreamingعند الانتهاء لإغلاق الجلسة بشكل صحيح - لمشاكل الوضع المستمر: تحقق من اكتشاف حدود الجمل بشكل صحيح في نصك

- للتطبيقات في الوقت الفعلي: اضبط فترات التأخير في إرسال القطع ومهلات الإفراغ بناءً على متطلبات زمن التأخير لديك

- لـ Ollama: تأكد من أن خادم Ollama قيد التشغيل ويمكن الوصول إليه عند

BaseUrlالمُكون قبل إرسال الطلبات