How to Use the Plugin

This guide covers the complete runtime API: creating an LLM instance, loading models, sending messages, downloading models at runtime, managing state, and utility functions.

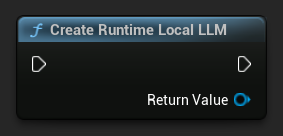

Creating an LLM instance

Start by creating a Runtime Local LLM object. Keep a reference to it (for example, as a variable in Blueprints or as a UPROPERTY in C++) so that it is not garbage-collected prematurely.

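```cpp
// In your class declaration: a UPROPERTY reference keeps the object alive
UPROPERTY()
URuntimeLocalLLM* LLM;

// Later, e.g. in BeginPlay(): create the instance
LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();
```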

Loading a model

You must load a model before you can send messages. The plugin offers several loading methods, depending on your workflow.

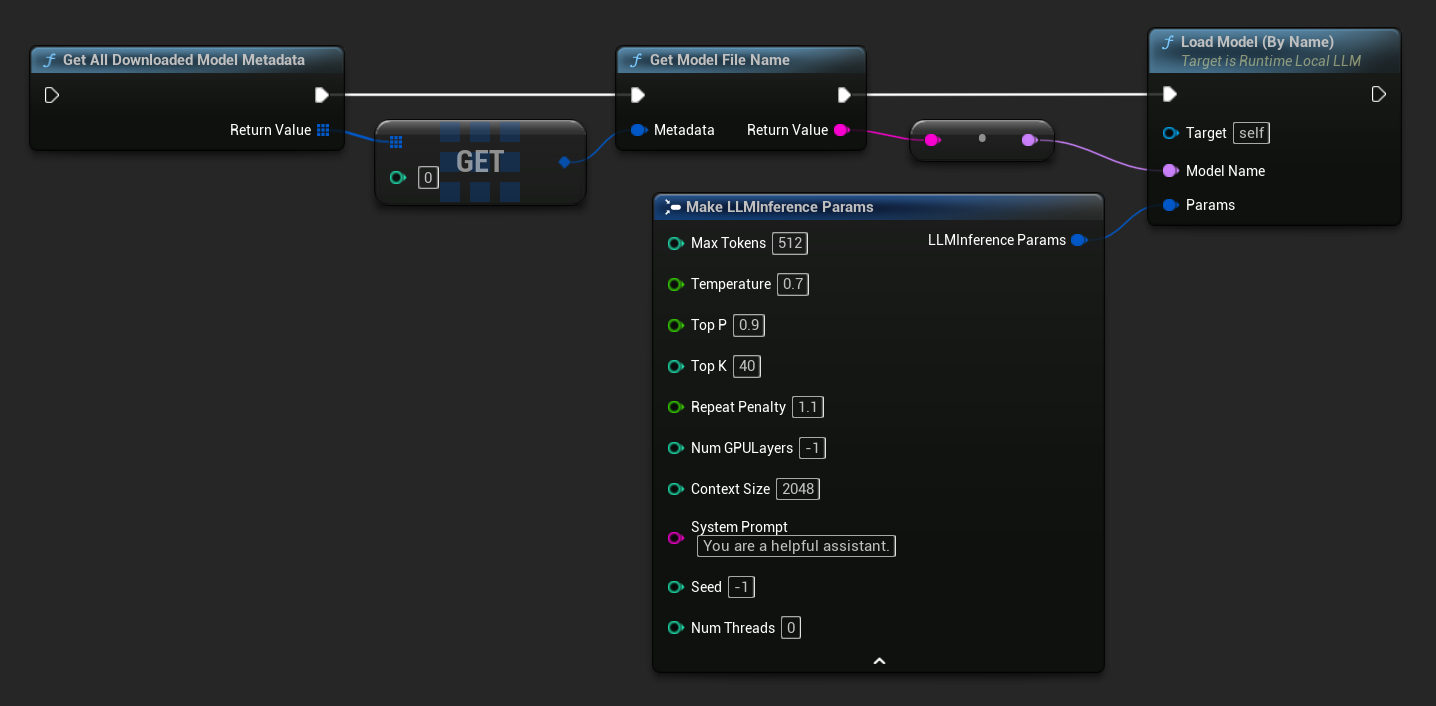

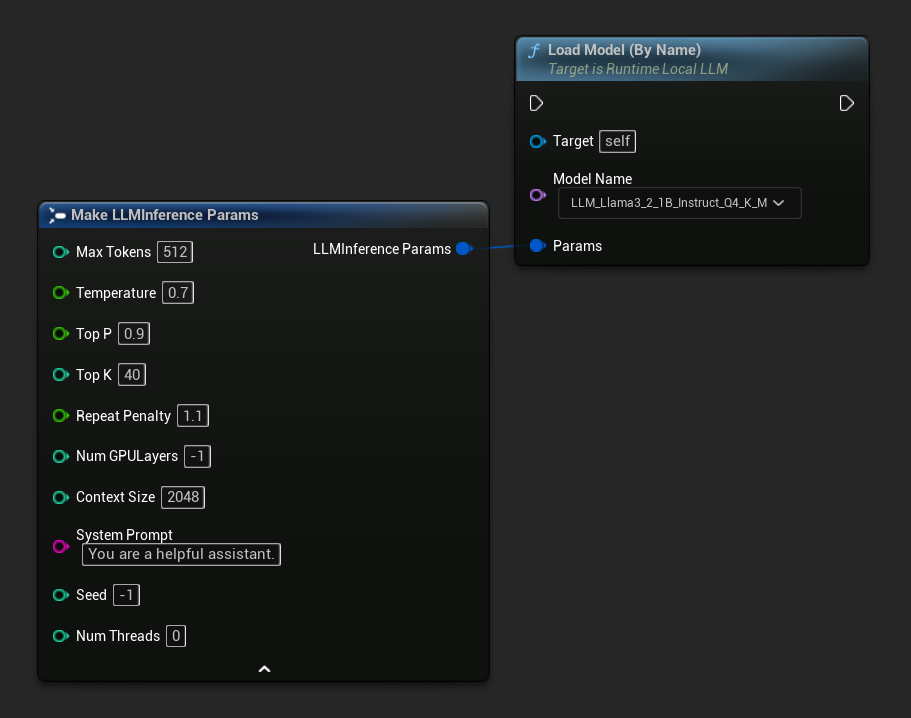

Loading by name

If you manage models through the editor settings panel, use Load Model (By Name).


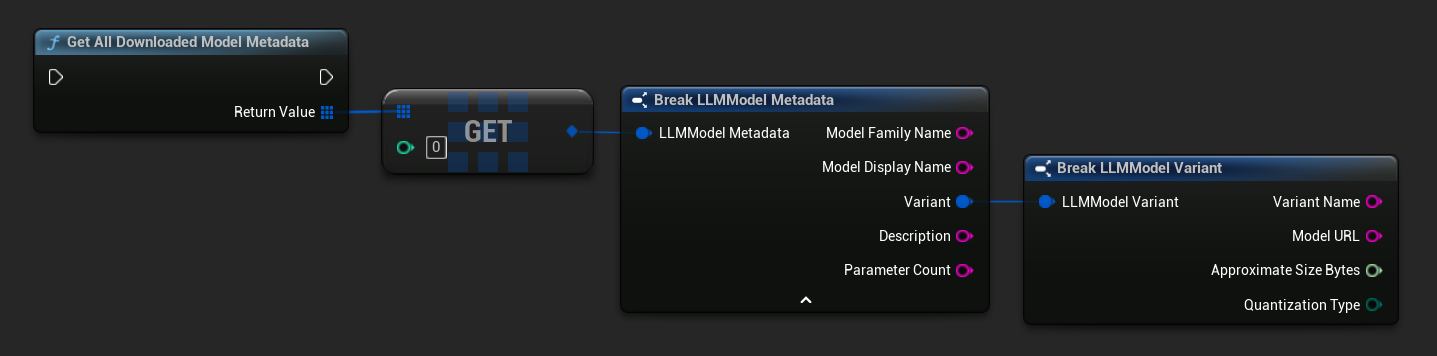

In UE 5.3 and earlier, the dropdown is not shown, so you must retrieve the available models manually: call Get All Downloaded Model Metadata, get the element at index 0 (or the model you need), pass it to Get Model File Name to obtain the name string, and then pass that string to Load Model (By Name).

In UE 5.4 and later, Load Model (By Name) shows a dropdown of all models on disk; simply select the model you want to load.

In C++, use GetAllDownloadedModelMetadata to retrieve the available models and GetModelFileName to get the name you pass to LoadModelByName:

```cpp
FLLMInferenceParams Params;
Params.MaxTokens = 512;
Params.Temperature = 0.7f;
Params.SystemPrompt = TEXT("You are a helpful assistant.");

TArray<FLLMModelMetadata> DownloadedModels = URuntimeLLMLibrary::GetAllDownloadedModelMetadata();
if (DownloadedModels.Num() > 0)
{
    const FLLMModelMetadata& Model = DownloadedModels[0]; // Select the first available model
    FString ModelFileName = URuntimeLLMLibrary::GetModelFileName(Model);
    LLM->LoadModelByName(FName(*ModelFileName), Params);
}
```
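If you need a specific model rather than whatever sits at index 0, you can select it by file name and fall back to the first entry. The sketch below shows that selection logic in standard C++; `ModelInfo` and `PickModelFileName` are hypothetical stand-ins for `FLLMModelMetadata` and the string returned by `GetModelFileName`, not part of the plugin.

```cpp
#include <cassert>
#include <optional>
#include <string>
#include <vector>

// Hypothetical stand-in for FLLMModelMetadata: only the file name matters here.
struct ModelInfo {
    std::string FileName;  // what GetModelFileName would return for this model
};

// Returns the name to pass to LoadModelByName: the preferred model if it has been
// downloaded, otherwise the first downloaded model, otherwise nothing.
std::optional<std::string> PickModelFileName(const std::vector<ModelInfo>& Downloaded,
                                             const std::string& Preferred) {
    for (const ModelInfo& Model : Downloaded) {
        if (Model.FileName == Preferred) {
            return Model.FileName;
        }
    }
    if (!Downloaded.empty()) {
        return Downloaded.front().FileName;  // same fallback as "index 0"
    }
    return std::nullopt;  // nothing on disk: download a model first
}
```

In Unreal code you would convert the chosen string with `FName(*...)` exactly as in the snippet above.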

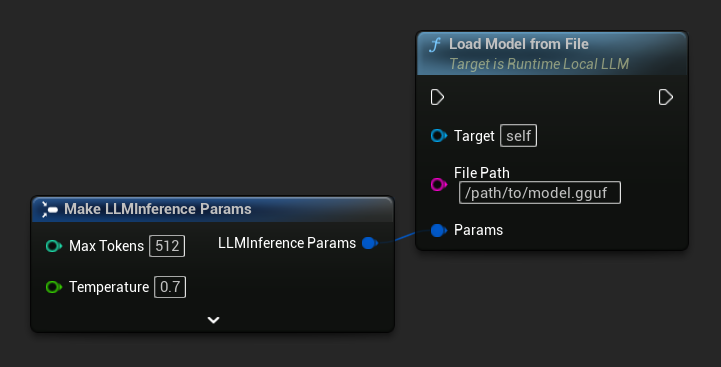

Loading from a file path

Load a model directly from an absolute file path to a .gguf file:

```cpp
FLLMInferenceParams Params;
LLM->LoadModelFromFile(TEXT("/path/to/model.gguf"), Params);
```

Loading from a URL (download and load)

Download a model from a URL (if it is not already on disk) and load it automatically. If the file already exists locally, the download is skipped.

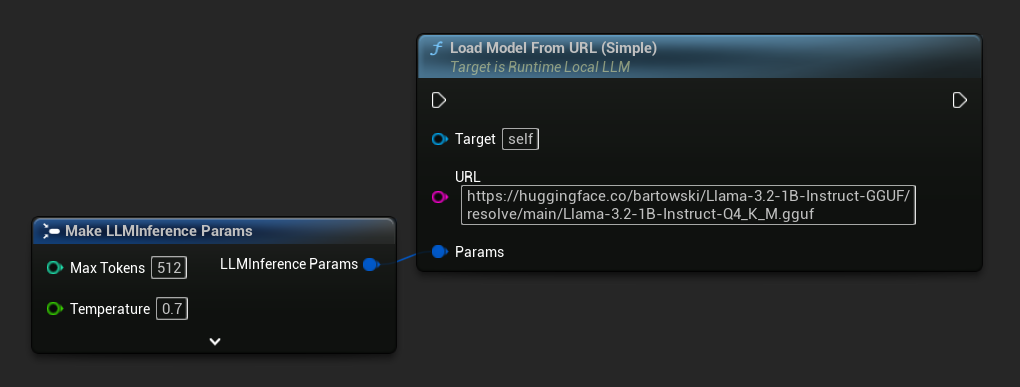

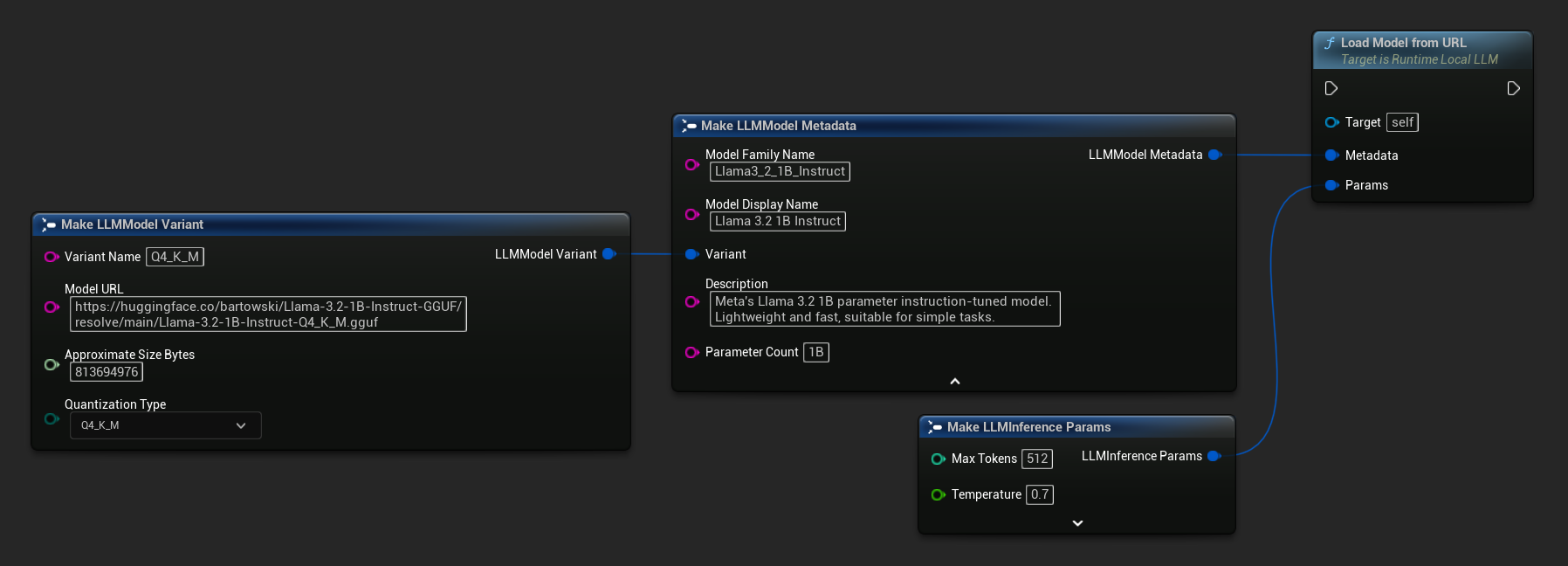


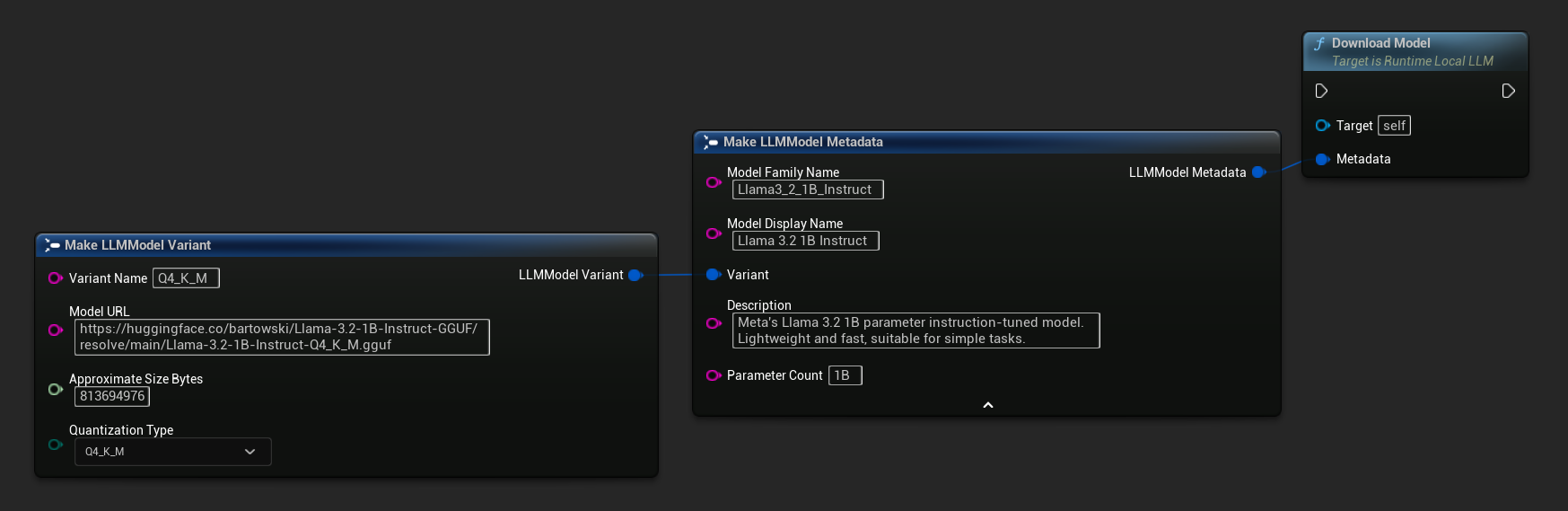

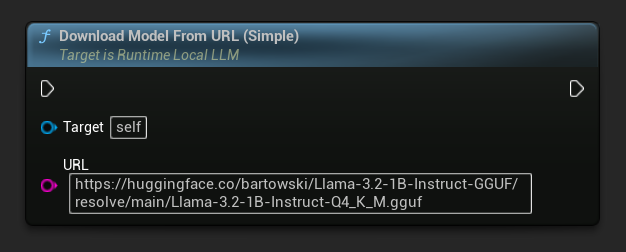

The simplest variant needs only a URL; the metadata is derived from the file name.

You can also use Load Model From URL with full model metadata to provide richer model information:

```cpp
FLLMInferenceParams Params;

// Simple: URL only - metadata is derived from the filename
LLM->LoadModelFromURLSimple(
    TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf"),
    Params);

// Alternative: with full metadata
FLLMModelMetadata Metadata;
Metadata.ModelFamilyName = TEXT("Llama3_2_1B_Instruct");
Metadata.ModelDisplayName = TEXT("Llama 3.2 1B Instruct");
Metadata.Description = TEXT("Meta's Llama 3.2 1B parameter instruction-tuned model. Lightweight and fast, suitable for simple tasks.");
Metadata.ParameterCount = TEXT("1B");
Metadata.Variant.VariantName = TEXT("Q4_K_M");
Metadata.Variant.ModelURL = TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf");
Metadata.Variant.ApproximateSizeBytes = 776LL * 1024 * 1024;
Metadata.Variant.QuantizationType = ELLMQuantizationType::Q4_K_M;

LLM->LoadModelFromURL(Metadata, Params);
```
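The "skip the download if the file already exists" rule can be illustrated with `std::filesystem`. In this sketch, `EnsureModelOnDisk` and the placeholder `FakeDownload` are hypothetical; the plugin may apply additional checks (for example, on file size) that are not documented here.

```cpp
#include <cassert>
#include <filesystem>
#include <fstream>
#include <functional>

namespace fs = std::filesystem;

// Fake downloader for illustration: writes a placeholder file instead of fetching a URL.
bool FakeDownload(const fs::path& Path) {
    std::ofstream Out(Path, std::ios::binary);
    Out << "GGUF";
    return static_cast<bool>(Out);
}

// Hypothetical "download only if missing" helper. `Download` must create the file at
// `Path`. Returns true if the model file is on disk afterwards; bDidDownload reports
// whether a download was actually attempted.
bool EnsureModelOnDisk(const fs::path& Path,
                       const std::function<bool(const fs::path&)>& Download,
                       bool& bDidDownload) {
    bDidDownload = false;
    std::error_code Ec;
    if (fs::exists(Path, Ec)) {
        return true;  // already cached locally: skip the download
    }
    bDidDownload = true;
    return Download(Path) && fs::exists(Path, Ec);
}
```

Calling it twice for the same path downloads once and then hits the local copy, which is the behavior described for Load Model From URL.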

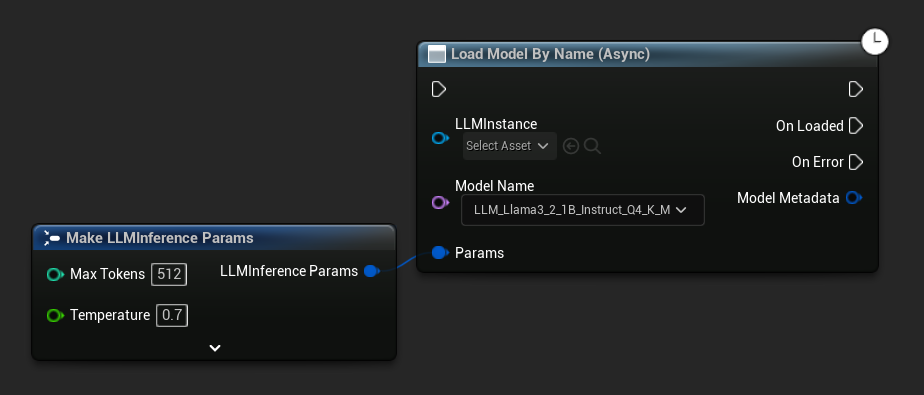

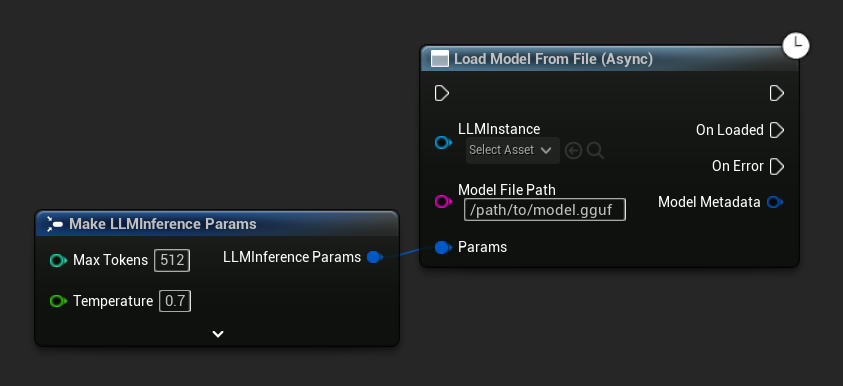

Asynchronous loading (Blueprint)

To handle load completion and errors through output pins instead of binding delegates manually, two async nodes are available.

Load Model By Name (Async) mirrors Load Model (By Name); in UE 5.4+ it shows a dropdown of all models on disk:


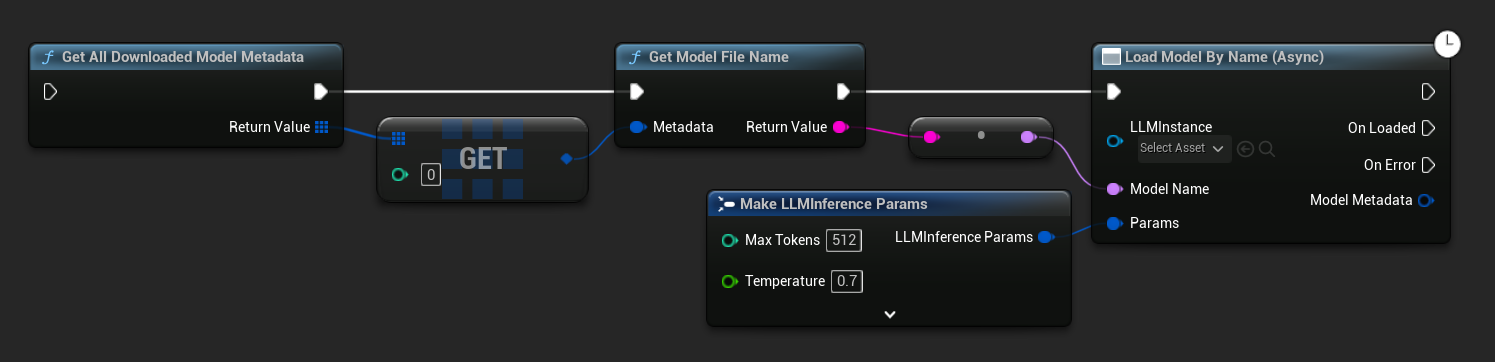

In UE 5.3 and earlier, the dropdown does not appear. Use Get All Downloaded Model Metadata, get the element at index 0 (or the model you need), pass it to Get Model File Name, and pass the result to Load Model By Name (Async).

Load Model From File (Async) takes an absolute file path instead.

Binding events

Bind to the LLM instance's delegates to receive callbacks. All callbacks fire on the game thread.

Available delegates:

- On Token Generated: fires for each output token
- On Generation Complete: fires when the full response is ready, with duration, token count, and tokens per second
- On Prompt Processed: fires after the input prompt has been processed and before generation begins
- On Error: fires when an operation fails
- On Model Loaded: fires when a model has finished loading
- On Model Unloaded: fires when the model is unloaded
- On Download Progress: fires periodically during a model download (progress fraction, bytes received, total bytes)
- On Model Downloaded: fires when a download-only operation completes

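```cpp
LLM->OnTokenGeneratedNative.AddLambda([](const FString& Token)
{
    // Called once per output token, e.g. append Token to a chat widget
});

LLM->OnGenerationCompleteNative.AddLambda([](const FString& FullResponse)
{
    // FullResponse contains the complete generated text
});

LLM->OnPromptProcessedNative.AddLambda([]()
{
    // The prompt has been processed; token generation starts next
});

LLM->OnErrorNative.AddLambda([](const FString& ErrorMessage)
{
    UE_LOG(LogTemp, Error, TEXT("LLM error: %s"), *ErrorMessage);
});

LLM->OnModelLoadedNative.AddLambda([](const FString& ModelName)
{
    // The model is ready; it is now safe to send messages
});

LLM->OnModelUnloadedNative.AddLambda([](const FString& ModelName)
{
    // The model's resources have been released
});

LLM->OnDownloadProgressNative.AddLambda([](const FString& ModelName, float Progress)
{
    // Progress is the completed fraction of the download
});

LLM->OnModelDownloadedNative.AddLambda([](const FString& ModelName)
{
    // The model file is now on disk and can be loaded
});
```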

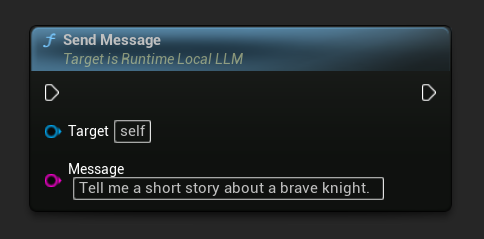

Sending messages

Once a model is loaded, send a user message to generate a response:

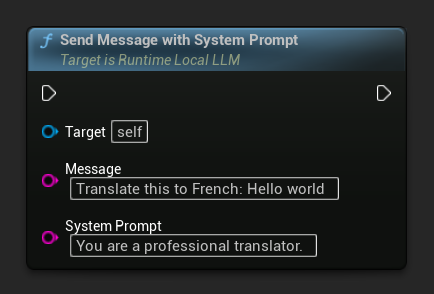

To override the system prompt for a single message, use Send Message With System Prompt:

```cpp
// Uses the system prompt from the inference params applied at load time
LLM->SendMessage(TEXT("Tell me a short story about a brave knight."));

// With a custom system prompt override
LLM->SendMessageWithSystemPrompt(
    TEXT("Translate this to French: Hello world"),
    TEXT("You are a professional translator.")
);
```

Tokens stream through OnTokenGenerated as they are produced. When generation finishes, OnGenerationComplete fires with the full response, duration, token count, and tokens per second.
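The streaming contract can be modeled outside the engine to reason about UI updates. The following sketch uses `std::function` callbacks as hypothetical stand-ins for OnTokenGenerated and OnGenerationComplete (it is not the plugin's delegate API) and assumes that the full response equals the concatenation of the streamed tokens.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <vector>

// Hypothetical stand-ins for OnTokenGenerated / OnGenerationComplete.
struct StreamCallbacks {
    std::function<void(const std::string&)> OnToken;
    std::function<void(const std::string&)> OnComplete;
};

// Simulates one generation run: each token is delivered as soon as it is "produced",
// then the complete response (all tokens concatenated) is delivered exactly once.
void SimulateGeneration(const std::vector<std::string>& Tokens, const StreamCallbacks& Callbacks) {
    std::string Full;
    for (const std::string& Token : Tokens) {
        Full += Token;
        if (Callbacks.OnToken) {
            Callbacks.OnToken(Token);
        }
    }
    if (Callbacks.OnComplete) {
        Callbacks.OnComplete(Full);
    }
}
```

A typical pattern is to append tokens to a display buffer for live feedback and treat the completion payload as the authoritative final text.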

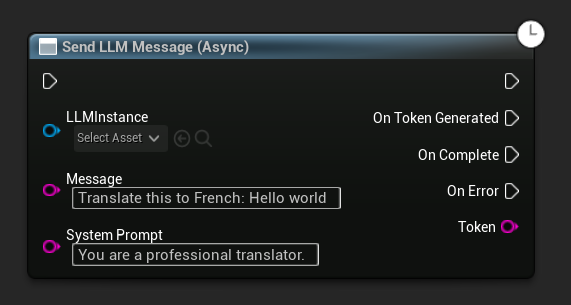

Sending a message asynchronously (Blueprint)

The Send LLM Message (Async) node provides dedicated output pins for tokens, completion, and errors.

Downloading models at runtime

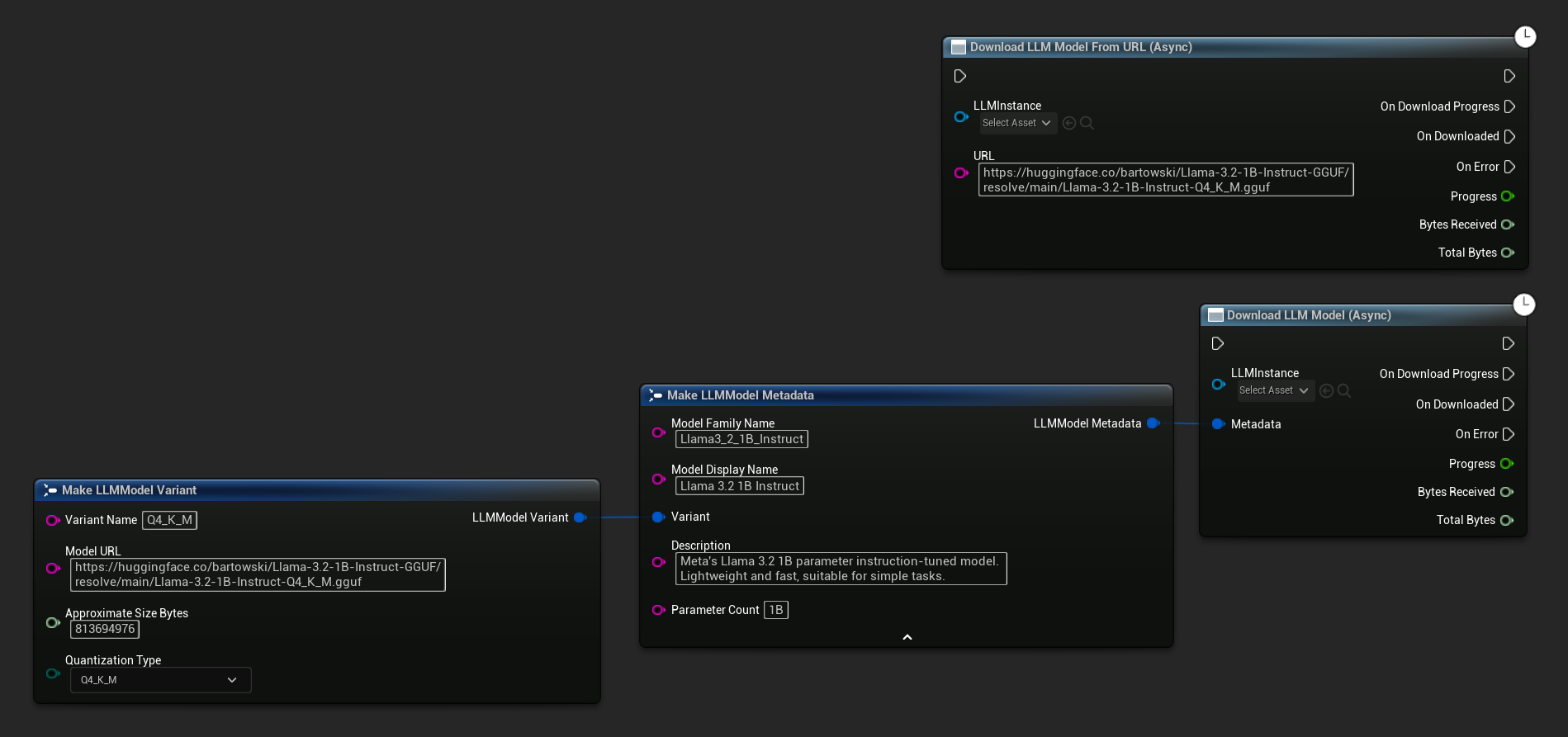

In addition to the download-and-load flow described above, you can download a model to disk without loading it. This is useful for pre-caching models on a loading screen or in a settings menu.

A URL-only variant is also available.

The Download LLM Model (Async) and Download LLM Model From URL (Async) nodes provide output pins for progress, completion, and errors.

```cpp
// With full metadata
FLLMModelMetadata Metadata;
Metadata.ModelFamilyName = TEXT("Llama3_2_1B_Instruct");
Metadata.ModelDisplayName = TEXT("Llama 3.2 1B Instruct");
Metadata.Description = TEXT("Meta's Llama 3.2 1B parameter instruction-tuned model. Lightweight and fast, suitable for simple tasks.");
Metadata.ParameterCount = TEXT("1B");
Metadata.Variant.VariantName = TEXT("Q4_K_M");
Metadata.Variant.ModelURL = TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf");
Metadata.Variant.ApproximateSizeBytes = 776LL * 1024 * 1024;
Metadata.Variant.QuantizationType = ELLMQuantizationType::Q4_K_M;

LLM->DownloadModel(Metadata);

// URL only
LLM->DownloadModelFromURL(
    TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf"));
```

The OnDownloadProgress delegate reports progress during the download. OnModelDownloaded fires once the file has been saved to disk.

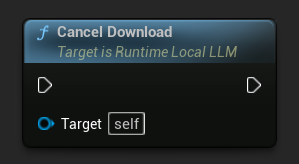

To cancel a download in progress:

```cpp
LLM->CancelDownload();
```

The plugin automatically prevents duplicate downloads: if a download for the same model is already running, subsequent calls are ignored.
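The duplicate-download rule can be sketched with a set of in-flight keys. Keying by URL and the `DownloadGuard` class are assumptions for illustration; the plugin's own bookkeeping is internal and may differ (for example, it may key by model metadata).

```cpp
#include <cassert>
#include <set>
#include <string>

// Hypothetical duplicate-download suppression keyed by model URL.
class DownloadGuard {
public:
    // Returns true if the caller should start a download,
    // false if a download for this URL is already running (the call is ignored).
    bool TryBegin(const std::string& ModelUrl) {
        return InFlight.insert(ModelUrl).second;
    }

    // Call when a download finishes, fails, or is cancelled so it can be requested again.
    void End(const std::string& ModelUrl) {
        InFlight.erase(ModelUrl);
    }

    bool IsDownloading() const { return !InFlight.empty(); }

private:
    std::set<std::string> InFlight;
};
```

Releasing the key on every exit path (success, error, cancel) matters: otherwise a failed download would block all later retries.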

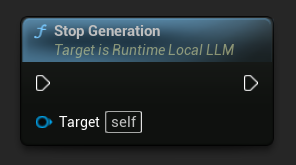

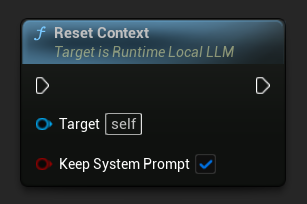

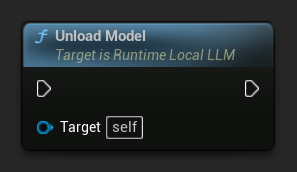

Stopping generation

To interrupt a generation in progress:

```cpp
LLM->StopGeneration();
```

Resetting the conversation context

Clear the conversation history to start a new conversation:

```cpp
// Keep the system prompt
LLM->ResetContext(true);

// Clear everything including the system prompt
LLM->ResetContext(false);
```
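To make the two modes concrete, the sketch below models the conversation as a hypothetical list of role-tagged messages (`ChatMessage`, `ChatHistory`). It only illustrates the documented keep/clear semantics of the boolean argument and makes no claim about how the plugin stores its context internally.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Hypothetical chat history, used only to illustrate ResetContext(true/false).
struct ChatMessage {
    std::string Role;  // "system", "user" or "assistant"
    std::string Text;
};

struct ChatHistory {
    std::vector<ChatMessage> Messages;

    // bKeepSystemPrompt == true: drop the conversation but keep the system prompt.
    // bKeepSystemPrompt == false: clear everything.
    void ResetContext(bool bKeepSystemPrompt) {
        std::vector<ChatMessage> Kept;
        if (bKeepSystemPrompt) {
            for (const ChatMessage& M : Messages) {
                if (M.Role == "system") {
                    Kept.push_back(M);
                }
            }
        }
        Messages = std::move(Kept);
    }
};
```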

Unloading a model

Free resources when a model is no longer needed:

```cpp
LLM->UnloadModel();
```

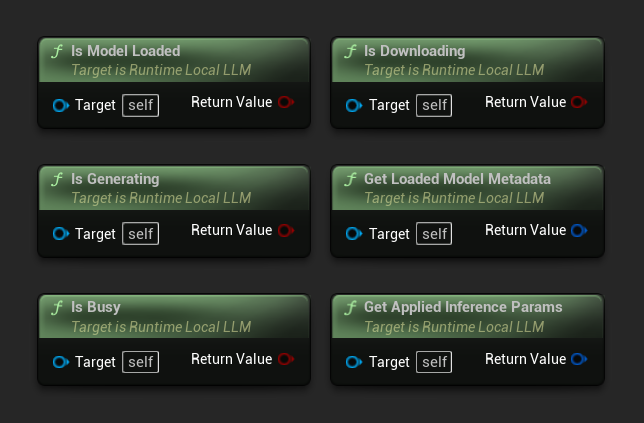

Querying state

Check the current state of the LLM instance:

- Is Model Loaded: true if a model is ready for inference
- Is Generating: true if a generation is currently running
- Is Busy: true if any operation (loading, generating, downloading) is active
- Is Downloading: true if a model download is in progress
- Get Loaded Model Metadata: returns the metadata of the currently loaded model
- Get Applied Inference Params: returns the parameters that were applied at load time

```cpp
// Is Model Loaded - true if a model is ready for inference
if (LLM->IsModelLoaded())
{
    FLLMModelMetadata Metadata = LLM->GetLoadedModelMetadata();
    UE_LOG(LogTemp, Log, TEXT("Model: %s"), *Metadata.ModelDisplayName);

    FLLMInferenceParams Params = LLM->GetAppliedInferenceParams();
    UE_LOG(LogTemp, Log, TEXT("Context size: %d"), Params.ContextSize);
}

// Is Generating - true if token generation is currently active
if (LLM->IsGenerating())
{
    UE_LOG(LogTemp, Log, TEXT("Generation in progress..."));
}

// Is Busy - true if any operation (loading, generating, downloading) is active
if (LLM->IsBusy())
{
    UE_LOG(LogTemp, Log, TEXT("LLM is busy, deferring request"));
}

// Is Downloading - true if a model download is currently in progress
if (LLM->IsDownloading())
{
    UE_LOG(LogTemp, Log, TEXT("Model download in progress..."));
}

// Safe to send a new message or load a different model
if (!LLM->IsGenerating() && !LLM->IsBusy())
{
    UE_LOG(LogTemp, Log, TEXT("LLM is idle and ready"));
}
```
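These queries are typically combined into a small gate before issuing a request. The sketch below models them as plain booleans in a hypothetical `LLMState` struct. Requiring a loaded model before sending (to avoid the ModelNotLoaded error) and refusing while any operation is active are policy choices of this sketch, not documented plugin rules.

```cpp
#include <cassert>

// Hypothetical snapshot of the query functions (IsModelLoaded, IsGenerating, ...).
struct LLMState {
    bool bModelLoaded = false;
    bool bLoading = false;
    bool bGenerating = false;
    bool bDownloading = false;

    // Mirrors the documented meaning of Is Busy: loading, generating, or downloading.
    bool IsBusy() const { return bLoading || bGenerating || bDownloading; }
};

// A message is sent only when a model is loaded and nothing else is running.
bool CanSendMessage(const LLMState& S) {
    return S.bModelLoaded && !S.IsBusy();
}

// Loading a different model waits until the instance is idle.
bool CanLoadModel(const LLMState& S) {
    return !S.IsBusy();
}
```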

Model library functions

A set of static helper functions is provided for managing model files on disk. They are useful for building a model-selection UI or for checking model availability at runtime.

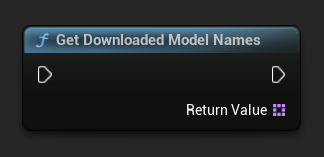

Getting downloaded model names / metadata

```cpp
TArray<FName> ModelNames = URuntimeLLMLibrary::GetDownloadedModelNames();

TArray<FLLMModelMetadata> AllModels = URuntimeLLMLibrary::GetAllDownloadedModelMetadata();
for (const FLLMModelMetadata& Model : AllModels)
{
    UE_LOG(LogTemp, Log, TEXT("Model: %s (%s)"), *Model.ModelDisplayName, *Model.Variant.VariantName);
}
```
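When building a model-selection UI from these results, it can help to group the downloaded variants by model family. The sketch below does this with standard containers; `ModelMeta` is a hypothetical stand-in holding copies of the `ModelFamilyName` and `Variant.VariantName` fields, not the plugin's struct.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical mirror of the two FLLMModelMetadata fields used here.
struct ModelMeta {
    std::string ModelFamilyName;  // e.g. "Llama3_2_1B_Instruct"
    std::string VariantName;      // e.g. "Q4_K_M"
};

// Groups variants by family (families sorted by name, variants in input order),
// so a UI can show one entry per family with its quantizations underneath.
std::map<std::string, std::vector<std::string>> GroupVariantsByFamily(const std::vector<ModelMeta>& Models) {
    std::map<std::string, std::vector<std::string>> Groups;
    for (const ModelMeta& M : Models) {
        Groups[M.ModelFamilyName].push_back(M.VariantName);
    }
    return Groups;
}
```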

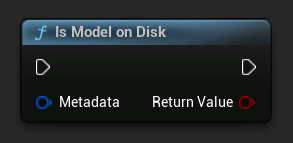

Checking whether a model is on disk

```cpp
// Metadata: the FLLMModelMetadata of the model to check
bool bExists = URuntimeLLMLibrary::IsModelOnDisk(Metadata);
```

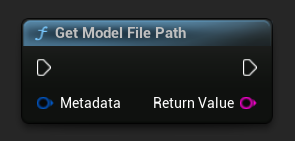

Getting the model file path

```cpp
// Metadata: the FLLMModelMetadata of the model
FString FilePath = URuntimeLLMLibrary::GetModelFilePath(Metadata);
```

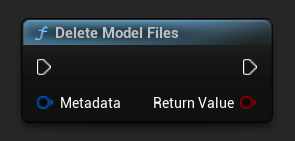

Deleting model files

```cpp
// Metadata: the FLLMModelMetadata of the model to delete
bool bDeleted = URuntimeLLMLibrary::DeleteModelFiles(Metadata);
```

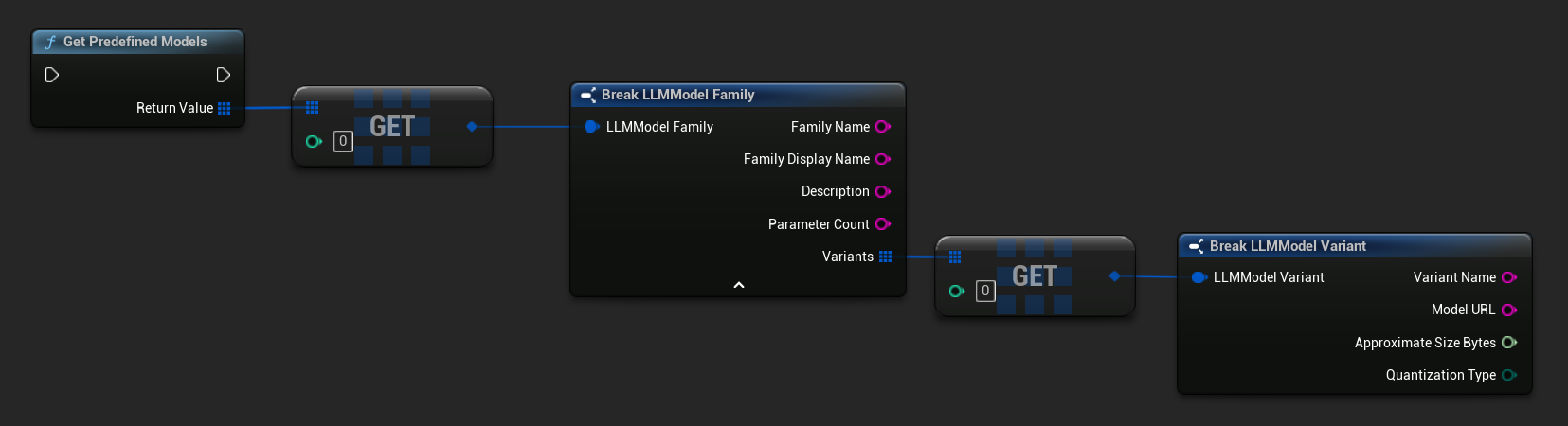

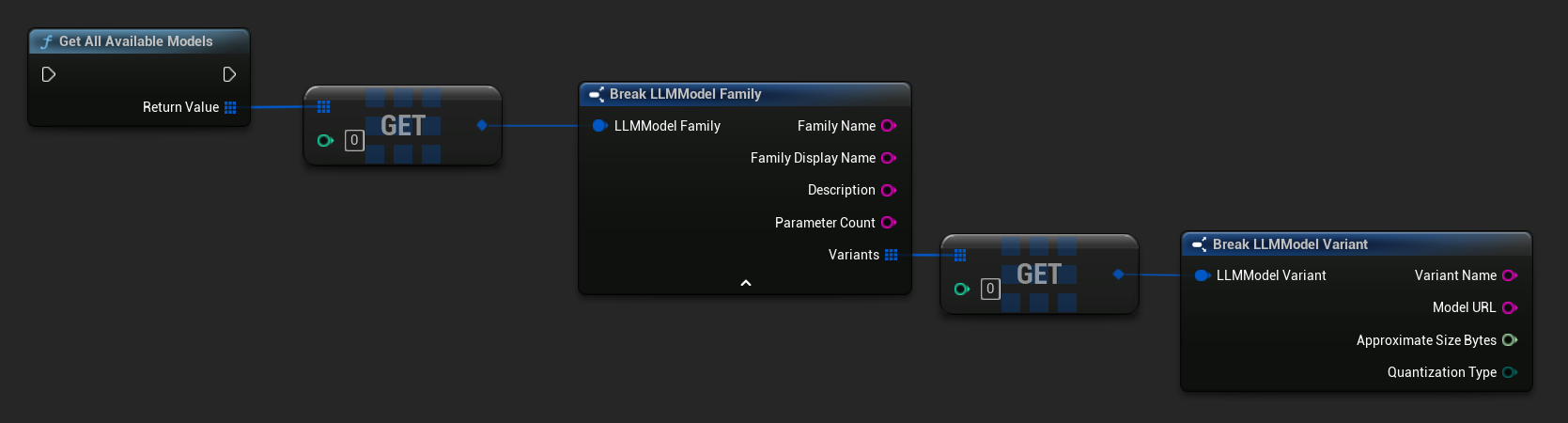

Getting predefined and available models

```cpp
// Built-in catalog only
TArray<FLLMModelFamily> Predefined = URuntimeLLMLibrary::GetPredefinedModels();

// Catalog + custom imports
TArray<FLLMModelFamily> All = URuntimeLLMLibrary::GetAllAvailableModels();
```

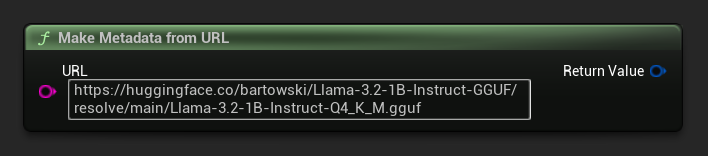

Creating metadata from a URL

Construct model metadata from a raw URL (the fields are derived from the file name):

```cpp
FLLMModelMetadata Metadata = URuntimeLocalLLM::MakeMetadataFromURL(
    TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf")
);
```
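To illustrate what "derived from the file name" can mean, the following sketch splits a Hugging Face GGUF URL into file name, base name, and quantization suffix. The splitting rule (ignore any query string, drop `.gguf`, take the text after the last `-` as the variant) is an assumption; MakeMetadataFromURL may derive its fields differently. `ParsedModelName` and `ParseModelUrl` are hypothetical.

```cpp
#include <cassert>
#include <string>

// Hypothetical result of parsing a GGUF download URL.
struct ParsedModelName {
    std::string FileName;  // last path segment, e.g. "Llama-3.2-1B-Instruct-Q4_K_M.gguf"
    std::string BaseName;  // file name without the ".gguf" extension
    std::string Variant;   // text after the last '-', e.g. "Q4_K_M"
};

ParsedModelName ParseModelUrl(const std::string& Url) {
    ParsedModelName Out;
    // Ignore any query string or fragment such as "?download=true".
    const std::string Path = Url.substr(0, Url.find_first_of("?#"));
    const std::size_t Slash = Path.find_last_of('/');
    Out.FileName = (Slash == std::string::npos) ? Path : Path.substr(Slash + 1);

    // Strip a trailing ".gguf" extension if present.
    Out.BaseName = Out.FileName;
    const std::string Ext = ".gguf";
    if (Out.BaseName.size() >= Ext.size() &&
        Out.BaseName.compare(Out.BaseName.size() - Ext.size(), Ext.size(), Ext) == 0) {
        Out.BaseName.erase(Out.BaseName.size() - Ext.size());
    }

    // Treat the last dash-separated token as the quantization variant.
    const std::size_t Dash = Out.BaseName.find_last_of('-');
    if (Dash != std::string::npos) {
        Out.Variant = Out.BaseName.substr(Dash + 1);
    }
    return Out;
}
```

Applied to the Llama 3.2 URL above, this yields the variant `Q4_K_M`, which matches the `VariantName` set manually in the full-metadata example.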

Utility functions

A set of utility functions is provided for formatting and error display.

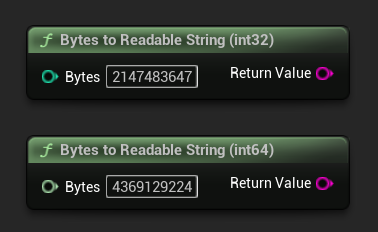

Converting bytes to a readable string

Converts a byte count into a human-readable string (e.g. "4.07 GB"). Useful for displaying model sizes in the UI.
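The plugin's exact rounding and unit conventions are not specified here. As a minimal sketch, assuming binary (1024-based) units and two decimal places, which is consistent with ApproximateSizeBytes being written as `776LL * 1024 * 1024` in the metadata examples, a converter could look like this; `FormatBytes` is a hypothetical name, not the plugin function.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Hypothetical formatter: binary units, two decimals, e.g. 813694976 -> "776.00 MB".
// Values below 1 KB are shown as whole bytes.
std::string FormatBytes(std::uint64_t Bytes) {
    static const char* Units[] = {"B", "KB", "MB", "GB", "TB"};
    if (Bytes < 1024) {
        return std::to_string(Bytes) + " B";
    }
    double Value = static_cast<double>(Bytes);
    int Unit = 0;
    while (Value >= 1024.0 && Unit < 4) {
        Value /= 1024.0;
        ++Unit;
    }
    char Buffer[32];
    std::snprintf(Buffer, sizeof(Buffer), "%.2f %s", Value, Units[Unit]);
    return Buffer;
}
```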

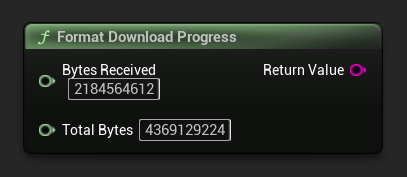

Formatting download progress

Formats a download progress string such as "1.23 GB / 4.07 GB (30.2%)". If the total size is unknown, only the received amount is returned.
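A minimal sketch of the same string layout, assuming binary units and that an unknown total is passed as 0 (the plugin's actual signal for "unknown" is not documented here). `FormatProgress` and its compact byte helper are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Compact byte formatter (binary units, two decimals) used by the progress string.
static std::string FormatBytesCompact(std::uint64_t Bytes) {
    if (Bytes < 1024) {
        return std::to_string(Bytes) + " B";
    }
    const char* Units[] = {"B", "KB", "MB", "GB", "TB"};
    double Value = static_cast<double>(Bytes);
    int Unit = 0;
    while (Value >= 1024.0 && Unit < 4) {
        Value /= 1024.0;
        ++Unit;
    }
    char Buf[32];
    std::snprintf(Buf, sizeof(Buf), "%.2f %s", Value, Units[Unit]);
    return Buf;
}

// "received / total (percent%)", or only the received amount when Total is 0 (unknown).
std::string FormatProgress(std::uint64_t Received, std::uint64_t Total) {
    if (Total == 0) {
        return FormatBytesCompact(Received);
    }
    const double Percent = 100.0 * static_cast<double>(Received) / static_cast<double>(Total);
    char Buf[96];
    std::snprintf(Buf, sizeof(Buf), "%s / %s (%.1f%%)",
                  FormatBytesCompact(Received).c_str(), FormatBytesCompact(Total).c_str(), Percent);
    return Buf;
}
```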

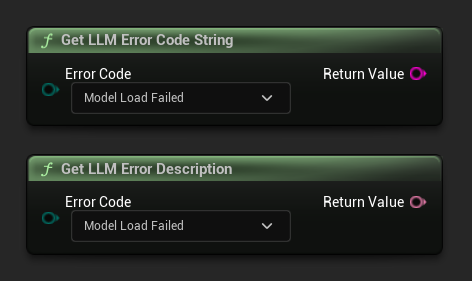

Getting the error description / error code string

Get LLM Error Description returns a human-readable description of an error code. Get LLM Error Code String returns the name of the enum value as a string (useful for logging).

Error code reference

| Code | Value | Description |
|---|---|---|
| Unknown | 0 | An unspecified error |
| ModelLoadFailed | 10 | The GGUF file could not be loaded (corrupt file, incompatible format, etc.) |
| ContextCreateFailed | 11 | The inference context could not be created |
| ModelNotLoaded | 20 | Inference was attempted without a loaded model |
| ChatTemplateFailed | 21 | The model's chat template could not be applied |
| TokenizationFailed | 22 | The input text could not be tokenized |
| ContextOverflow | 23 | The prompt plus existing context exceeds the configured context size |
| PromptDecodeFailed | 24 | The prompt tokens could not be decoded |
| ContextTooFullToGenerate | 25 | Not enough context space remains to generate output |
| GenerationDecodeFailed | 30 | A token could not be decoded during generation |
| GenerationTruncated | 31 | Generation stopped because the maximum token limit was reached |
| LLMInstanceNull | 40 | The LLM instance is null or invalid |
| ModelNotFoundOnDisk | 41 | The model file does not exist at the expected path |
| ModelURLEmpty | 42 | A download was requested with an empty URL |
| ModelDownloadCancelled | 43 | The download was cancelled |
| ModelDownloadEmptyData | 44 | The download completed, but the response body was empty |
| ModelDownloadSaveFailed | 45 | The download completed, but the file could not be saved to disk |
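Inside Unreal, Get LLM Error Code String already gives you these names. For tooling outside the engine that only sees the raw integer (for example, a script that post-processes logs), a hypothetical mirror of the table above could look like this; it is hand-copied from the table, so keep it in sync if the plugin's enum changes.

```cpp
#include <cassert>
#include <string>

// Hypothetical mirror of the error code table; maps a raw value to its enum name.
std::string LLMErrorCodeName(int Value) {
    switch (Value) {
        case 10: return "ModelLoadFailed";
        case 11: return "ContextCreateFailed";
        case 20: return "ModelNotLoaded";
        case 21: return "ChatTemplateFailed";
        case 22: return "TokenizationFailed";
        case 23: return "ContextOverflow";
        case 24: return "PromptDecodeFailed";
        case 25: return "ContextTooFullToGenerate";
        case 30: return "GenerationDecodeFailed";
        case 31: return "GenerationTruncated";
        case 40: return "LLMInstanceNull";
        case 41: return "ModelNotFoundOnDisk";
        case 42: return "ModelURLEmpty";
        case 43: return "ModelDownloadCancelled";
        case 44: return "ModelDownloadEmptyData";
        case 45: return "ModelDownloadSaveFailed";
        default: return "Unknown";  // 0 and any value not listed in the table
    }
}
```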