Ejemplos

Esta página proporciona ejemplos completos y listos para usar que demuestran flujos de trabajo comunes con el plugin Runtime Local LLM. Cada ejemplo incluye implementaciones tanto en Blueprint como en C++.

Asegúrate de haber leído Cómo usar el plugin primero para obtener una visión general de la API.

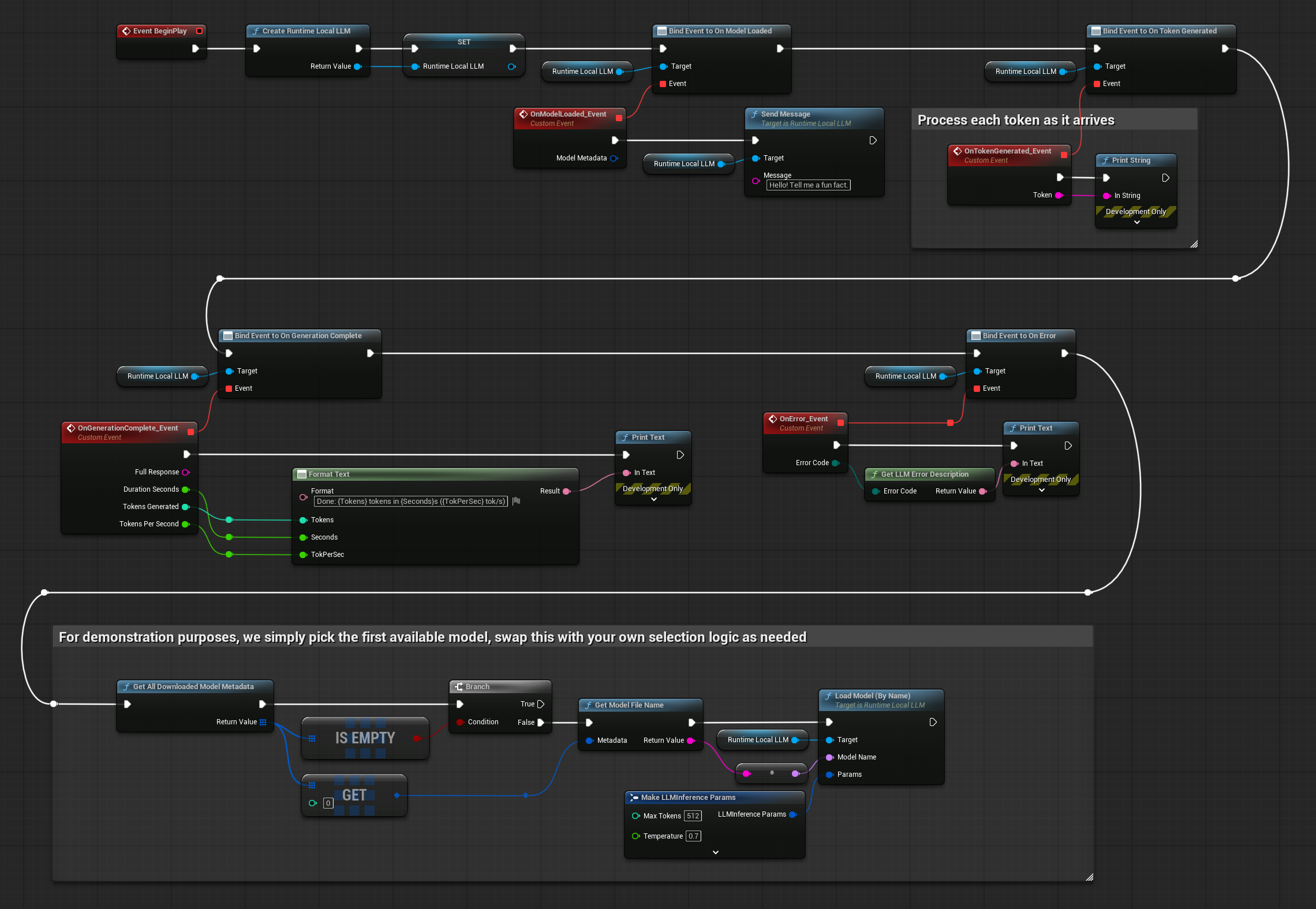

Chat Simple

Crea una instancia de LLM, carga un modelo por nombre, envía un mensaje y muestra la respuesta token por token.

- Blueprint

- C++

// Assuming "this" is an AActor with a UPROPERTY() URuntimeLocalLLM* LLM member

// Note: Callback functions (OnModelReady, OnToken, OnComplete, OnLLMError) must be marked as UFUNCTION() in your header file

void AMyActor::BeginPlay()

{

Super::BeginPlay();

LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();

LLM->OnModelLoadedNative.AddUObject(this, &AMyActor::OnModelReady);

LLM->OnTokenGeneratedNative.AddUObject(this, &AMyActor::OnToken);

LLM->OnGenerationCompleteNative.AddUObject(this, &AMyActor::OnComplete);

LLM->OnErrorNative.AddUObject(this, &AMyActor::OnLLMError);

FLLMInferenceParams Params;

Params.MaxTokens = 512;

Params.Temperature = 0.7f;

Params.SystemPrompt = TEXT("You are a helpful assistant.");

TArray<FLLMModelMetadata> DownloadedModels = URuntimeLLMLibrary::GetAllDownloadedModelMetadata();

// For demonstration purposes, we simply pick the first available model, swap this with your own selection logic as needed

if (DownloadedModels.Num() > 0)

{

const FLLMModelMetadata& Model = DownloadedModels[0]; // Select the first available model

FString ModelFileName = URuntimeLLMLibrary::GetModelFileName(Model);

LLM->LoadModelByName(FName(*ModelFileName), Params);

}

}

void AMyActor::OnModelReady(const FLLMModelMetadata& Metadata)

{

UE_LOG(LogTemp, Log, TEXT("Model ready: %s"), *Metadata.ModelDisplayName);

LLM->SendMessage(TEXT("Hello! Tell me a fun fact."));

}

void AMyActor::OnToken(const FString& Token)

{

// Handle each token as it's generated (e.g. append to a string, update UI, stream to audio, etc)

UE_LOG(LogTemp, Log, TEXT("%s"), *Token);

}

void AMyActor::OnComplete(const FString& FullResponse, float Duration, int32 Tokens, float TPS)

{

UE_LOG(LogTemp, Log, TEXT("Done: %d tokens in %.1fs (%.1f tok/s)"), Tokens, Duration, TPS);

}

void AMyActor::OnLLMError(ELLMErrorCode ErrorCode)

{

FText Desc = URuntimeLLMUtils::GetLLMErrorDescription(ErrorCode);

UE_LOG(LogTemp, Error, TEXT("LLM Error: %s"), *Desc.ToString());

}

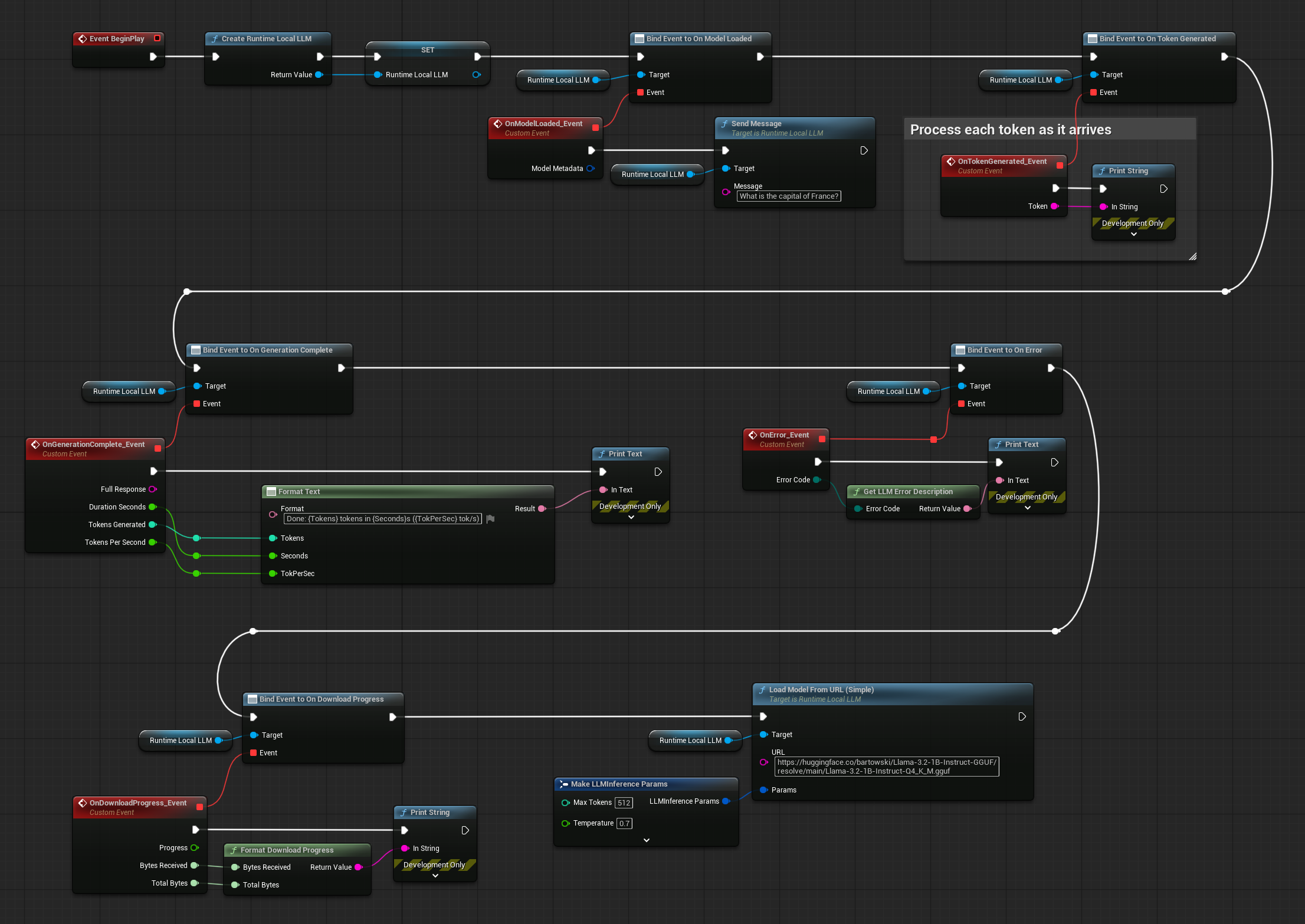

Descargar, Cargar y Chatear

Descargar un modelo desde una URL en tiempo de ejecución, cargarlo e iniciar una conversación. La descarga se omite si el modelo ya existe en el disco.

- Blueprint

- C++

// Assuming "this" is an AActor with a UPROPERTY() URuntimeLocalLLM* LLM member

// Note: Callback functions (OnProgress, OnModelReady, OnToken, OnComplete, OnLLMError) must be marked as UFUNCTION() in your header file

void AMyActor::BeginPlay()

{

Super::BeginPlay();

LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();

LLM->OnDownloadProgressNative.AddUObject(this, &AMyActor::OnProgress);

LLM->OnModelLoadedNative.AddUObject(this, &AMyActor::OnModelReady);

LLM->OnTokenGeneratedNative.AddUObject(this, &AMyActor::OnToken);

LLM->OnGenerationCompleteNative.AddUObject(this, &AMyActor::OnComplete);

LLM->OnErrorNative.AddUObject(this, &AMyActor::OnLLMError);

FLLMInferenceParams Params;

Params.MaxTokens = 256;

LLM->LoadModelFromURLSimple(

TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf"), Params);

}

void AMyActor::OnProgress(float Progress, int64 BytesReceived, int64 TotalBytes)

{

FString ProgressText = URuntimeLLMUtils::FormatDownloadProgress(BytesReceived, TotalBytes);

UE_LOG(LogTemp, Log, TEXT("Downloading: %s"), *ProgressText);

}

void AMyActor::OnModelReady(const FLLMModelMetadata& Metadata)

{

LLM->SendMessage(TEXT("What is the capital of France?"));

}

void AMyActor::OnToken(const FString& Token)

{

// Handle each token as it's generated (e.g. append to a string, update UI, stream to audio, etc)

UE_LOG(LogTemp, Log, TEXT("%s"), *Token);

}

void AMyActor::OnComplete(const FString& FullResponse, float Duration, int32 Tokens, float TPS)

{

UE_LOG(LogTemp, Log, TEXT("Done: %d tokens in %.1fs (%.1f tok/s)"), Tokens, Duration, TPS);

}

void AMyActor::OnLLMError(ELLMErrorCode ErrorCode)

{

FText Desc = URuntimeLLMUtils::GetLLMErrorDescription(ErrorCode);

UE_LOG(LogTemp, Error, TEXT("LLM Error: %s"), *Desc.ToString());

}

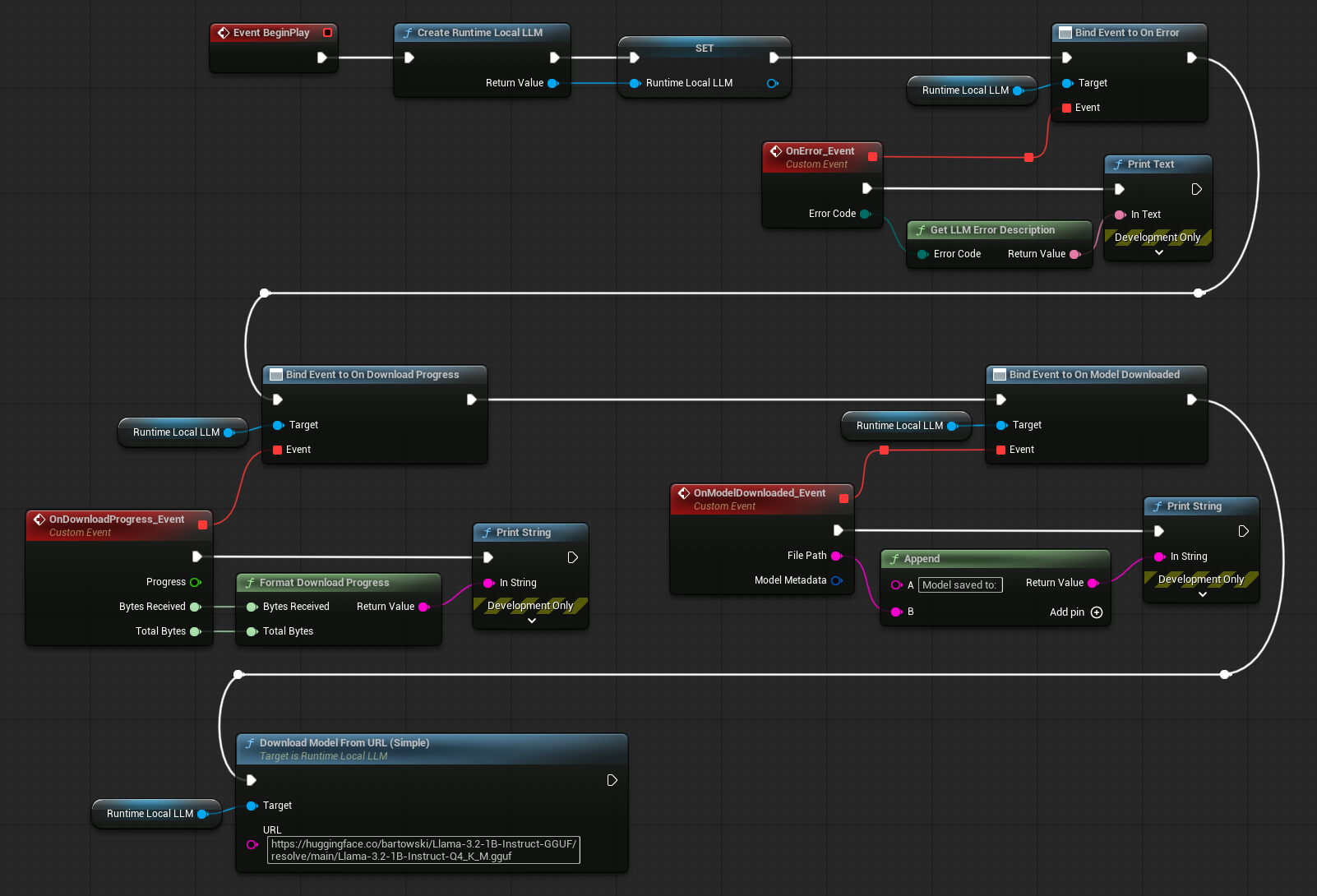

Pre-descarga de modelos

Descarga modelos en el disco sin cargarlos, útil para una pantalla de configuración o menú de carga donde los usuarios seleccionan modelos para almacenar en caché con antelación.

- Blueprint

- C++

// Assuming "this" is an AActor with a UPROPERTY() URuntimeLocalLLM* LLM member

// Note: Callback functions (OnProgress, OnDownloaded, OnLLMError) must be marked as UFUNCTION() in your header file

void AMyActor::StartPredownload()

{

LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();

LLM->OnDownloadProgressNative.AddUObject(this, &AMyActor::OnProgress);

LLM->OnModelDownloadedNative.AddUObject(this, &AMyActor::OnDownloaded);

LLM->OnErrorNative.AddUObject(this, &AMyActor::OnLLMError);

LLM->DownloadModelFromURL(

TEXT("https://huggingface.co/user/model/resolve/main/model-Q4_K_M.gguf"));

}

void AMyActor::OnProgress(float Progress, int64 BytesReceived, int64 TotalBytes)

{

FString ProgressText = URuntimeLLMUtils::FormatDownloadProgress(BytesReceived, TotalBytes);

UE_LOG(LogTemp, Log, TEXT("Downloading: %s"), *ProgressText);

}

void AMyActor::OnDownloaded(const FString& FilePath, const FLLMModelMetadata& Metadata)

{

UE_LOG(LogTemp, Log, TEXT("Model saved to: %s"), *FilePath);

// Model is now on disk - load it later with LoadModelByName or LoadModelFromFile

}

void AMyActor::OnLLMError(ELLMErrorCode ErrorCode)

{

FText Desc = URuntimeLLMUtils::GetLLMErrorDescription(ErrorCode);

UE_LOG(LogTemp, Error, TEXT("LLM Error: %s"), *Desc.ToString());

}

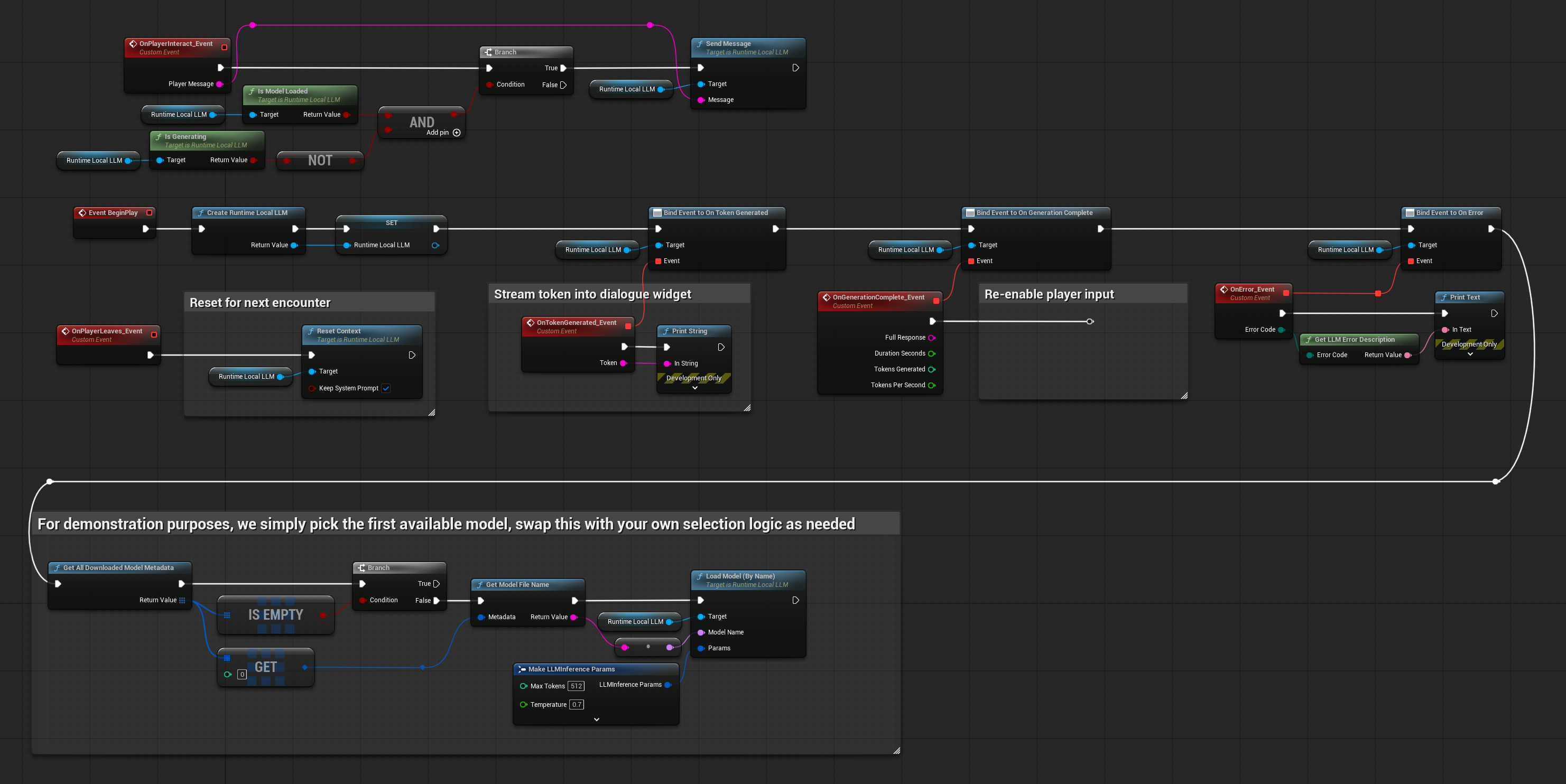

Diálogo de NPC

Un sistema básico de diálogo con NPC donde el jugador escribe un mensaje y el NPC responde. El contexto de la conversación persiste entre mensajes, por lo que el NPC recuerda interacciones anteriores.

- Blueprint

- C++

// Assuming "this" is an AActor with a UPROPERTY() URuntimeLocalLLM* LLM member

// Note: Callback functions (OnToken, OnComplete, OnLLMError) must be marked as UFUNCTION() in your header file

void ANPCActor::BeginPlay()

{

Super::BeginPlay();

LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();

LLM->OnTokenGeneratedNative.AddUObject(this, &ANPCActor::OnToken);

LLM->OnGenerationCompleteNative.AddUObject(this, &ANPCActor::OnComplete);

LLM->OnErrorNative.AddUObject(this, &AMyActor::OnLLMError);

FLLMInferenceParams Params;

Params.MaxTokens = 150;

Params.Temperature = 0.9f;

Params.SystemPrompt = TEXT(

"You are a grumpy blacksmith in a medieval village. "

"Keep responses to 2-3 sentences. Stay in character.");

// For demonstration purposes, we simply pick the first available model, swap this with your own selection logic as needed

if (DownloadedModels.Num() > 0)

{

const FLLMModelMetadata& Model = DownloadedModels[0]; // Select the first available model

FString ModelFileName = URuntimeLLMLibrary::GetModelFileName(Model);

LLM->LoadModelByName(FName(*ModelFileName), Params);

}

}

void ANPCActor::OnPlayerInteract(const FString& PlayerMessage)

{

if (LLM->IsModelLoaded() && !LLM->IsGenerating())

{

LLM->SendMessage(PlayerMessage);

}

}

void ANPCActor::OnToken(const FString& Token)

{

// Stream token into dialogue widget

}

void ANPCActor::OnComplete(const FString& FullResponse, float Duration, int32 Tokens, float TPS)

{

// Re-enable player input

}

void ANPCActor::OnPlayerLeaves()

{

// Reset for next encounter

LLM->ResetContext(true); // Keep system prompt

}