Inference Parameters

The LLM Inference Parameters struct controls how the model is loaded and how it generates text. These parameters are passed when loading a model. This page describes each parameter and its effect.

Parameter Reference

| Parameter | Type | Default | Range | Description |
|---|---|---|---|---|
| Max Tokens | int32 | 512 | 1–8192 | Maximum number of tokens to generate in a single response |
| Temperature | float | 0.7 | 0.0–2.0 | Controls randomness. 0.0 = deterministic. Higher values produce more varied, creative output |
| Top P | float | 0.9 | 0.0–1.0 | Nucleus sampling. Only the smallest set of most probable tokens whose cumulative probability reaches this value is considered |
| Top K | int32 | 40 | 0–200 | Limits selection to the K most probable tokens. 0 = disabled |
| Repeat Penalty | float | 1.1 | 0.0–3.0 | Penalizes tokens that already appear in the output. 1.0 = no penalty |
| Num GPU Layers | int32 | -1 | -1–200 | Model layers to offload to the GPU. -1 = automatic. 0 = CPU only |
| Context Size | int32 | 2048 | 128–131072 | Maximum context window in tokens. Higher values use more memory |
| System Prompt | FString | "You are a helpful assistant." | n/a | System instruction that defines the model's behavior |
| Seed | int32 | -1 | -1 or greater | Random seed for reproducible output. -1 = random |
| Num Threads | int32 | 0 | 0–128 | CPU threads used for generation. 0 = automatic |
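To make the interaction between Temperature, Top K, and Top P more concrete, here is a minimal standalone sketch of how these filters are commonly applied to a token distribution. It is not the plugin's implementation, which this page does not describe. The helper names `SoftmaxWithTemperature` and `FilterTopKTopP` are hypothetical, and the renormalization after the Top K step is an assumption about a typical sampler.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: softmax over logits divided by the temperature.
// Requires Temperature > 0; a temperature of 0 is commonly handled
// separately as greedy (argmax) decoding.
std::vector<double> SoftmaxWithTemperature(const std::vector<double>& Logits, double Temperature)
{
    assert(!Logits.empty() && Temperature > 0.0);
    const double MaxLogit = *std::max_element(Logits.begin(), Logits.end());
    std::vector<double> Probs(Logits.size());
    double Sum = 0.0;
    for (std::size_t i = 0; i < Logits.size(); ++i)
    {
        // Subtracting the maximum keeps exp() numerically stable.
        Probs[i] = std::exp((Logits[i] - MaxLogit) / Temperature);
        Sum += Probs[i];
    }
    for (double& P : Probs) { P /= Sum; }
    return Probs;
}

// Hypothetical helper: returns the token indices that survive Top K and then
// Top P, ordered from most to least probable. TopK == 0 disables the Top K step.
std::vector<std::size_t> FilterTopKTopP(const std::vector<double>& Probs, int TopK, double TopP)
{
    assert(!Probs.empty());
    std::vector<std::size_t> Order(Probs.size());
    for (std::size_t i = 0; i < Order.size(); ++i) { Order[i] = i; }
    std::stable_sort(Order.begin(), Order.end(),
        [&Probs](std::size_t A, std::size_t B) { return Probs[A] > Probs[B]; });

    // Top K: keep only the K most probable candidates.
    if (TopK > 0 && static_cast<std::size_t>(TopK) < Order.size())
    {
        Order.resize(static_cast<std::size_t>(TopK));
    }

    // Assumption: probabilities are renormalized over the Top K survivors.
    double KeptMass = 0.0;
    for (std::size_t Index : Order) { KeptMass += Probs[Index]; }

    // Top P: keep the smallest prefix whose cumulative probability reaches TopP.
    double Cumulative = 0.0;
    std::size_t Keep = 0;
    while (Keep < Order.size())
    {
        Cumulative += Probs[Order[Keep]] / KeptMass;
        ++Keep;
        if (Cumulative >= TopP) { break; }
    }
    Order.resize(Keep);
    return Order;
}
```

With probabilities `{0.5, 0.3, 0.15, 0.05}`, Top K disabled, and Top P at 0.9, the first three tokens remain as candidates. The least likely token is dropped because the first three already cover 95% of the probability mass.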

Usage

- Blueprint

- C++

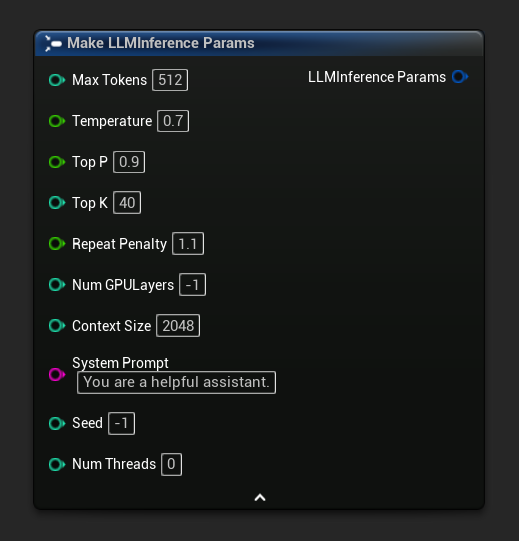

Inference parameters appear as a struct pin on the load and async nodes. Break the struct to set individual values:

To get a default set of parameters as a starting point, use Get Default Inference Params:

```cpp
// Creative writing
FLLMInferenceParams CreativeParams;
CreativeParams.MaxTokens = 1024;
CreativeParams.Temperature = 1.2f;
CreativeParams.TopP = 0.95f;
CreativeParams.TopK = 80;
CreativeParams.RepeatPenalty = 1.2f;
CreativeParams.SystemPrompt = TEXT("You are a creative storyteller.");

// Factual / deterministic
FLLMInferenceParams FactualParams;
FactualParams.MaxTokens = 256;
FactualParams.Temperature = 0.1f;
FactualParams.TopP = 0.5f;
FactualParams.TopK = 10;
FactualParams.SystemPrompt = TEXT("Answer questions concisely and accurately.");

// Mobile-optimized
FLLMInferenceParams MobileParams;
MobileParams.MaxTokens = 128;
MobileParams.ContextSize = 1024;
MobileParams.NumGPULayers = 0;
MobileParams.NumThreads = 4;
MobileParams.SystemPrompt = TEXT("You are a helpful assistant. Keep responses brief.");

// Get defaults programmatically
FLLMInferenceParams DefaultParams = URuntimeLocalLLM::GetDefaultInferenceParams();
```
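When parameter values come from user settings or config files, it can help to clamp them to the ranges listed in the reference table before loading a model. The sketch below uses a plain C++ stand-in struct, `FInferenceParamsSketch`, so that it compiles outside Unreal. The struct, the `Seed` field name, and the `ClampToDocumentedRanges` helper are assumptions for illustration, not part of the plugin API, and this page does not say whether the plugin already clamps values internally.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>

// Plain C++ stand-in for the plugin struct (hypothetical). Field names follow
// the examples above; `Seed` is an assumed name. Defaults mirror the table.
struct FInferenceParamsSketch
{
    std::int32_t MaxTokens = 512;
    float Temperature = 0.7f;
    float TopP = 0.9f;
    std::int32_t TopK = 40;
    float RepeatPenalty = 1.1f;
    std::int32_t NumGPULayers = -1;
    std::int32_t ContextSize = 2048;
    std::string SystemPrompt = "You are a helpful assistant.";
    std::int32_t Seed = -1;
    std::int32_t NumThreads = 0;
};

// Small clamp helper; inside Unreal, FMath::Clamp serves the same purpose.
template <typename T>
T ClampTo(T Value, T Low, T High)
{
    assert(Low <= High);
    return std::max(Low, std::min(Value, High));
}

// Hypothetical helper: clamps every numeric field to its documented range.
FInferenceParamsSketch ClampToDocumentedRanges(FInferenceParamsSketch Params)
{
    Params.MaxTokens = ClampTo<std::int32_t>(Params.MaxTokens, 1, 8192);
    Params.Temperature = ClampTo<float>(Params.Temperature, 0.0f, 2.0f);
    Params.TopP = ClampTo<float>(Params.TopP, 0.0f, 1.0f);
    Params.TopK = ClampTo<std::int32_t>(Params.TopK, 0, 200);
    Params.RepeatPenalty = ClampTo<float>(Params.RepeatPenalty, 0.0f, 3.0f);
    Params.NumGPULayers = ClampTo<std::int32_t>(Params.NumGPULayers, -1, 200);
    Params.ContextSize = ClampTo<std::int32_t>(Params.ContextSize, 128, 131072);
    Params.Seed = std::max<std::int32_t>(Params.Seed, -1); // no documented upper bound
    Params.NumThreads = ClampTo<std::int32_t>(Params.NumThreads, 0, 128);
    return Params;
}
```

The function takes and returns the struct by value, so the caller's original settings stay untouched and the two can be compared to log which fields were adjusted.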

Platform Recommendations

Mobile / VR (Android, iOS, Meta Quest)

- Context Size: 1024–2048
- Num GPU Layers: 0 (CPU only) unless the device has confirmed GPU compute support
- Max Tokens: below 256 to keep interactions responsive
- Num Threads: 2–4 depending on the device

Desktop (Windows, Mac, Linux)

- Context Size: 2048–8192 for most conversations
- Num GPU Layers: -1 (auto) to take advantage of GPU acceleration when available
- Num Threads: 0 (auto)
- Max Tokens: 512–2048 for longer responses
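The recommendations above could be collected into a single preset function, sketched below in plain C++. `ETargetClass`, `FPlatformParamsSketch`, and `MakePlatformParams` are hypothetical names, and the specific values are just one choice from within each recommended range. In an Unreal project the branch could be selected at compile time with the engine's platform macros, such as `PLATFORM_ANDROID` or `PLATFORM_IOS`, instead of an enum.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical grouping of the two platform classes described above.
enum class ETargetClass { MobileOrVR, Desktop };

// Hypothetical stand-in holding only the fields the recommendations mention.
struct FPlatformParamsSketch
{
    std::int32_t MaxTokens;
    std::int32_t ContextSize;
    std::int32_t NumGPULayers;
    std::int32_t NumThreads;
};

// Picks one value from each recommended range for the given platform class.
FPlatformParamsSketch MakePlatformParams(ETargetClass Target)
{
    FPlatformParamsSketch Params{};
    if (Target == ETargetClass::MobileOrVR)
    {
        Params.MaxTokens = 128;     // below 256 to stay responsive
        Params.ContextSize = 1024;  // lower end of 1024-2048
        Params.NumGPULayers = 0;    // CPU only unless GPU compute is confirmed
        Params.NumThreads = 4;      // upper end of 2-4
    }
    else
    {
        Params.MaxTokens = 1024;    // within 512-2048
        Params.ContextSize = 4096;  // within 2048-8192
        Params.NumGPULayers = -1;   // automatic GPU offload
        Params.NumThreads = 0;      // automatic thread count
    }
    // Sanity check: the reply budget should fit inside the context window.
    assert(Params.MaxTokens <= Params.ContextSize);
    return Params;
}
```

The returned values would then be copied into the corresponding fields of the parameter struct before loading the model.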