Demo Projects

To help you get started quickly with Runtime MetaHuman Lip Sync, two ready-to-use demo projects are available. Both are built with Unreal Engine 5.6+, are Blueprint-only, and run cross-platform on Windows, Mac, Linux, iOS, Android, and Android-based platforms (including Meta Quest).

Available Demo Projects

- AI Conversational NPC / Interactive Avatar

- Basic Lip Sync Demo

AI Conversational NPC / Interactive Avatar

A full AI conversational avatar workflow that combines speech recognition, an AI chatbot (LLM), text-to-speech, and audio playback with real-time lip sync, all running together in a single project. It suits a wide range of use cases, including games, interactive kiosks, virtual production, museum installations, digital assistants, and training simulations.

Pipeline Overview

🎤 Microphone → Speech Recognition → 💬 LLM Chatbot → 🔊 Text-to-Speech → 👄 Lip Sync + Playback
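The flow above can be pictured as a chain of stages, each consuming the output of the previous one. The following is a minimal conceptual sketch in Python, not the demo's Blueprint implementation: every name in it (`Turn`, `run_turn`, and the stage callables) is hypothetical, and the stages are stubs standing in for the actual plugins.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Turn:
    """One conversational turn as it moves through the pipeline."""
    user_text: str
    reply_text: str
    reply_audio: bytes


def run_turn(
    mic_audio: bytes,
    transcribe: Callable[[bytes], str],            # speech recognition stage
    chat: Callable[[str], str],                    # LLM chatbot stage
    synthesize: Callable[[str], bytes],            # text-to-speech stage
    play_with_lip_sync: Callable[[bytes], None],   # lip sync + playback stage
) -> Turn:
    """Run one turn: microphone audio in, spoken and animated reply out."""
    user_text = transcribe(mic_audio)
    reply_text = chat(user_text)
    reply_audio = synthesize(reply_text)
    play_with_lip_sync(reply_audio)
    return Turn(user_text, reply_text, reply_audio)


# Stub stages (hypothetical) so the sketch runs without any plugin installed.
played: list[bytes] = []
turn = run_turn(
    mic_audio=b"\x00\x01",
    transcribe=lambda audio: "hello",
    chat=lambda text: f"You said: {text}",
    synthesize=lambda text: text.encode("utf-8"),
    play_with_lip_sync=played.append,
)
print(turn.reply_text)
```

Because each stage is passed in as a callable, swapping one provider for another (for example a local LLM for an external one) changes a single argument and leaves the rest of the chain untouched.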

Videos

Quick Preview (~30 sec)

A short showcase of the demo in action.

Full Walkthrough

A detailed walkthrough covering setup, configuration, and the full conversational pipeline.

Downloads

Required & Optional Plugins

The demo project is modular - you only need the plugins for the providers you want to use.

| Plugin | Purpose | Required? |
|---|---|---|
| Runtime MetaHuman Lip Sync | Lip sync animation | ✅ Always |
| Runtime Audio Importer | Audio capture & processing | ✅ Always |
| Runtime Speech Recognizer | Offline speech recognition (whisper.cpp) | ✅ Always |
| Runtime AI Chatbot Integrator | External LLMs (OpenAI, Claude, DeepSeek, Gemini, Grok, Ollama) and/or external TTS (OpenAI, ElevenLabs) | 🔶 Optional |
| Runtime Local LLM | Local LLM inference via llama.cpp (GGUF models such as Llama, Mistral, Gemma, etc.) | 🔶 Optional |
| Runtime Text To Speech | Local TTS via Piper and Kokoro | 🔶 Optional |

While each plugin above is individually optional, you need at least one LLM provider and at least one TTS provider for the demo to work. Mix and match freely (e.g. local LLM + ElevenLabs TTS, or OpenAI LLM + local TTS).
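The "at least one LLM provider and at least one TTS provider" rule can be expressed as a small check. This is an illustrative sketch only: the plugin-to-capability mapping is taken from the table above, while the function name `missing_capabilities` is hypothetical and not part of any plugin.

```python
# Which optional plugins can act as an LLM provider or a TTS provider,
# according to the plugin table above.
LLM_PLUGINS = {"Runtime AI Chatbot Integrator", "Runtime Local LLM"}
TTS_PLUGINS = {"Runtime AI Chatbot Integrator", "Runtime Text To Speech"}


def missing_capabilities(installed: set[str]) -> list[str]:
    """Return the capabilities ("LLM", "TTS") that no installed plugin covers."""
    missing = []
    if not installed & LLM_PLUGINS:
        missing.append("LLM")
    if not installed & TTS_PLUGINS:
        missing.append("TTS")
    return missing


# Local LLM + local TTS covers both capabilities.
print(missing_capabilities({"Runtime Local LLM", "Runtime Text To Speech"}))
# A local LLM on its own still needs a TTS provider.
print(missing_capabilities({"Runtime Local LLM"}))
```

Note that Runtime AI Chatbot Integrator appears in both sets, so installing it alone is enough to satisfy both requirements.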

Modular Architecture

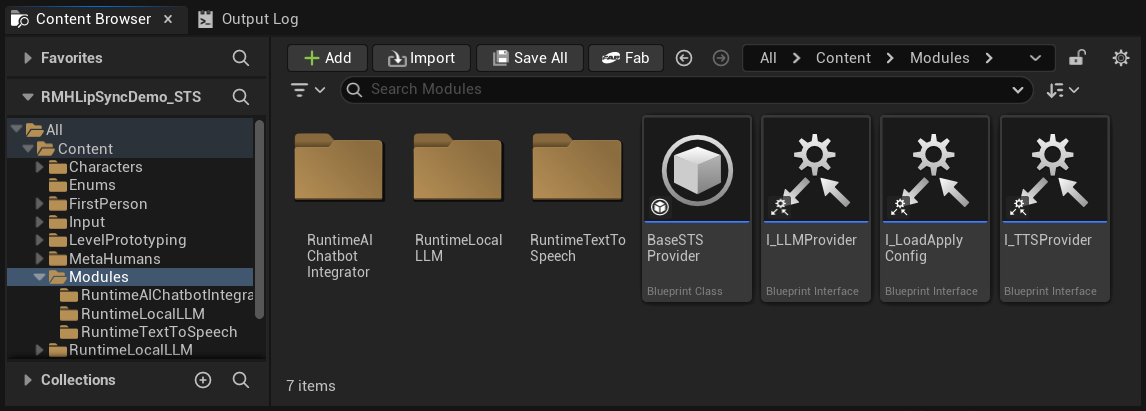

In the Content folder you'll find a Modules folder that contains three subfolders:

    Content/
    └── Modules/
        ├── RuntimeAIChatbotIntegrator/   ← External LLMs and/or external TTS
        ├── RuntimeLocalLLM/              ← Local LLM via llama.cpp
        └── RuntimeTextToSpeech/          ← Local TTS via Piper/Kokoro

If you didn't acquire one (or more) of the optional plugins, simply delete the corresponding folder(s). The demo project's base assets (game instance, widgets, etc.) do not reference these modules directly, so deleting them won't cause asset reference errors. The configuration UI will automatically hide any provider whose folder is missing.

This modularity applies only to LLM and TTS providers. Speech Recognition (Runtime Speech Recognizer) and Lip Sync (Runtime MetaHuman Lip Sync) are part of the base demo project and are always required.
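To illustrate the idea of hiding providers whose folder is missing, here is a rough Python sketch that derives the available providers from the folders present on disk. The demo itself is Blueprint-only and its actual discovery mechanism is not described here, so treat this purely as a model of the behavior; `available_providers` and `MODULE_CAPABILITIES` are hypothetical names, while the folder names come from the layout above.

```python
from pathlib import Path

# Folder names from the Content/Modules layout; the capabilities each one
# provides follow the plugin table (assumed mapping, for illustration).
MODULE_CAPABILITIES = {
    "RuntimeAIChatbotIntegrator": ("LLM", "TTS"),
    "RuntimeLocalLLM": ("LLM",),
    "RuntimeTextToSpeech": ("TTS",),
}


def available_providers(content_dir: Path) -> dict[str, list[str]]:
    """List the provider modules per capability whose folder exists."""
    modules_dir = content_dir / "Modules"
    providers: dict[str, list[str]] = {"LLM": [], "TTS": []}
    for module, capabilities in MODULE_CAPABILITIES.items():
        if (modules_dir / module).is_dir():
            for capability in capabilities:
                providers[capability].append(module)
    return providers


# Example: inspect a project's Content folder (path is a placeholder).
print(available_providers(Path("Content")))
```

A configuration UI built on this result would only offer the entries returned for each capability, which mirrors how deleting a module folder removes that provider from the selection.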

On first launch, Unreal may ask whether to disable any missing optional plugins - click Yes. Make sure you've also deleted the corresponding Content/Modules/ folder (see above).

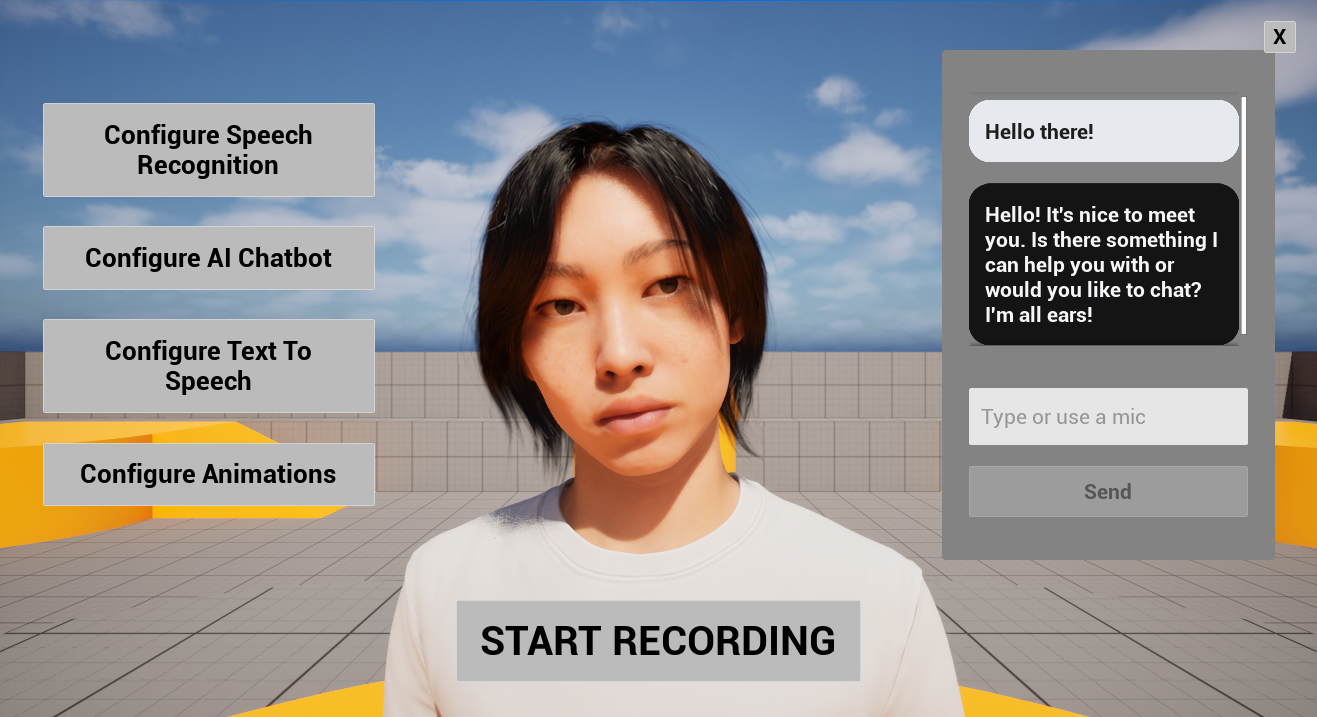

Demo Project Layout

The user interface shown below is built entirely with UMG (Unreal Motion Graphics) and is intended purely to demonstrate the pipeline - speech recognition → LLM → TTS → lip sync. You're free to restyle or replace it to match your project's visual design, control scheme, or platform (VR/AR, mobile, console, kiosk, etc.). If certain widgets aren't needed in your use case, you can also simply hide them (e.g. set their visibility to Collapsed or Hidden).

| Area | What's there |
|---|---|
| Center | The MetaHuman character. |
| Left side | Four configuration buttons (Speech Recognition, AI Chatbot, Text To Speech, Animations), described in detail below. |
| Center bottom | A Start Recording button. Click it to begin a voice conversation: your microphone audio is captured and transcribed, the text is sent to the LLM, and the response is synthesized via TTS and played back with lip sync, fully hands-free. |
| Right center | A conversation history widget showing the full back-and-forth between you and the AI (both user and assistant messages). It also includes a text input field, so you can type messages directly without using speech recognition. This is useful for testing, for accessibility, or when a microphone isn't available. |

You can mix both input modes freely in the same session - speak some messages, type others.
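Mixing input modes works naturally if spoken and typed messages end up in the same history. The sketch below models that idea in Python; it is not taken from the demo's Blueprints, and all names (`Message`, `Conversation`, `as_chat_payload`) are hypothetical. The role/content shape of the payload is the one commonly used by chat-style LLM APIs, assumed here for illustration.

```python
from dataclasses import dataclass, field
from typing import Literal

Role = Literal["user", "assistant"]
Source = Literal["speech", "typed", "llm"]


@dataclass
class Message:
    role: Role
    text: str
    source: Source  # how the text was produced; not sent to the LLM


@dataclass
class Conversation:
    messages: list[Message] = field(default_factory=list)

    def add_user_message(self, text: str, source: Source) -> None:
        """Append a user message, whether transcribed or typed."""
        self.messages.append(Message("user", text, source))

    def add_assistant_message(self, text: str) -> None:
        """Append the LLM's reply."""
        self.messages.append(Message("assistant", text, "llm"))

    def as_chat_payload(self) -> list[dict[str, str]]:
        """Role/content pairs in conversation order."""
        return [{"role": m.role, "content": m.text} for m in self.messages]


conversation = Conversation()
conversation.add_user_message("Hi there", source="speech")
conversation.add_assistant_message("Hello! How can I help?")
conversation.add_user_message("Tell me a joke", source="typed")
print(conversation.as_chat_payload())
```

The input source is kept only as metadata, so from the LLM's point of view a typed message and a transcribed one are indistinguishable.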

Configuration Buttons

The four configuration buttons on the left open dedicated panels for each part of the pipeline:

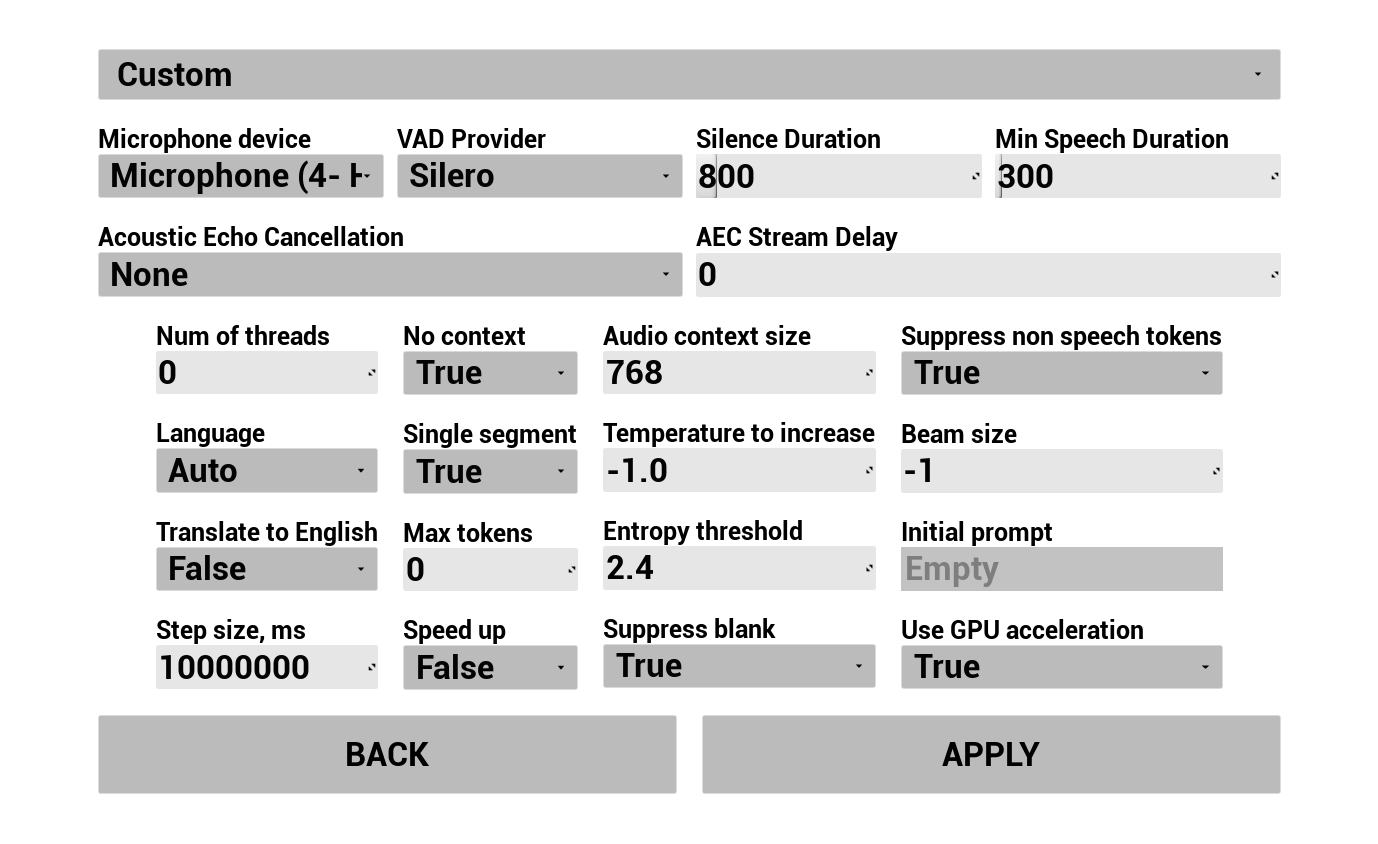

1. Configure Speech Recognition

Configure how the user's voice is captured and transcribed:

- Select language

- Adjust speech recognition parameters (Whisper model settings)

- Configure AEC (Acoustic Echo Cancellation)

- Configure VAD (Voice Activity Detection)

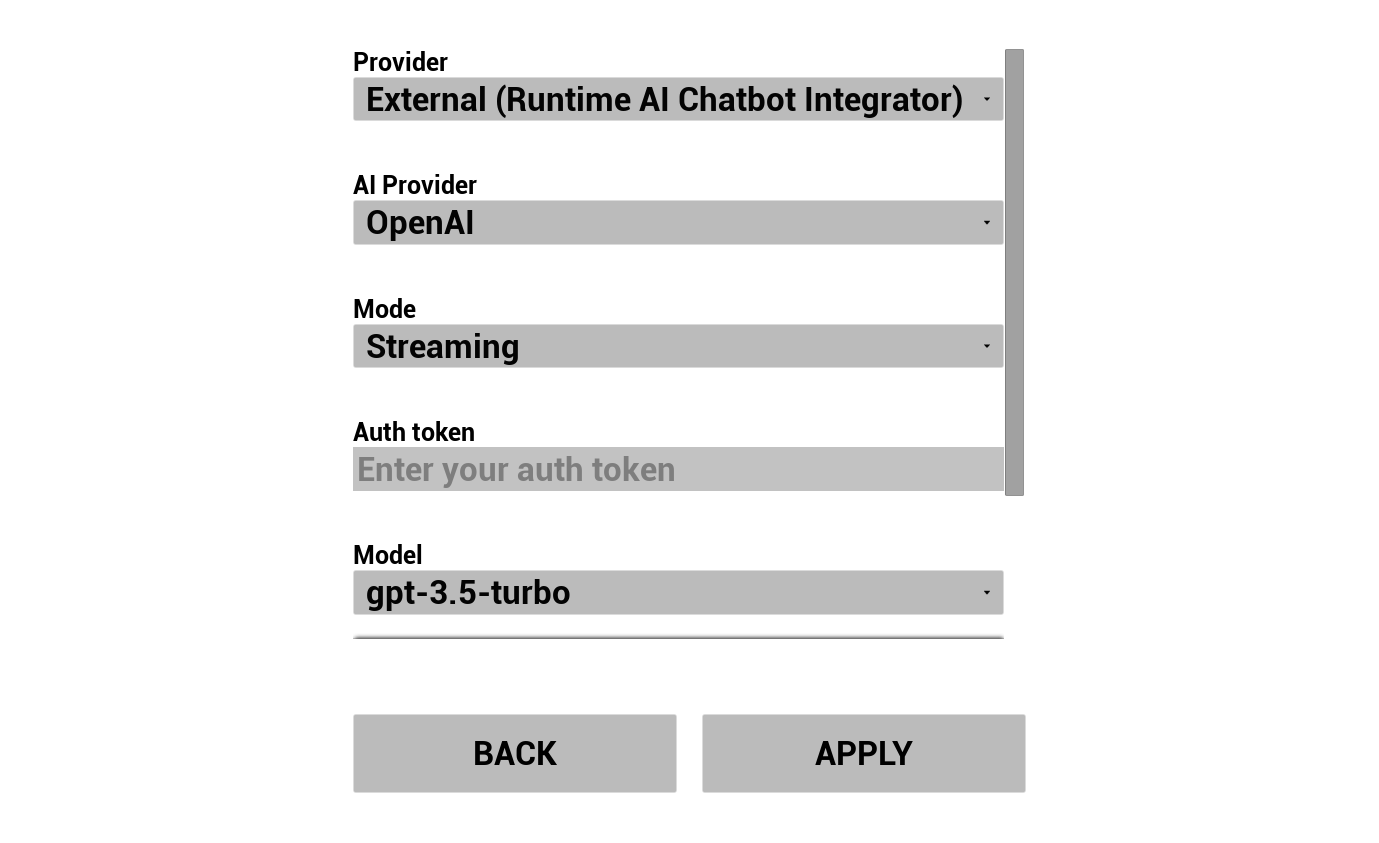

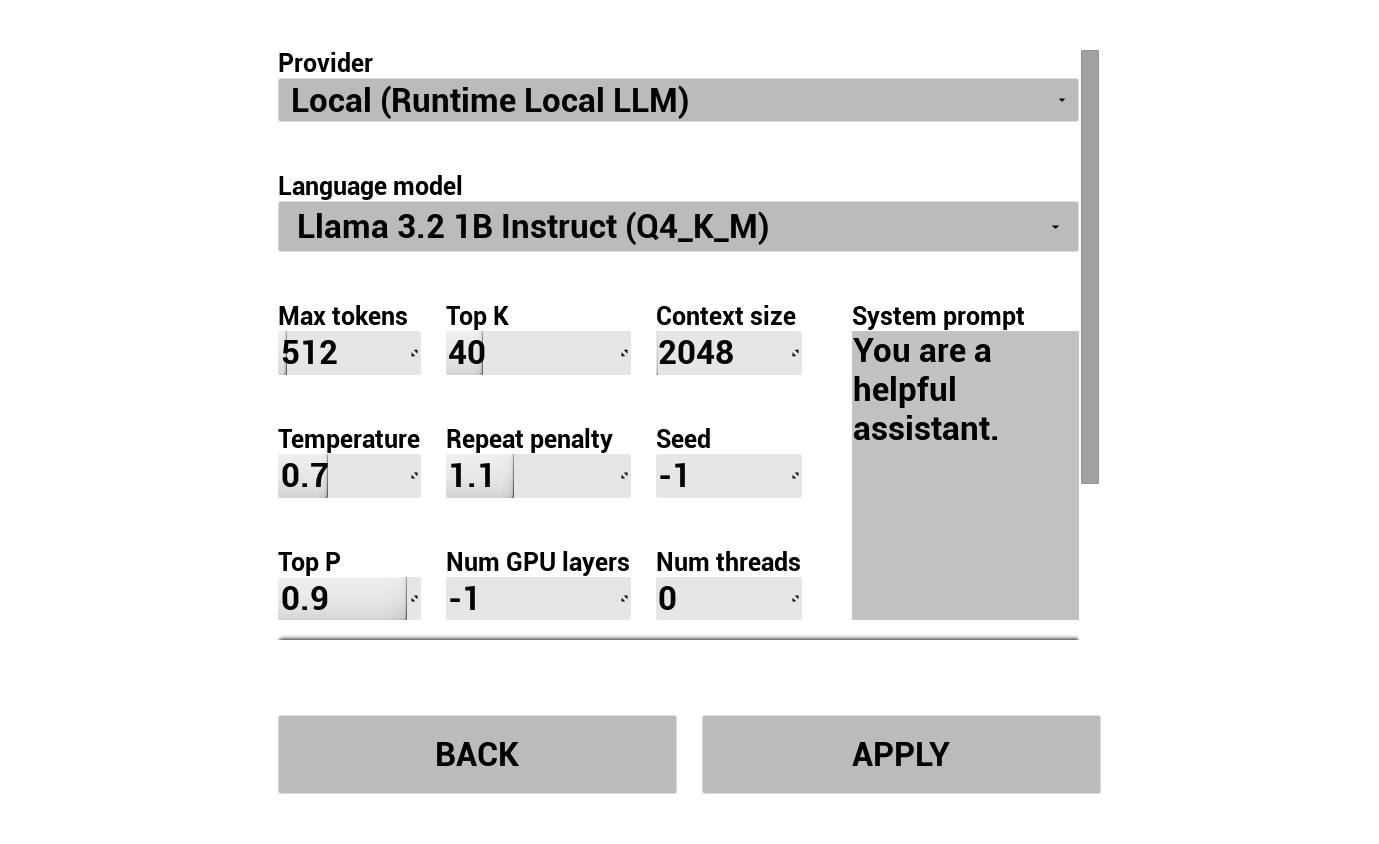

2. Configure AI Chatbot

Choose your LLM provider and configure it:

- Select provider (Runtime AI Chatbot Integrator or Runtime Local LLM)

- For external providers: auth token, model name, etc.

- For local LLM: select a GGUF model, set context size, and other inference parameters. You can also download your own GGUF model at runtime directly from the demo (e.g. by URL), and use it immediately without rebuilding the project.

The provider combobox only shows providers whose plugin module folder is present in Content/Modules/.

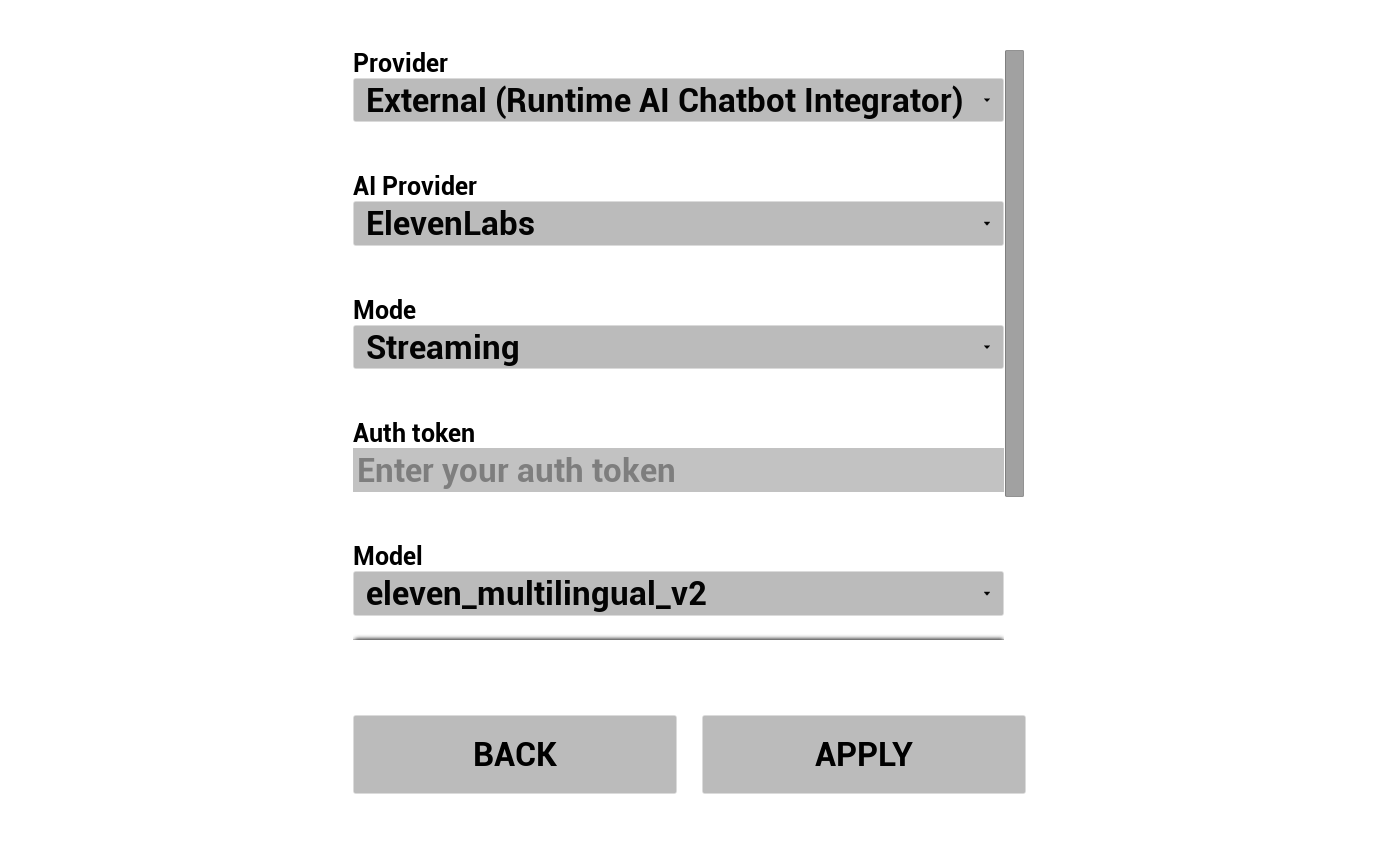

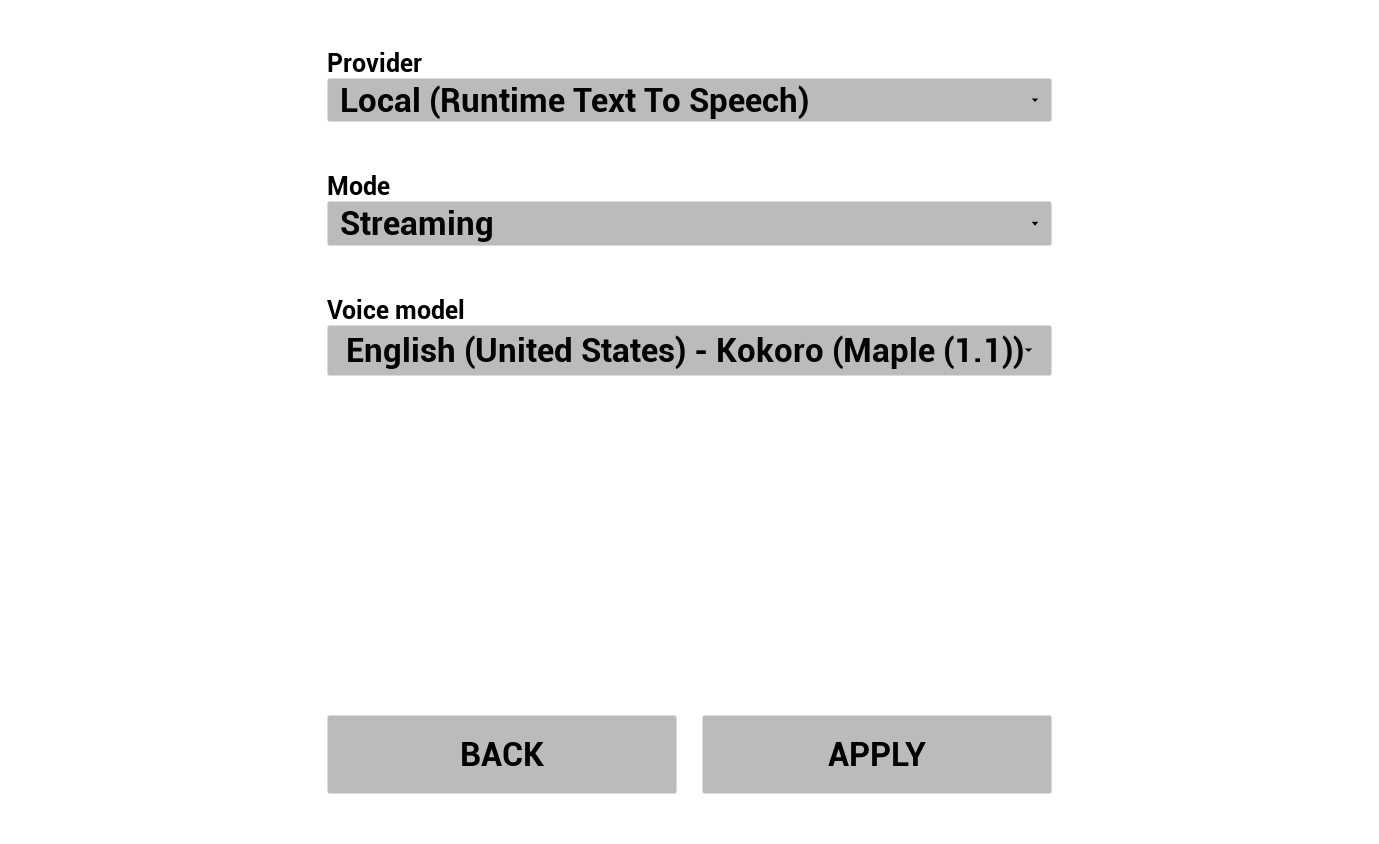

3. Configure Text To Speech

Choose your TTS provider and configure voices/models:

- Select provider (Runtime AI Chatbot Integrator for OpenAI/ElevenLabs, or Runtime Text To Speech for local Piper/Kokoro)

- Select voice/model

- Adjust provider-specific parameters

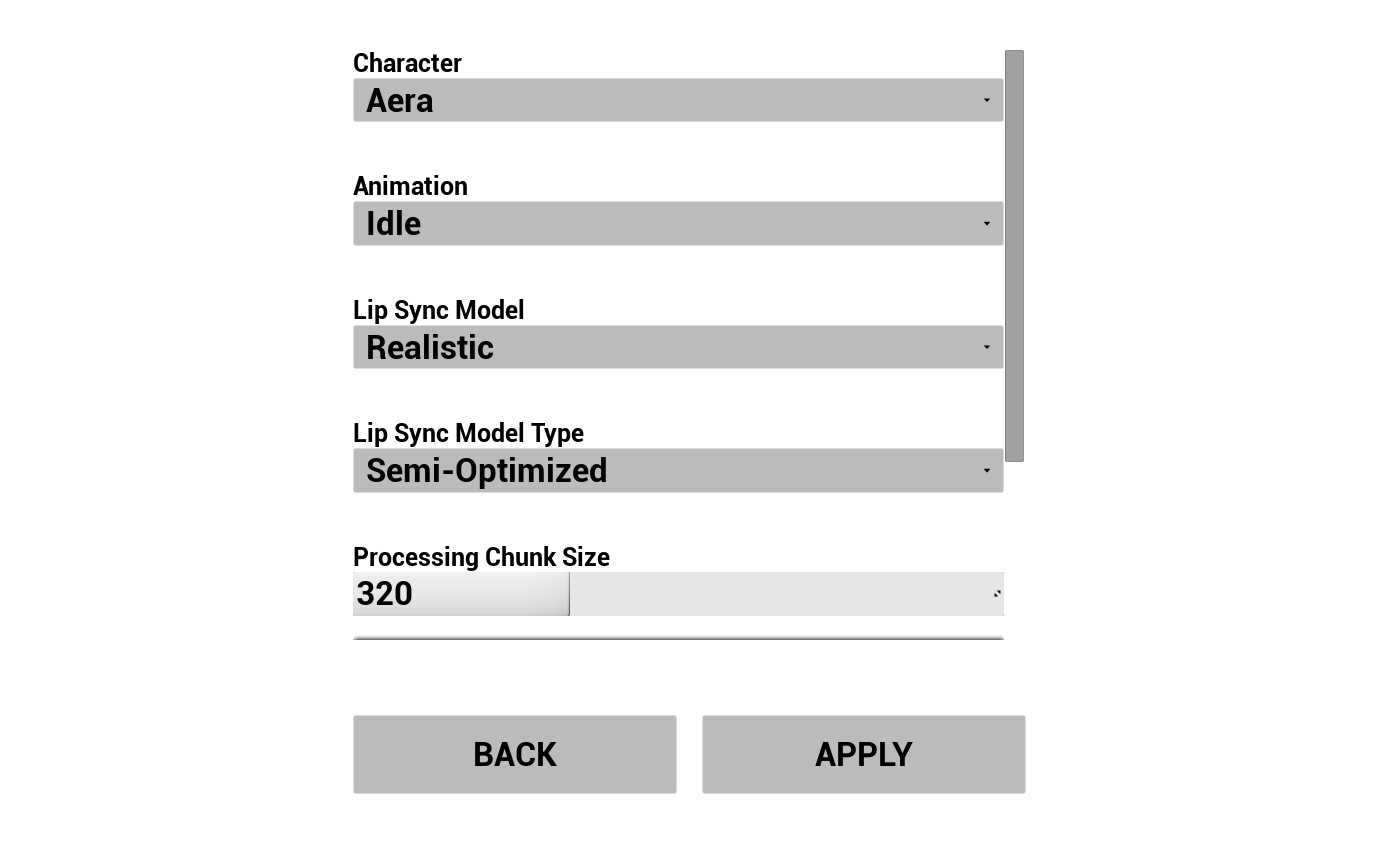

4. Configure Animations

Control the visuals of your AI avatar:

- Choose between 3 pre-downloaded MetaHuman characters (Aera, Ada, Orlando)

- Select lip sync model (Standard or Realistic)

- Select lip sync model type - Highly Optimized, Semi-Optimized, or Original (see Model Type)

- Adjust Processing Chunk Size - controls how often lip sync inference runs (see Processing Chunk Size)

- Select an idle animation to play on the MetaHuman during conversation
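As a rough guide to the Processing Chunk Size trade-off, the arithmetic below shows how chunk size relates to inference frequency. It assumes the chunk size is measured in audio samples and that audio is processed at 16 kHz; both are assumptions made for this sketch, so check the Processing Chunk Size documentation for the actual units and defaults. The function names are hypothetical.

```python
def inference_interval_ms(chunk_size_samples: int, sample_rate_hz: int = 16_000) -> float:
    """Milliseconds of audio covered by one chunk (one inference per chunk)."""
    return 1000.0 * chunk_size_samples / sample_rate_hz


def inferences_per_second(chunk_size_samples: int, sample_rate_hz: int = 16_000) -> float:
    """How many inference runs are needed per second of audio."""
    return sample_rate_hz / chunk_size_samples


# Larger chunks mean fewer inference runs per second (lower CPU load),
# at the cost of a longer delay before each lip sync update.
for chunk in (160, 480, 1600):
    print(chunk, inference_interval_ms(chunk), inferences_per_second(chunk))
```

Under these assumptions, multiplying the chunk size by ten divides the number of inference runs per second by ten, which is why increasing it helps on weaker devices.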

Pre-Configuring the Demo in the Editor

When working with the source version, you can pre-fill defaults directly in the editor so values don't need to be re-entered every run:

| What | Where |
|---|---|
| General settings (lip sync model, idle animation, character class, speech recognition, etc.) | Content/LipSyncSTSGameInstance |
| External LLM / External TTS settings (Runtime AI Chatbot Integrator) | Content/Modules/RuntimeAIChatbotIntegrator/RuntimeAIChatbotIntegrator_Provider |
| Local LLM settings (Runtime Local LLM) | Content/Modules/RuntimeLocalLLM/RuntimeLocalLLM_Provider |
| Local TTS settings (Runtime Text To Speech) | Content/Modules/RuntimeTextToSpeech/RuntimeTextToSpeech_Provider |

Cross-Platform Notes

All plugins used by the demo support Windows, Mac, Linux, iOS, Android, and Android-based platforms (including Meta Quest), so the demo project works on all of these as well. This makes it suitable for deployment across a wide variety of environments - from games and desktop kiosks to mobile apps, standalone VR headsets, and on-set virtual production setups.

For weaker devices (mobile, standalone VR), you may want to:

- Use the Standard lip sync model instead of Realistic - see the Model comparison

- Switch to Highly Optimized model type

- Increase Processing Chunk Size to reduce CPU load

- Pick smaller LLM / TTS models

See Platform-specific Configuration for additional setup steps on Android, iOS, Mac, and Linux.

Bringing Your Own Character

The demo project ships with three sample MetaHuman characters (Aera, Ada, Orlando), but you can import your own MetaHuman and use it in the demo.

📺 Video tutorial: Adding a Custom MetaHuman Character to the Demo Project

The Runtime MetaHuman Lip Sync plugin itself supports many character systems beyond MetaHumans, such as ARKit-based characters, Daz Genesis 8/9, Reallusion CC3/CC4, Mixamo, and ReadyPlayerMe (see the Custom Character Setup Guide). Whether you're building a game NPC, a virtual presenter, a kiosk attendant, or a digital human for virtual production, the plugin adapts to your character pipeline.

Basic Lip Sync Demo

A simpler demo project that focuses purely on the lip sync feature itself, without the full AI conversational workflow. It is a good fit if you just want to see lip sync in action with various audio sources.

Downloads

What's Included

This demo showcases the basic lip sync workflows:

- Microphone input - real-time lip sync from live audio

- Audio file playback - lip sync from imported audio files

- Text-to-Speech - lip sync driven by synthesized speech

Required & Optional Plugins

| Plugin | Purpose | Required? |
|---|---|---|
| Runtime MetaHuman Lip Sync | Lip sync animation | ✅ Required |
| Runtime Audio Importer | Audio import & capture | ✅ Required |
| Runtime Text To Speech | Local TTS for the TTS demo scene | 🔶 Optional |
| Runtime AI Chatbot Integrator | External TTS providers (OpenAI, ElevenLabs) | 🔶 Optional |

Notes for the Standard Lip Sync Model

If you plan to use the Standard Model (instead of Realistic) in either demo project, you'll need to install the Standard Lip Sync Extension plugin. See Standard Model Extension for installation instructions.

Need Help?

If you run into any issues setting up or running the demo projects, feel free to reach out.

For custom development requests (e.g. extending the demo with your own logic, adapting it for a specific platform or character pipeline), contact [email protected].