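How to use the plugin

This guide covers the full runtime API: creating an LLM instance, loading models, sending messages, downloading models at runtime, managing state, and utility functions.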

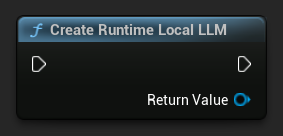

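Creating an LLM instance

Start by creating a Runtime Local LLM object. Keep a reference to it (for example, as a variable in Blueprints or as a UPROPERTY in C++) so that it is not garbage collected prematurely.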

- Blueprint

- C++

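// Declare the member in your class so the object stays referenced

UPROPERTY()

URuntimeLocalLLM* LLM;

// Create the instance at runtime

LLM = URuntimeLocalLLM::CreateRuntimeLocalLLM();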

Loading a model

A model must be loaded before you can send messages. The plugin provides several loading methods to suit your workflow.

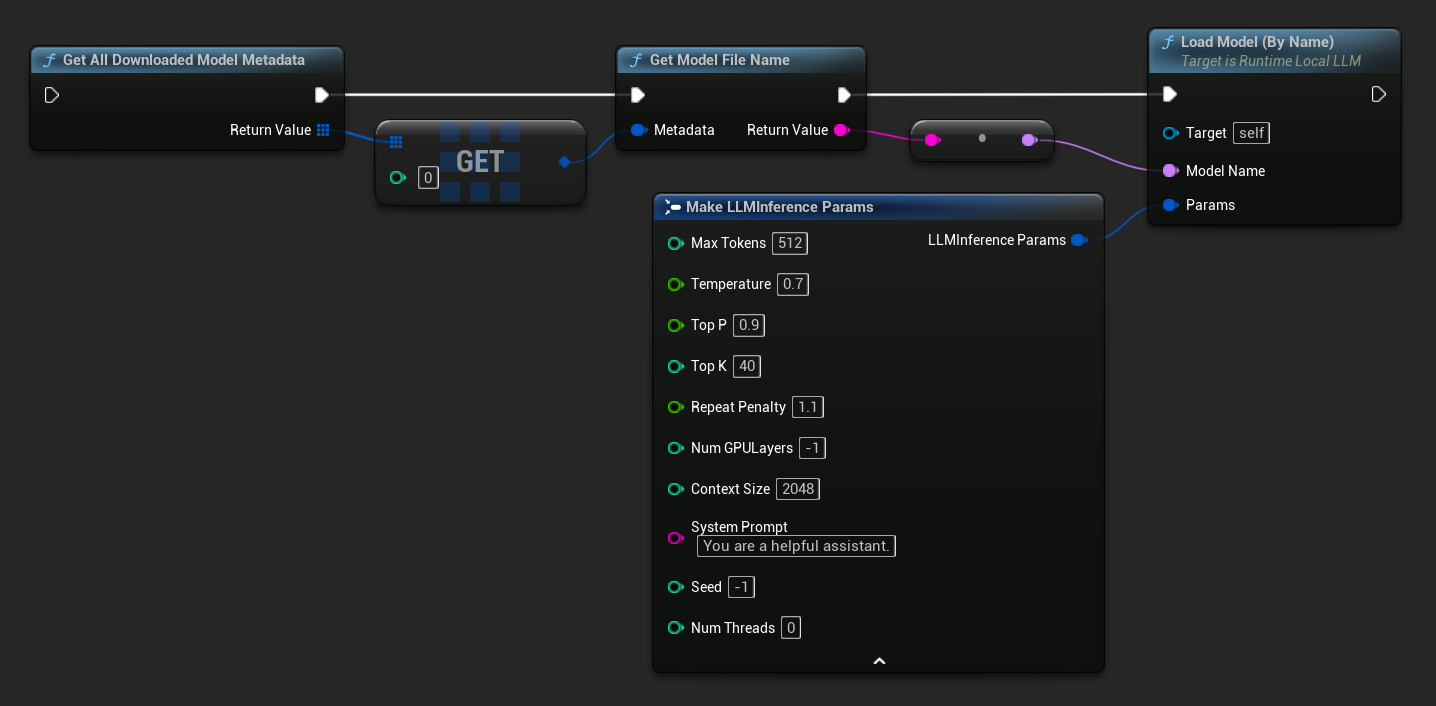

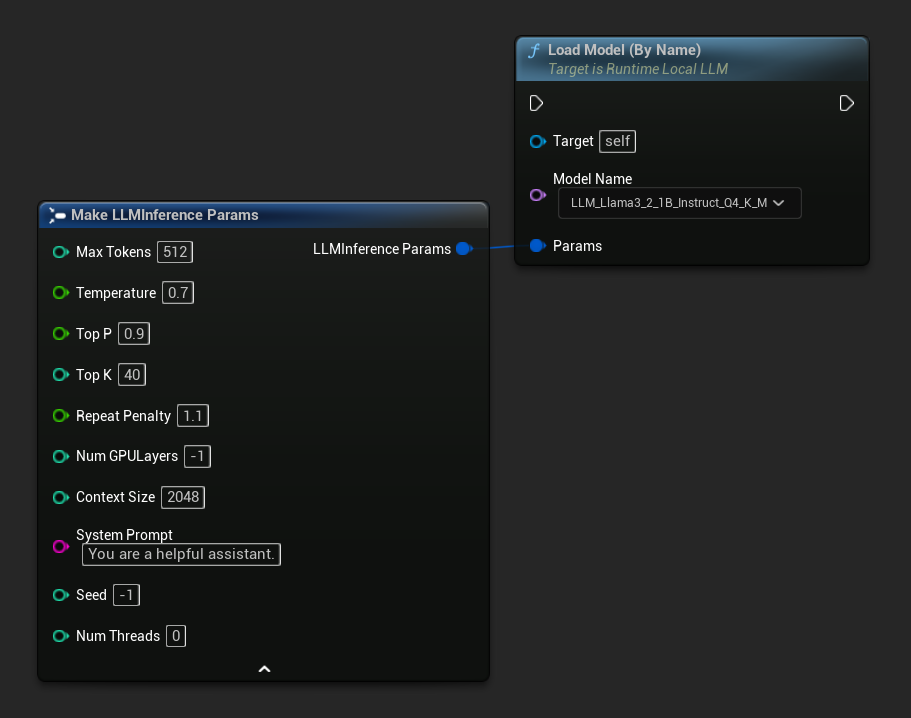

Loading by name

If you manage models through the editor settings panel, use Load Model (By Name).

- Blueprint

- C++

- UE 5.3 and earlier

- UE 5.4+

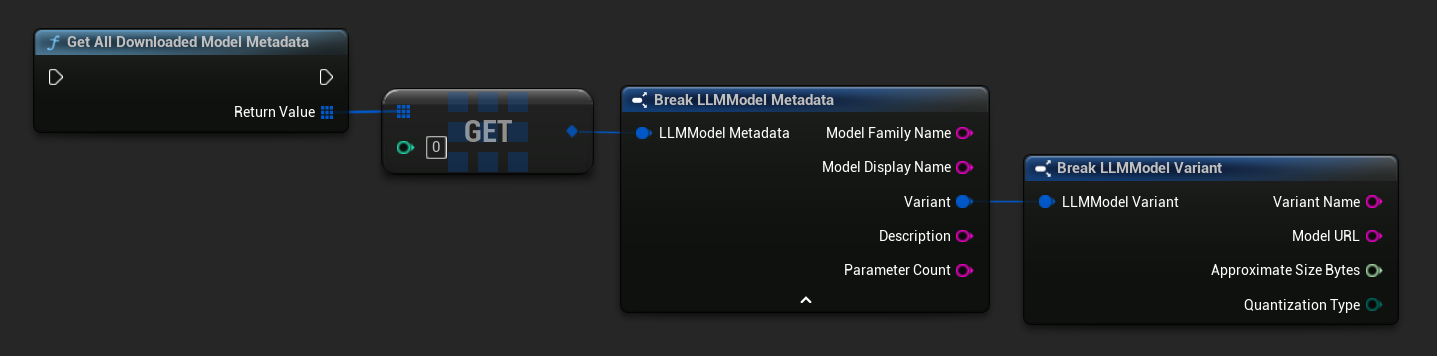

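In UE 5.3 and earlier the dropdown does not appear, so you need to look up the available models manually. Call Get All Downloaded Model Metadata, take the element at index 0 (or whichever model you need), pass it to Get Model File Name to obtain the name string, and pass that string to Load Model (By Name).

In UE 5.4 and later, Load Model (By Name) shows a dropdown listing every model on disk; simply pick the one you want to load.

In C++, use GetAllDownloadedModelMetadata to get the available models, then use GetModelFileName to get the name to pass to LoadModelByName: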

FLLMInferenceParams Params;

Params.MaxTokens = 512;

Params.Temperature = 0.7f;

Params.SystemPrompt = TEXT("You are a helpful assistant.");

TArray<FLLMModelMetadata> DownloadedModels = URuntimeLLMLibrary::GetAllDownloadedModelMetadata();

if (DownloadedModels.Num() > 0)

{

const FLLMModelMetadata& Model = DownloadedModels[0]; // Select the first available model

FString ModelFileName = URuntimeLLMLibrary::GetModelFileName(Model);

LLM->LoadModelByName(FName(*ModelFileName), Params);

}

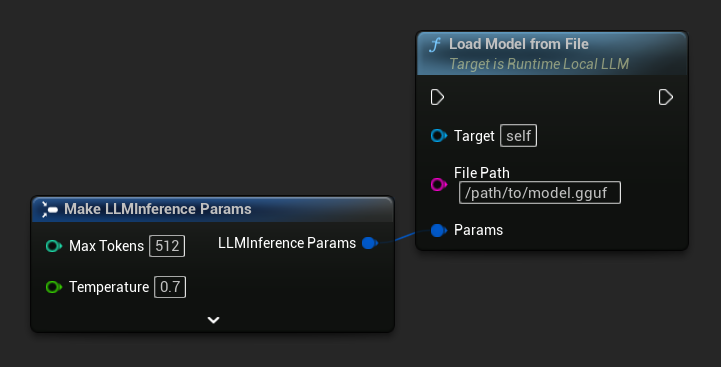

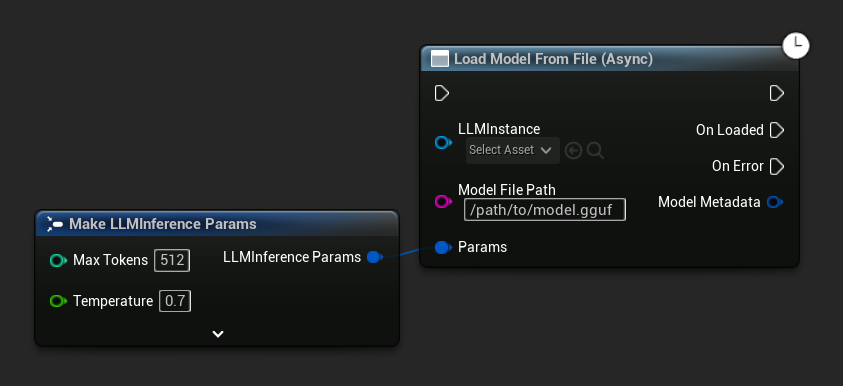

Loading from a file path

Load a model directly from the absolute path of a .gguf file:

- Blueprint

- C++

FLLMInferenceParams Params;

LLM->LoadModelFromFile(TEXT("/path/to/model.gguf"), Params);

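Loading from a URL (download and load)

Downloads the model from a URL if it is not already on the local disk, then loads it automatically. If the file already exists locally, the download is skipped.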

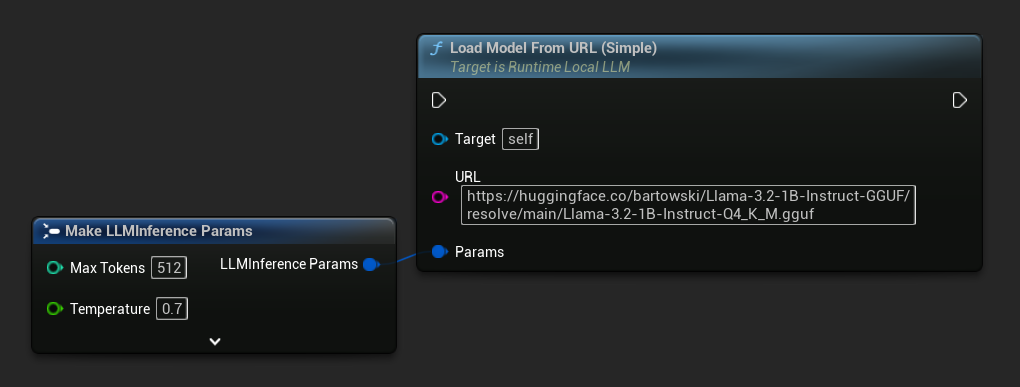

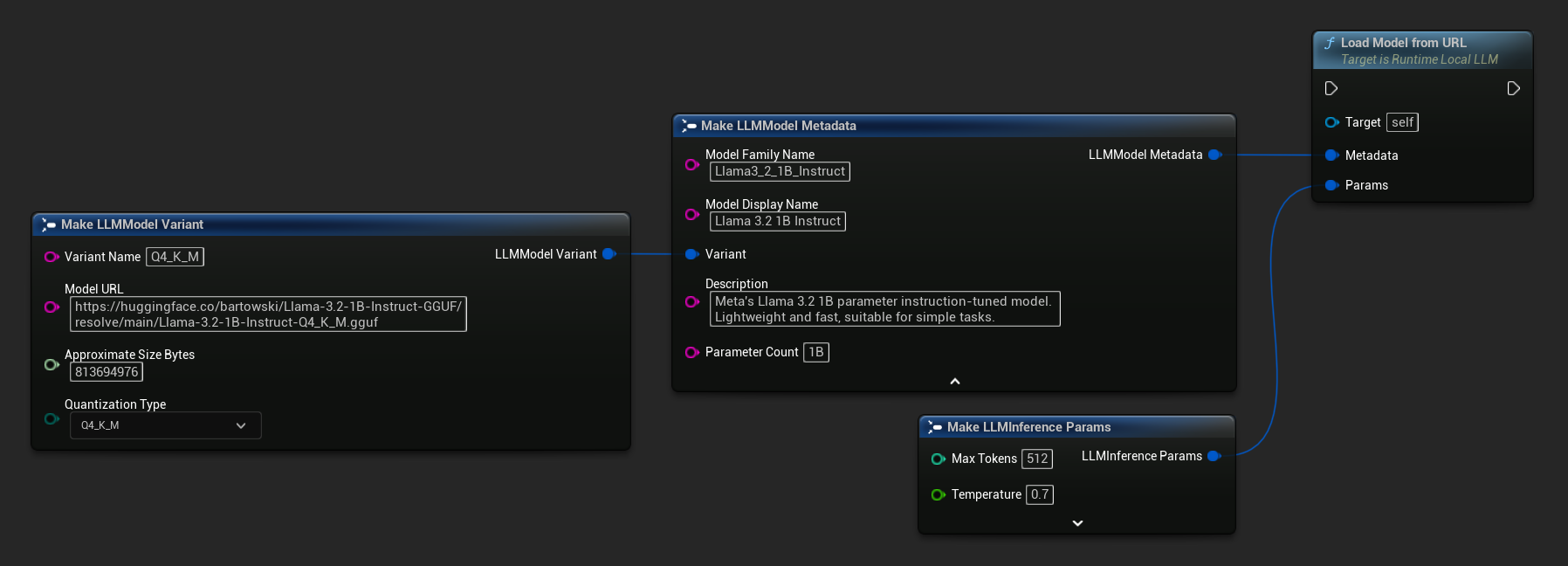

- Blueprint

- C++

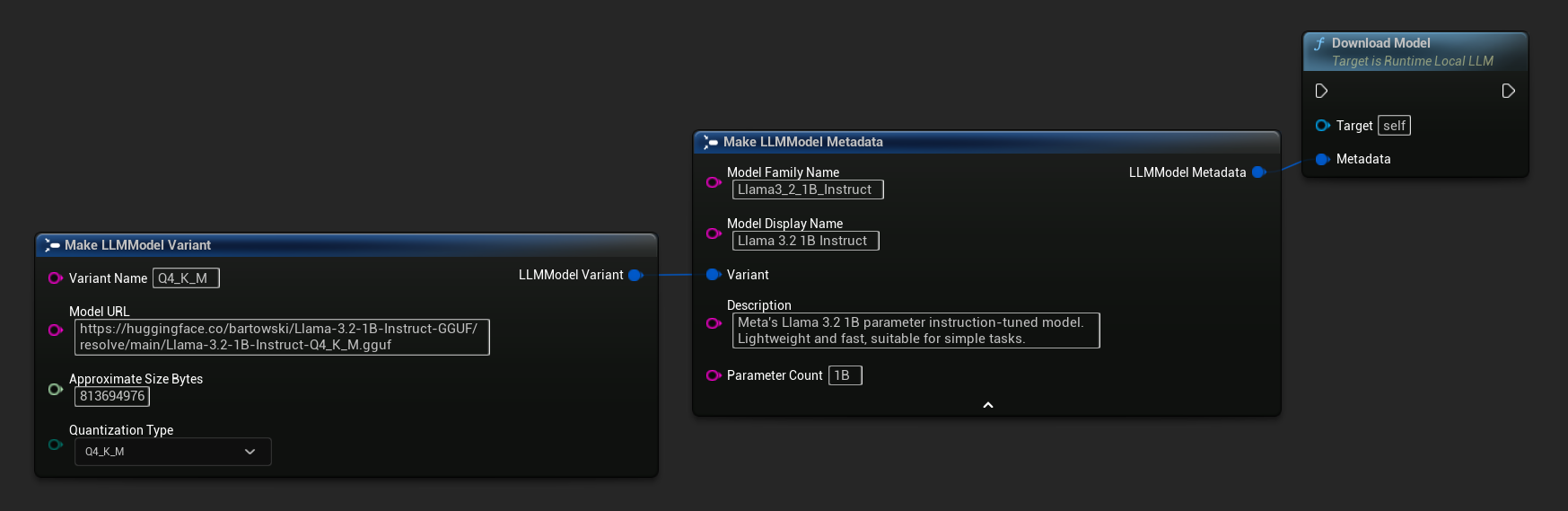

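The simplest variant takes only a URL; the metadata is derived from the file name:

You can also use Load Model From URL with full model metadata for richer model information: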

FLLMInferenceParams Params;

// Simple: URL only - metadata is derived from the filename

LLM->LoadModelFromURLSimple(

TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf"), Params);

// With full metadata

FLLMModelMetadata Metadata;

Metadata.ModelFamilyName = TEXT("Llama3_2_1B_Instruct");

Metadata.ModelDisplayName = TEXT("Llama 3.2 1B Instruct");

Metadata.Description = TEXT("Meta's Llama 3.2 1B parameter instruction-tuned model. Lightweight and fast, suitable for simple tasks.");

Metadata.ParameterCount = TEXT("1B");

Metadata.Variant.VariantName = TEXT("Q4_K_M");

Metadata.Variant.ModelURL = TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf");

Metadata.Variant.ApproximateSizeBytes = 776LL * 1024 * 1024;

Metadata.Variant.QuantizationType = ELLMQuantizationType::Q4_K_M;

LLM->LoadModelFromURL(Metadata, Params);

Async loading (Blueprint)

Two async nodes are available for handling load completion and errors through output pins instead of binding delegates manually.

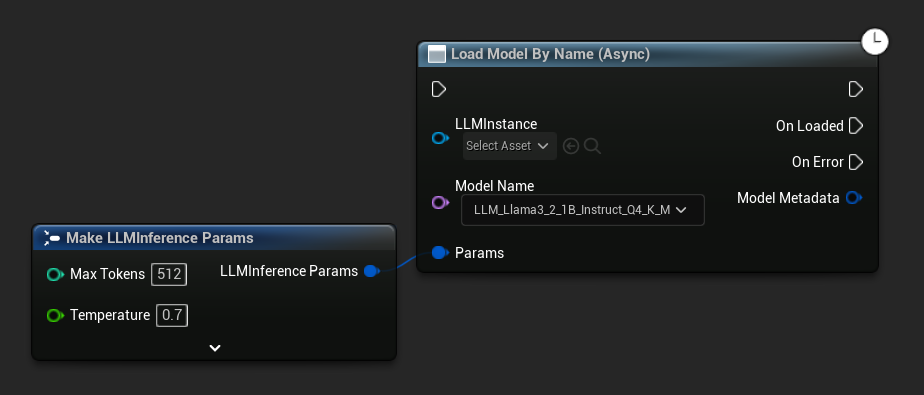

Load Model By Name (Async) mirrors Load Model (By Name): in UE 5.4+ it shows a dropdown of all models on disk:

- UE 5.4+

- UE 5.3 and earlier

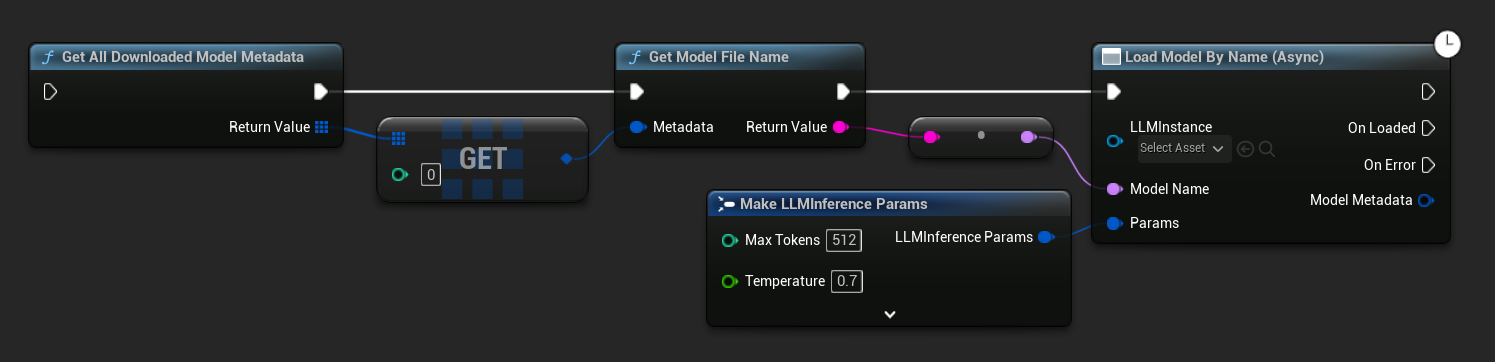

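In UE 5.3 and earlier the dropdown does not appear. Call Get All Downloaded Model Metadata, take the element at index 0 (or whichever model you need), pass it to Get Model File Name, and pass the result to Load Model By Name (Async).

Load Model From File (Async) takes an absolute file path instead: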

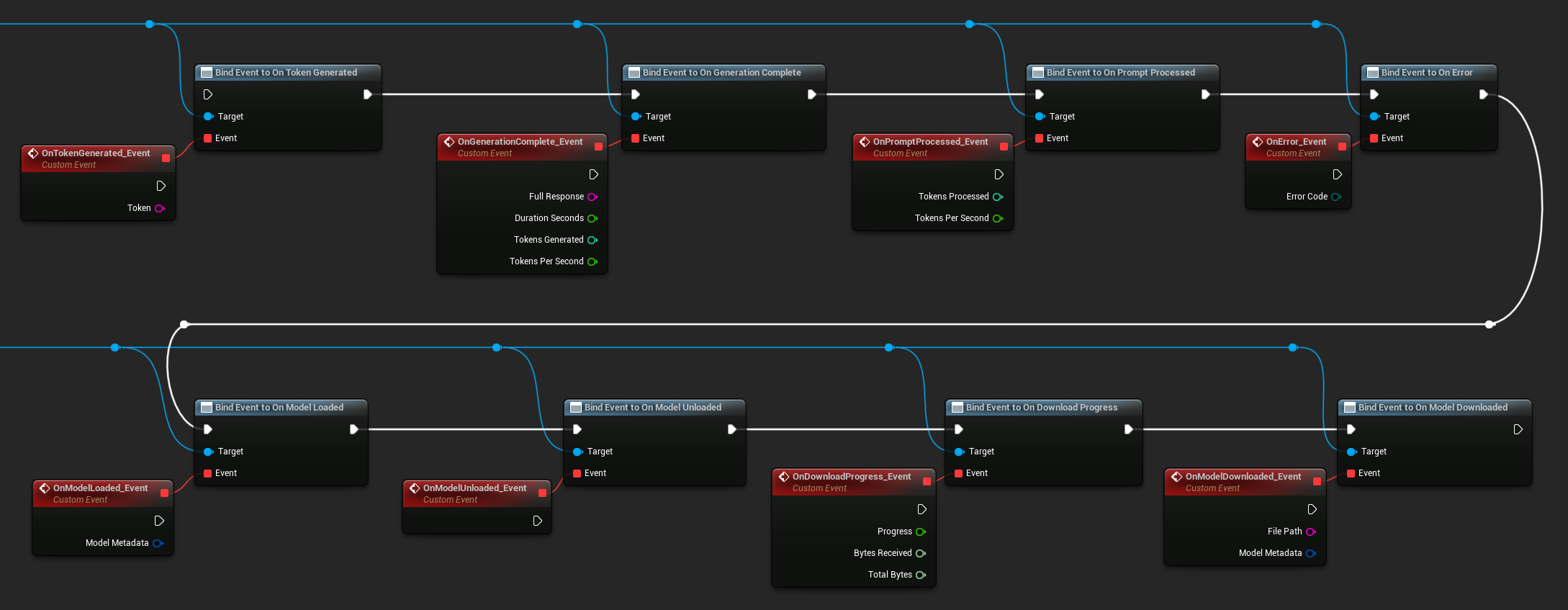

Binding events

Bind to the LLM instance's delegates to receive callbacks. All callbacks fire on the game thread.

- Blueprint

- C++

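Available delegates:

- On Token Generated: fires for each output token

- On Generation Complete: fires when the full response is ready, with the duration, token count, and tokens per second

- On Prompt Processed: fires after the input prompt has been processed and before generation starts

- On Error: fires if an error occurs during any operation

- On Model Loaded: fires when a model finishes loading

- On Model Unloaded: fires when the model is unloaded

- On Download Progress: fires periodically during a model download (progress fraction, bytes received, total bytes)

- On Model Downloaded: fires when a download-only operation completes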

LLM->OnTokenGeneratedNative.AddLambda([](const FString& Token)

{

});

LLM->OnGenerationCompleteNative.AddLambda([](const FString& FullResponse)

{

});

LLM->OnPromptProcessedNative.AddLambda([]()

{

});

LLM->OnErrorNative.AddLambda([](const FString& ErrorMessage)

{

});

LLM->OnModelLoadedNative.AddLambda([](const FString& ModelName)

{

});

LLM->OnModelUnloadedNative.AddLambda([](const FString& ModelName)

{

});

LLM->OnDownloadProgressNative.AddLambda([](const FString& ModelName, float Progress)

{

});

LLM->OnModelDownloadedNative.AddLambda([](const FString& ModelName)

{

});

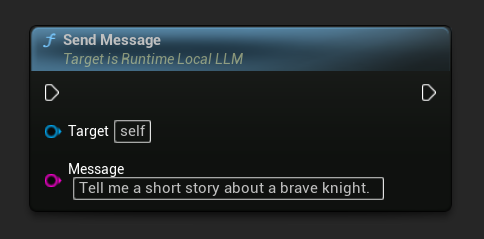

Sending messages

Once a model is loaded, send a user message to generate a response:

- Blueprint

- C++

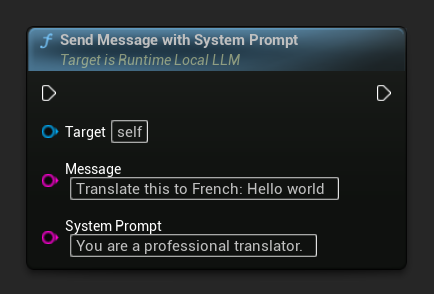

To override the system prompt for a specific message, use Send Message With System Prompt:

LLM->SendMessage(TEXT("Tell me a short story about a brave knight."));

// With a custom system prompt override

LLM->SendMessageWithSystemPrompt(

TEXT("Translate this to French: Hello world"),

TEXT("You are a professional translator.")

);

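Generated tokens are streamed in real time through OnTokenGenerated. When generation finishes, the OnGenerationComplete event fires with the full response, duration, token count, and tokens per second.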
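To make the relationship between the streamed tokens and the completion figures concrete, here is a minimal stand-alone sketch in plain C++. `FGenerationStats` is a hypothetical helper, not part of the plugin, and it assumes that tokens per second is simply the token count divided by the elapsed time in seconds; the plugin reports its own figures and may measure them differently.

```cpp
#include <cstddef>
#include <string>

// Hypothetical helper; the plugin computes and reports these values itself.
struct FGenerationStats
{
    std::string FullResponse;
    std::size_t TokenCount = 0;

    // Call once per streamed token, in arrival order.
    void AddToken(const std::string& Token)
    {
        FullResponse += Token;
        ++TokenCount;
    }

    // Throughput, assuming tokens divided by elapsed seconds.
    double TokensPerSecond(double DurationSeconds) const
    {
        return DurationSeconds > 0.0
            ? static_cast<double>(TokenCount) / DurationSeconds
            : 0.0;
    }
};
```

Feeding each streamed token into `AddToken` rebuilds the same text that arrives as the full response on completion, which is handy for UI code that displays partial output while generation is still running.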

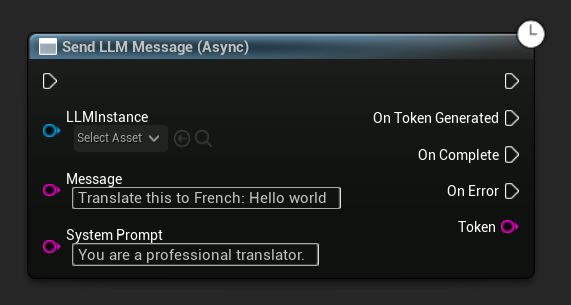

Async Send Message (Blueprint)

The Send LLM Message (Async) node provides dedicated output pins for tokens, completion, and errors:

Downloading models at runtime

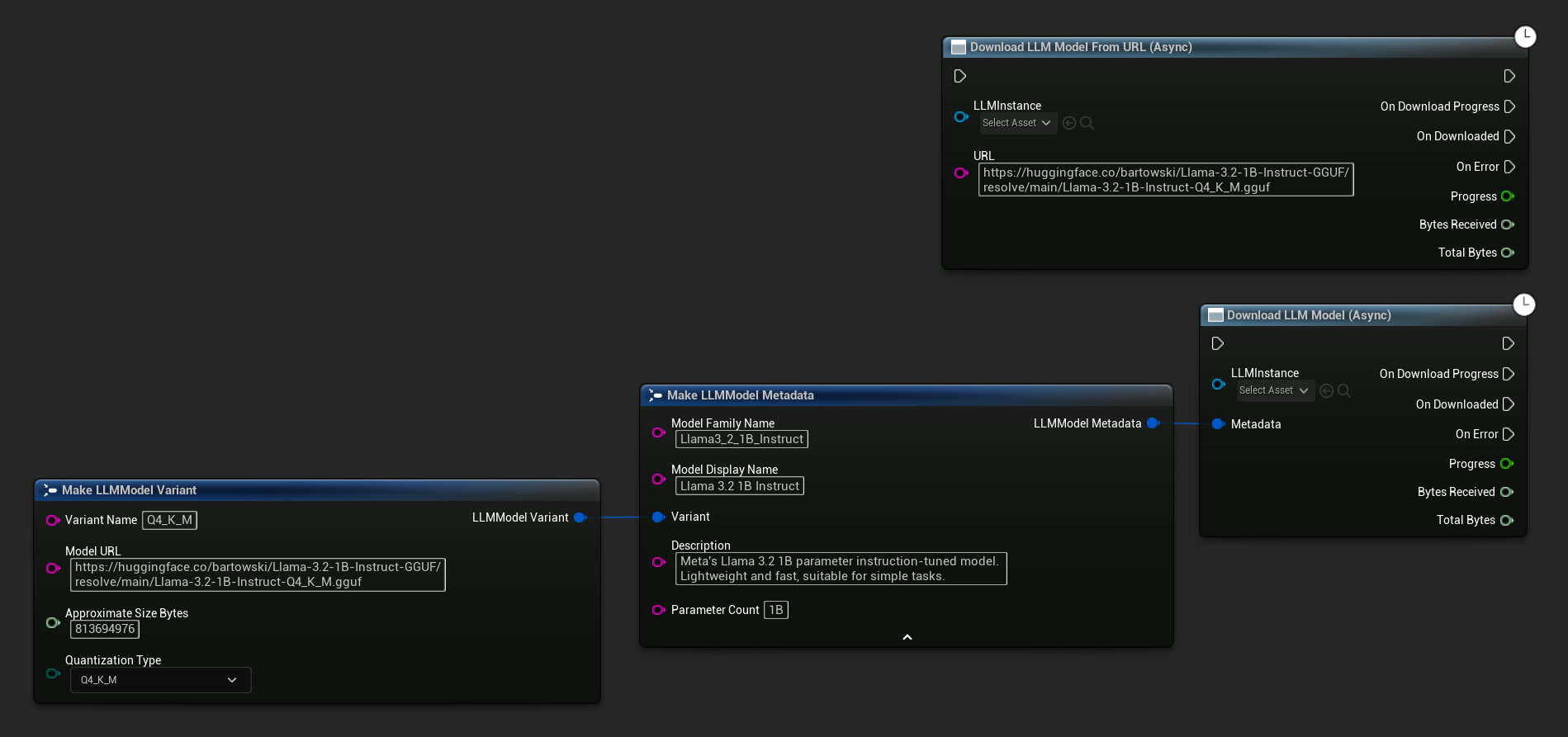

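In addition to the download-and-load flow described above, you can download a model to disk without loading it. This is useful for pre-caching models during a loading screen or from a settings menu.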

- Blueprint

- C++

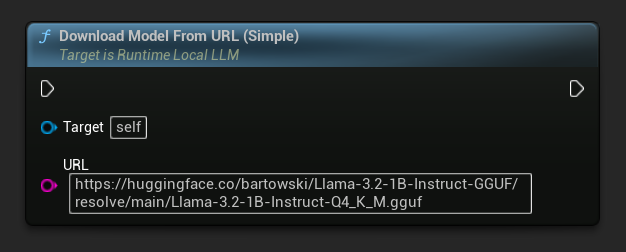

A URL-only variant is also available:

The Download LLM Model (Async) and Download LLM Model From URL (Async) nodes provide output pins for progress, completion, and errors:

// With full metadata

FLLMModelMetadata Metadata;

Metadata.ModelFamilyName = TEXT("Llama3_2_1B_Instruct");

Metadata.ModelDisplayName = TEXT("Llama 3.2 1B Instruct");

Metadata.Description = TEXT("Meta's Llama 3.2 1B parameter instruction-tuned model. Lightweight and fast, suitable for simple tasks.");

Metadata.ParameterCount = TEXT("1B");

Metadata.Variant.VariantName = TEXT("Q4_K_M");

Metadata.Variant.ModelURL = TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf");

Metadata.Variant.ApproximateSizeBytes = 776LL * 1024 * 1024;

Metadata.Variant.QuantizationType = ELLMQuantizationType::Q4_K_M;

LLM->DownloadModel(Metadata);

// URL only

LLM->DownloadModelFromURL(

TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf"));

The OnDownloadProgress delegate reports download progress. OnModelDownloaded fires once the file has been saved to disk.

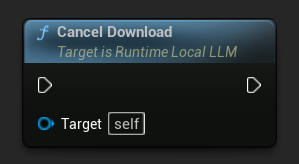

To cancel a download that is in progress:

- Blueprint

- C++

```cpp
LLM->CancelDownload();
```

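The plugin prevents duplicate downloads automatically: if a download of the same model is already in progress, subsequent calls are ignored.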
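The duplicate-download rule can be illustrated with a small guard that tracks which downloads are in flight. This is a stand-alone sketch in standard C++; `FDownloadGuard` and keying by URL string are assumptions made for illustration, and the plugin may identify models differently.

```cpp
#include <string>
#include <unordered_set>

// Hypothetical sketch of an "already in flight" guard, keyed by model URL.
class FDownloadGuard
{
public:
    // Returns true if the caller may start the download,
    // false if a download for the same key is already running.
    bool TryBegin(const std::string& Url)
    {
        return InFlight.insert(Url).second;
    }

    // Call when the download finishes, fails, or is cancelled.
    void End(const std::string& Url)
    {
        InFlight.erase(Url);
    }

private:
    std::unordered_set<std::string> InFlight;
};
```

A second `TryBegin` for the same URL returns false until `End` has been called, which mirrors the "subsequent calls are ignored" behaviour.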

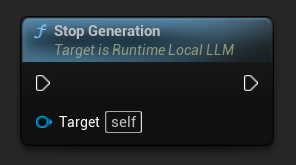

Stopping generation

To interrupt a generation that is in progress:

- Blueprint

- C++

LLM->StopGeneration();

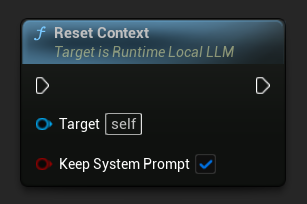

Resetting the conversation context

Clear the conversation history to start a new conversation:

- Blueprint

- C++

```cpp
// Keep the system prompt
LLM->ResetContext(true);

// Clear everything including the system prompt
LLM->ResetContext(false);
```
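The difference between the two reset modes can be modelled with a tiny conversation container. This is a stand-alone sketch in standard C++; `FConversation` and `FChatMessage` are hypothetical types, and how the plugin stores its context internally is not documented here.

```cpp
#include <string>
#include <vector>

// Hypothetical message record: who spoke and what was said.
struct FChatMessage
{
    std::string Role;
    std::string Text;
};

// Hypothetical model of conversation state; the plugin's internals may differ.
struct FConversation
{
    std::string SystemPrompt;
    std::vector<FChatMessage> History;

    // Clears the message history; optionally also clears the system prompt.
    void Reset(bool bKeepSystemPrompt)
    {
        History.clear();
        if (!bKeepSystemPrompt)
        {
            SystemPrompt.clear();
        }
    }
};
```

In this model, `Reset(true)` leaves the assistant's persona in place for the next conversation, while `Reset(false)` returns to a completely blank state.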

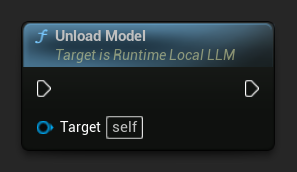

Unloading the model

Free resources when the model is no longer needed:

- Blueprint

- C++

LLM->UnloadModel();

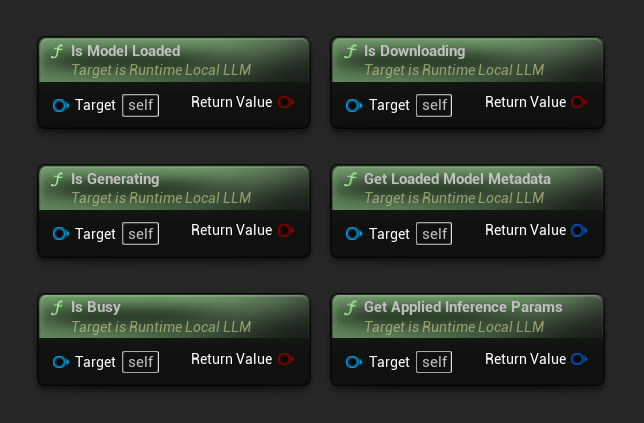

Querying state

Check the current state of the LLM instance:

- Blueprint

- C++

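- Is Model Loaded: true if a model is ready for inference

- Is Generating: true if generation is in progress

- Is Busy: true if any operation (loading, generating, downloading) is in progress

- Is Downloading: true if a model download is in progress

- Get Loaded Model Metadata: returns the metadata of the current model

- Get Applied Inference Params: returns the parameters applied at load time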

// Is Model Loaded - true if a model is ready for inference

if (LLM->IsModelLoaded())

{

FLLMModelMetadata Metadata = LLM->GetLoadedModelMetadata();

UE_LOG(LogTemp, Log, TEXT("Model: %s"), *Metadata.ModelDisplayName);

FLLMInferenceParams Params = LLM->GetAppliedInferenceParams();

UE_LOG(LogTemp, Log, TEXT("Context size: %d"), Params.ContextSize);

}

// Is Generating - true if token generation is currently active

if (LLM->IsGenerating())

{

UE_LOG(LogTemp, Log, TEXT("Generation in progress..."));

}

// Is Busy - true if any operation (loading, generating, downloading) is active

if (LLM->IsBusy())

{

UE_LOG(LogTemp, Log, TEXT("LLM is busy, deferring request"));

}

// Is Downloading - true if a model download is currently in progress

if (LLM->IsDownloading())

{

UE_LOG(LogTemp, Log, TEXT("Model download in progress..."));

}

// Safe to send a new message or load a different model

if (!LLM->IsGenerating() && !LLM->IsBusy())

{

UE_LOG(LogTemp, Log, TEXT("LLM is idle and ready"));

}
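Going by the description of Is Busy (true while loading, generating, or downloading), the flags relate to each other roughly as in the sketch below. It is stand-alone standard C++ with a hypothetical `FLLMState` struct; it models the documented meaning of the flags, not the plugin's actual fields.

```cpp
// Hypothetical state model built from the flag descriptions in this section.
struct FLLMState
{
    bool bLoading = false;
    bool bGenerating = false;
    bool bDownloading = false;

    bool IsGenerating() const { return bGenerating; }
    bool IsDownloading() const { return bDownloading; }

    // Busy covers every long-running operation.
    bool IsBusy() const { return bLoading || bGenerating || bDownloading; }
};
```

Under that reading, `!IsBusy()` already implies `!IsGenerating()`, so checking both is harmless but redundant.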

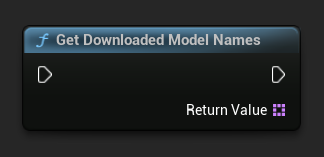

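Model library functions

A set of static utility functions is provided for managing model files on disk. They are useful when building a model selection UI or checking model availability at runtime.

Getting downloaded model names / metadata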

- Blueprint

- C++

TArray<FName> ModelNames = URuntimeLLMLibrary::GetDownloadedModelNames();

TArray<FLLMModelMetadata> AllModels = URuntimeLLMLibrary::GetAllDownloadedModelMetadata();

for (const FLLMModelMetadata& Model : AllModels)

{

UE_LOG(LogTemp, Log, TEXT("Model: %s (%s)"), *Model.ModelDisplayName, *Model.Variant.VariantName);

}

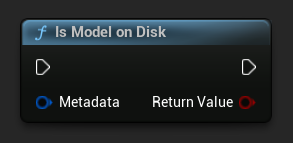

Checking whether a model is on disk

- Blueprint

- C++

bool bExists = URuntimeLLMLibrary::IsModelOnDisk(Metadata);

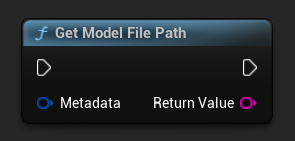

Getting the model file path

- Blueprint

- C++

```cpp
FString FilePath = URuntimeLLMLibrary::GetModelFilePath(Metadata);
```

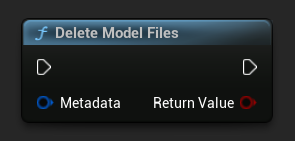

Deleting model files

- Blueprint

- C++

bool bDeleted = URuntimeLLMLibrary::DeleteModelFiles(Metadata);

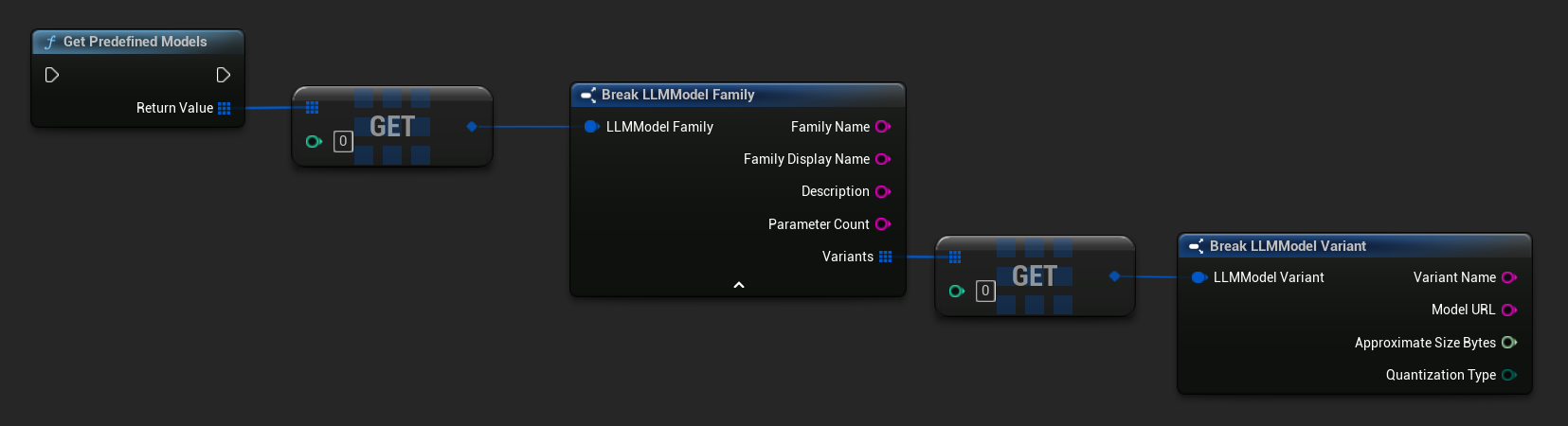

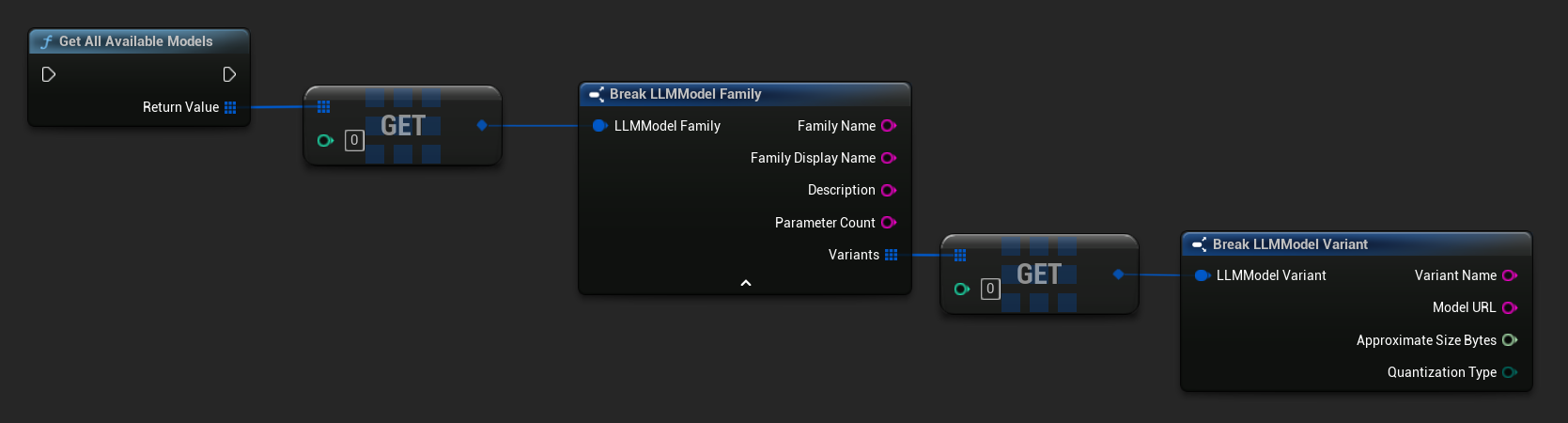

Getting predefined and available models

- Blueprint

- C++

// Built-in catalog only

TArray<FLLMModelFamily> Predefined = URuntimeLLMLibrary::GetPredefinedModels();

// Catalog + custom imports

TArray<FLLMModelFamily> All = URuntimeLLMLibrary::GetAllAvailableModels();

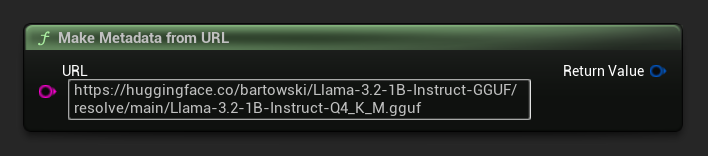

Building metadata from a URL

Build model metadata from a raw URL (the fields are derived from the file name):

- Blueprint

- C++

FLLMModelMetadata Metadata = URuntimeLocalLLM::MakeMetadataFromURL(

TEXT("https://huggingface.co/bartowski/Llama-3.2-1B-Instruct-GGUF/resolve/main/Llama-3.2-1B-Instruct-Q4_K_M.gguf")

);
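The exact rules the plugin uses to derive fields from the file name are not spelled out here. As a rough illustration only, the sketch below shows one plausible way to pull a file name, a base name, and a quantization-style suffix out of a URL using plain standard C++. `FDerivedNames` and `DeriveNamesFromURL` are hypothetical names, and treating the text after the last `-` as the variant is an assumption based on the example URL.

```cpp
#include <cstddef>
#include <string>

// Hypothetical result type; the plugin's metadata struct has more fields.
struct FDerivedNames
{
    std::string FileName;  // last path segment of the URL
    std::string BaseName;  // file name without its extension
    std::string Variant;   // text after the last '-' in BaseName (assumed convention)
};

inline FDerivedNames DeriveNamesFromURL(const std::string& Url)
{
    FDerivedNames Out;

    // Drop any query string or fragment before looking for the file name.
    const std::string Path = Url.substr(0, Url.find_first_of("?#"));

    const std::size_t Slash = Path.find_last_of('/');
    Out.FileName = (Slash == std::string::npos) ? Path : Path.substr(Slash + 1);

    // substr(0, npos) returns the whole string when there is no extension.
    Out.BaseName = Out.FileName.substr(0, Out.FileName.find_last_of('.'));

    const std::size_t Dash = Out.BaseName.find_last_of('-');
    Out.Variant = (Dash == std::string::npos) ? std::string() : Out.BaseName.substr(Dash + 1);
    return Out;
}
```

For the example URL used in this guide, this sketch yields the file name `Llama-3.2-1B-Instruct-Q4_K_M.gguf` and the variant `Q4_K_M`.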

Utility functions

A set of helper functions is provided for formatting and error display.

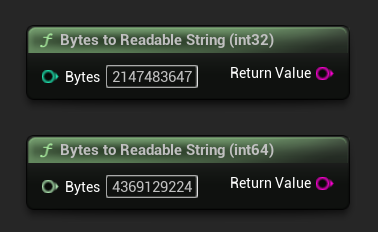

Bytes to readable string

Converts a byte count into a human-readable string (for example, "4.07 GB"). Commonly used to display model sizes in the UI.
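For readers who want to see what such a conversion involves, here is a stand-alone sketch in standard C++. `FormatBytes` is a hypothetical function, not the plugin's node; it assumes 1024-based units and two decimal places, which matches the style of the "4.07 GB" example but is not confirmed by the source.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

// Hypothetical formatter: 1024-based units, two decimals above plain bytes.
inline std::string FormatBytes(std::uint64_t Bytes)
{
    static const char* const Units[] = {"B", "KB", "MB", "GB", "TB"};
    double Value = static_cast<double>(Bytes);
    int Unit = 0;

    // Step up one unit at a time until the value drops below 1024.
    while (Value >= 1024.0 && Unit < 4)
    {
        Value /= 1024.0;
        ++Unit;
    }

    char Buffer[32];
    if (Unit == 0)
    {
        std::snprintf(Buffer, sizeof(Buffer), "%.0f %s", Value, Units[Unit]);
    }
    else
    {
        std::snprintf(Buffer, sizeof(Buffer), "%.2f %s", Value, Units[Unit]);
    }
    return Buffer;
}
```

With these assumptions, the 776 MiB size used in the metadata examples formats as `776.00 MB`.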

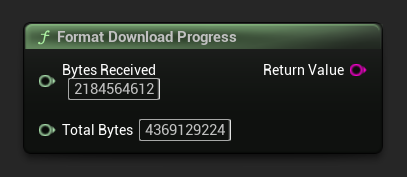

Format download progress

Formats a download progress string such as "1.23 GB / 4.07 GB (30.2%)". If the total size is unknown, only the amount received is returned.
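The format described above can be reproduced with a short stand-alone sketch in standard C++. `HumanSize` and `FormatProgress` are hypothetical names; the sketch assumes 1024-based units and treats a total of 0 as "unknown", neither of which is confirmed by the source.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

// Hypothetical helper: 1024-based size with two decimals (assumed convention).
inline std::string HumanSize(std::uint64_t Bytes)
{
    static const char* const Units[] = {"B", "KB", "MB", "GB", "TB"};
    double Value = static_cast<double>(Bytes);
    int Unit = 0;
    while (Value >= 1024.0 && Unit < 4)
    {
        Value /= 1024.0;
        ++Unit;
    }
    char Buffer[32];
    std::snprintf(Buffer, sizeof(Buffer), "%.2f %s", Value, Units[Unit]);
    return Buffer;
}

// Builds "received / total (percent%)", or just "received" when Total is 0.
inline std::string FormatProgress(std::uint64_t Received, std::uint64_t Total)
{
    if (Total == 0)
    {
        return HumanSize(Received);  // total size unknown
    }
    const double Percent = 100.0 * static_cast<double>(Received) / static_cast<double>(Total);
    char Tail[32];
    std::snprintf(Tail, sizeof(Tail), " (%.1f%%)", Percent);
    return HumanSize(Received) + " / " + HumanSize(Total) + Tail;
}
```

A string like this can be refreshed from a download progress callback to drive a progress label in the UI.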

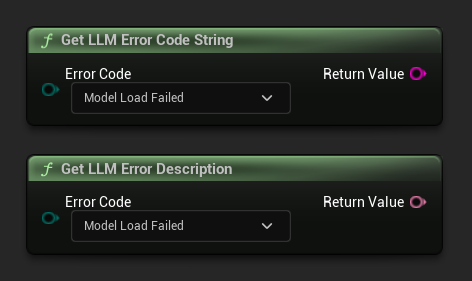

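Get error description / error code string

Get LLM Error Description returns a human-readable description of the given error code. Get LLM Error Code String returns the enum value name as a string (useful for logging).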

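Error code reference

| Code | Value | Description |
|---|---|---|
| Unknown | 0 | Unspecified error |
| ModelLoadFailed | 10 | The GGUF file failed to load (corrupted file, incompatible format, etc.) |
| ContextCreateFailed | 11 | The inference context could not be created |
| ModelNotLoaded | 20 | Inference was attempted with no model loaded |
| ChatTemplateFailed | 21 | The model's chat template could not be applied |
| TokenizationFailed | 22 | The input text could not be tokenized |
| ContextOverflow | 23 | The prompt plus existing context exceeds the configured context size |
| PromptDecodeFailed | 24 | The prompt tokens failed to decode |
| ContextTooFullToGenerate | 25 | Not enough context space left to generate output |
| GenerationDecodeFailed | 30 | A token failed to decode during generation |
| GenerationTruncated | 31 | Generation stopped because the maximum token limit was reached |
| LLMInstanceNull | 40 | The LLM instance is null or invalid |
| ModelNotFoundOnDisk | 41 | The model file does not exist at the expected path |
| ModelURLEmpty | 42 | The URL was empty when a download was requested |
| ModelDownloadCancelled | 43 | The download was cancelled |
| ModelDownloadEmptyData | 44 | The download finished but the response body was empty |
| ModelDownloadSaveFailed | 45 | The download finished but the file could not be saved to disk |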